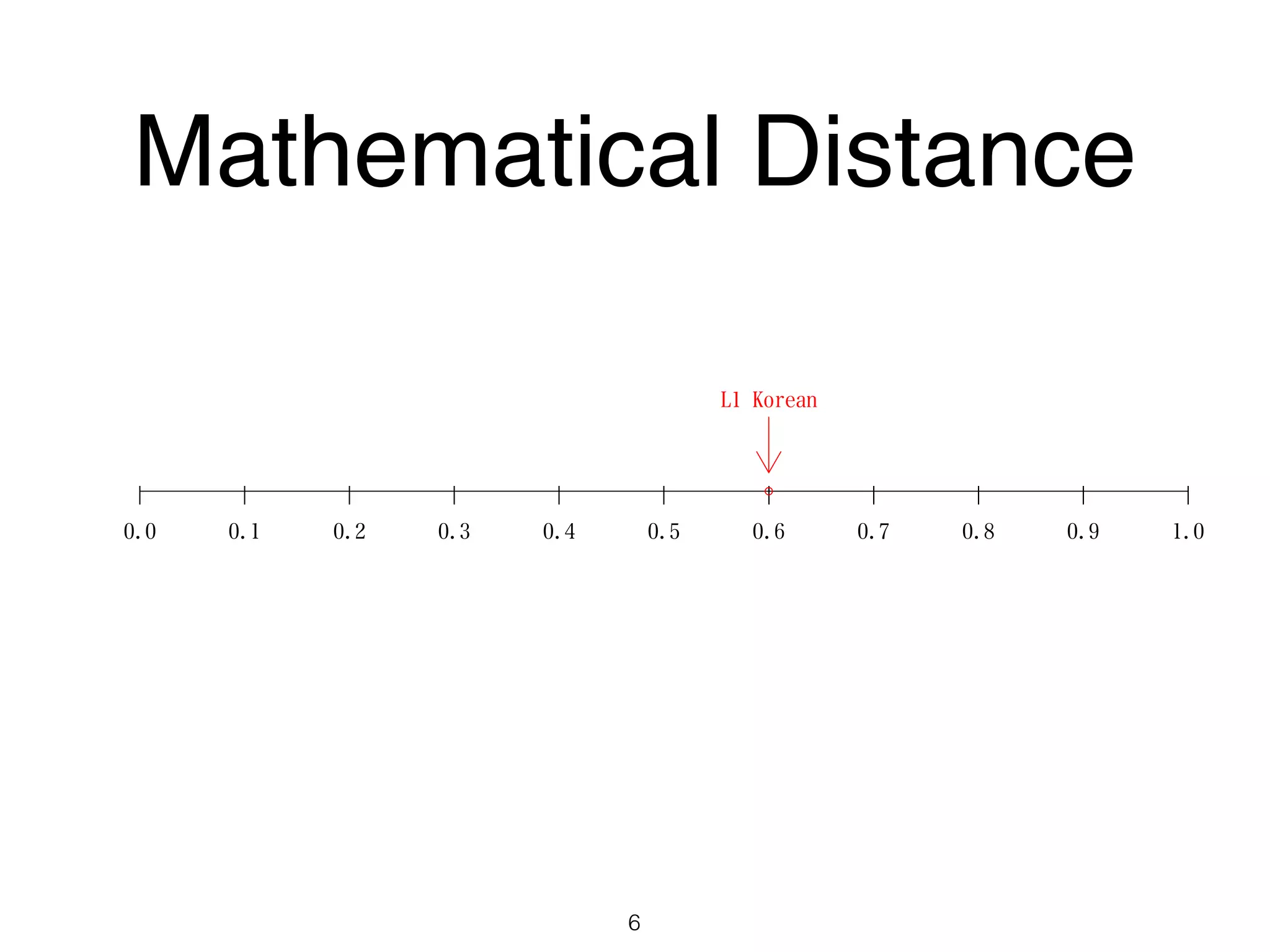

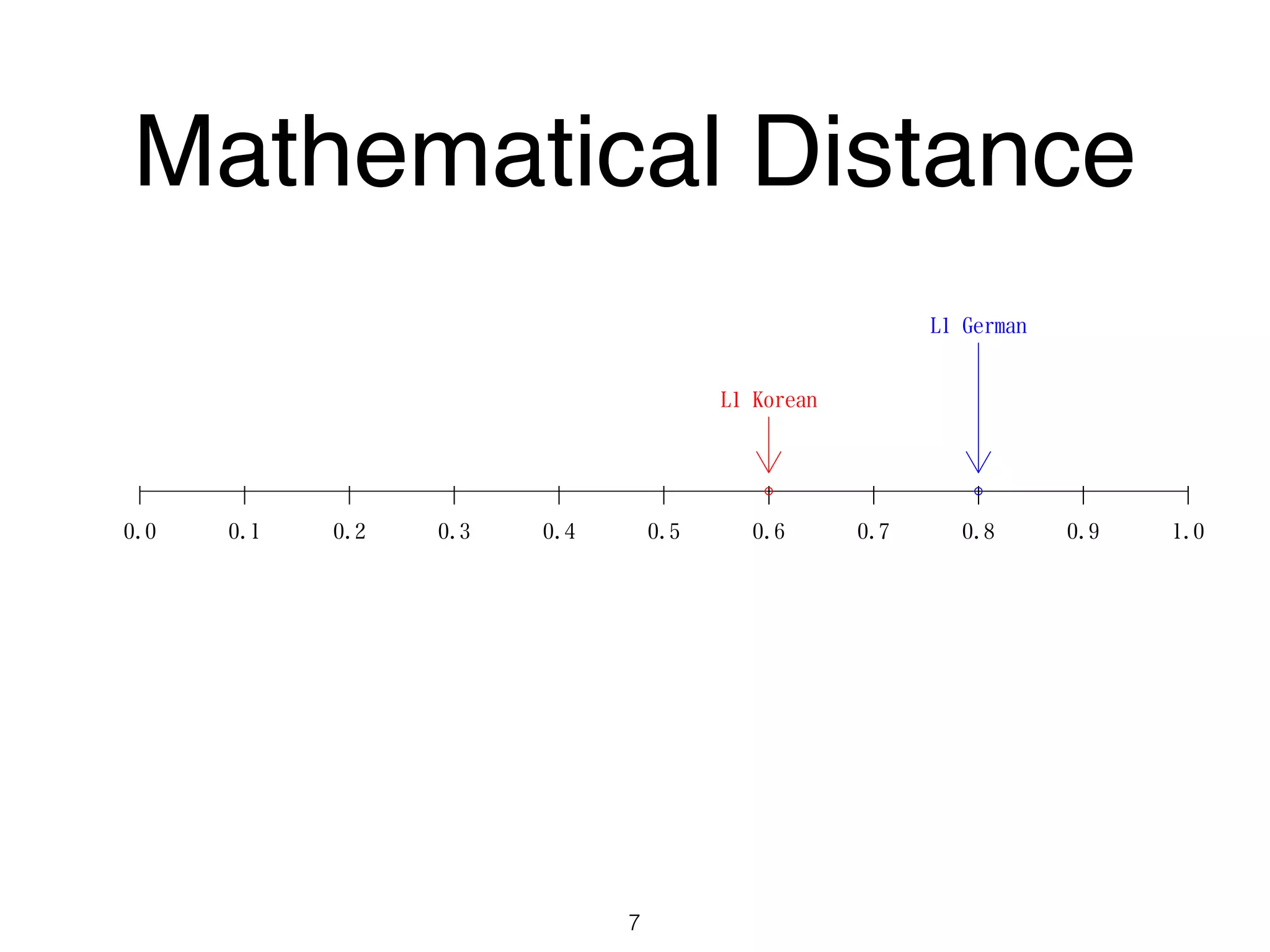

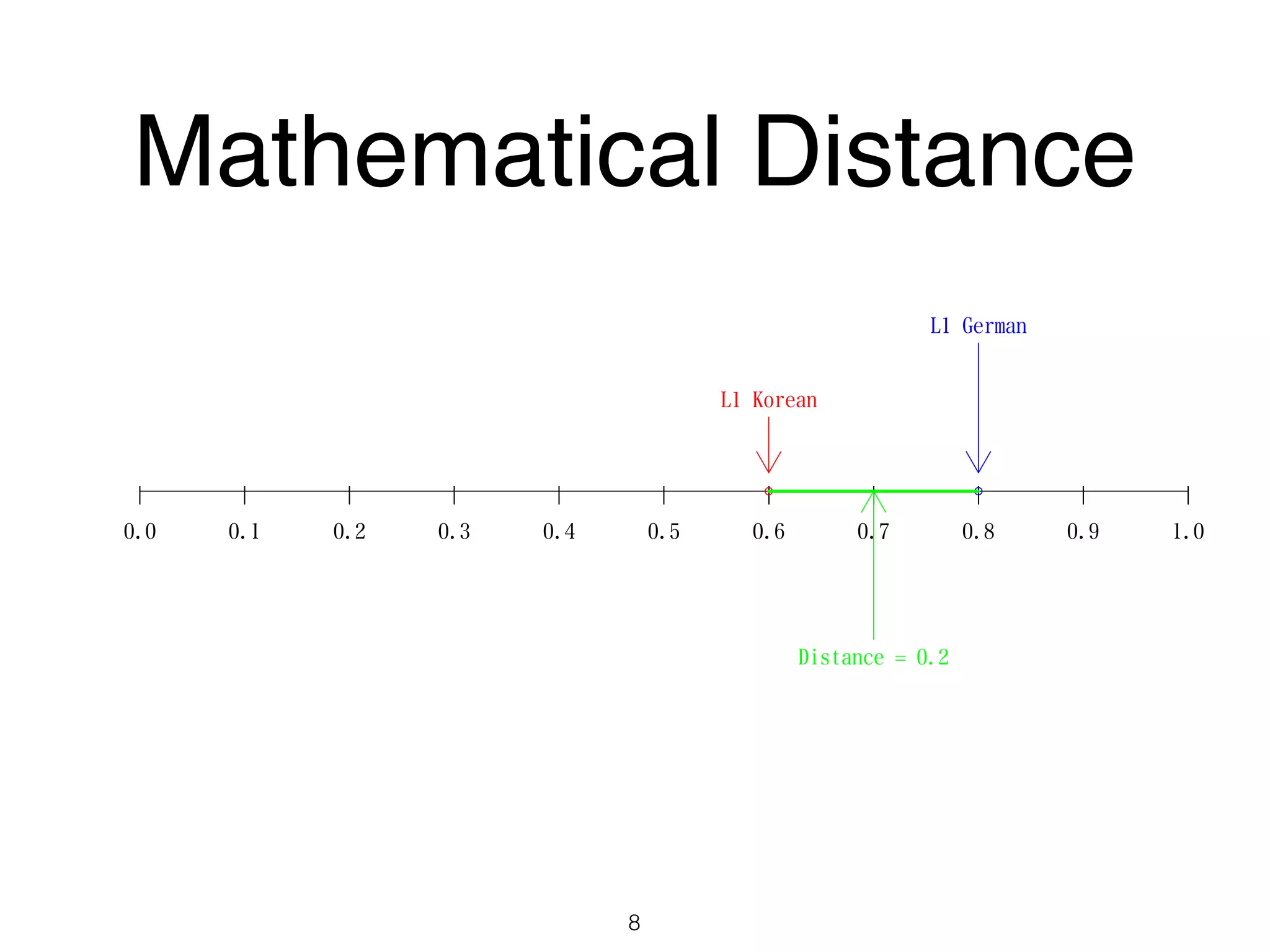

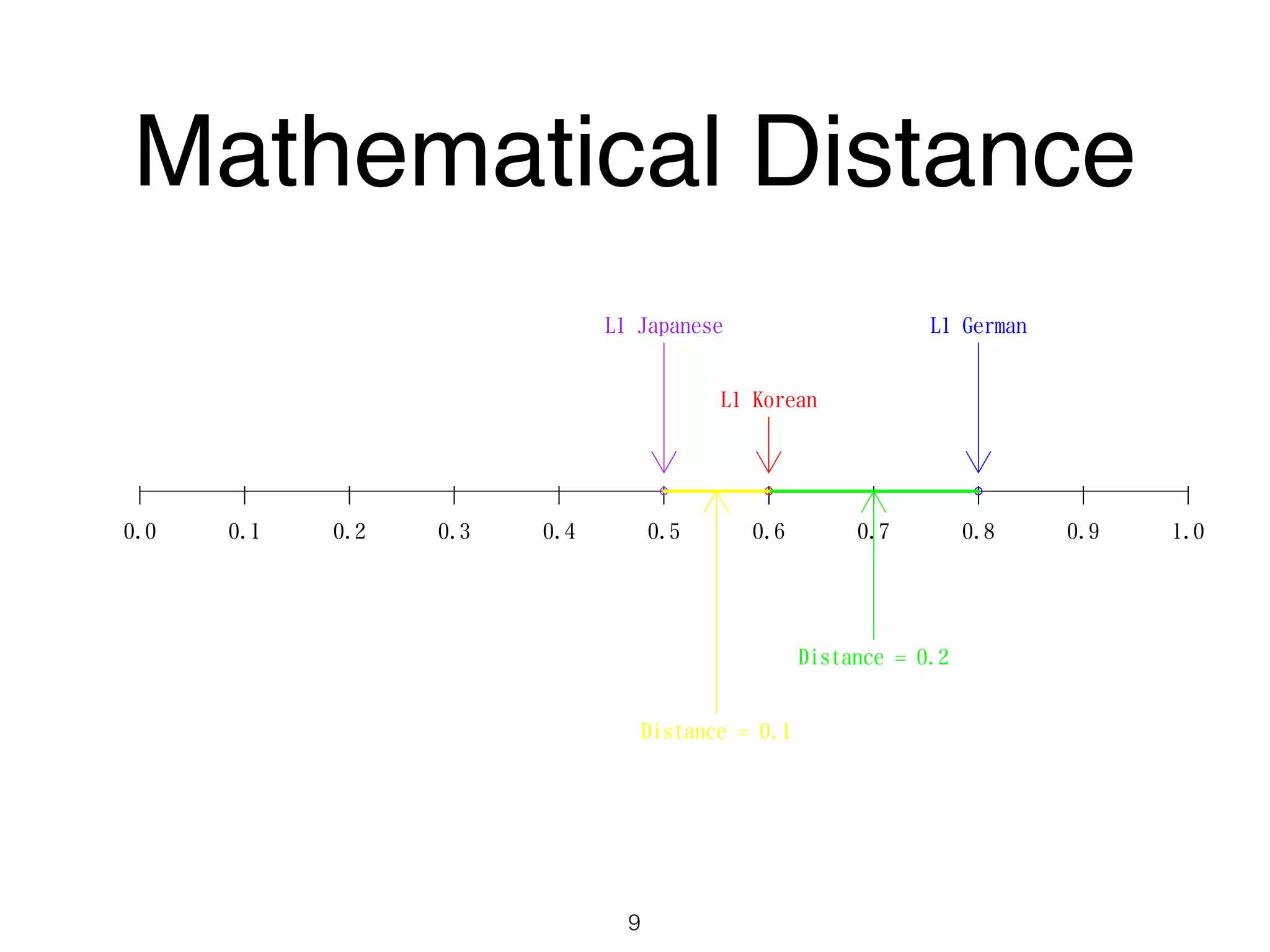

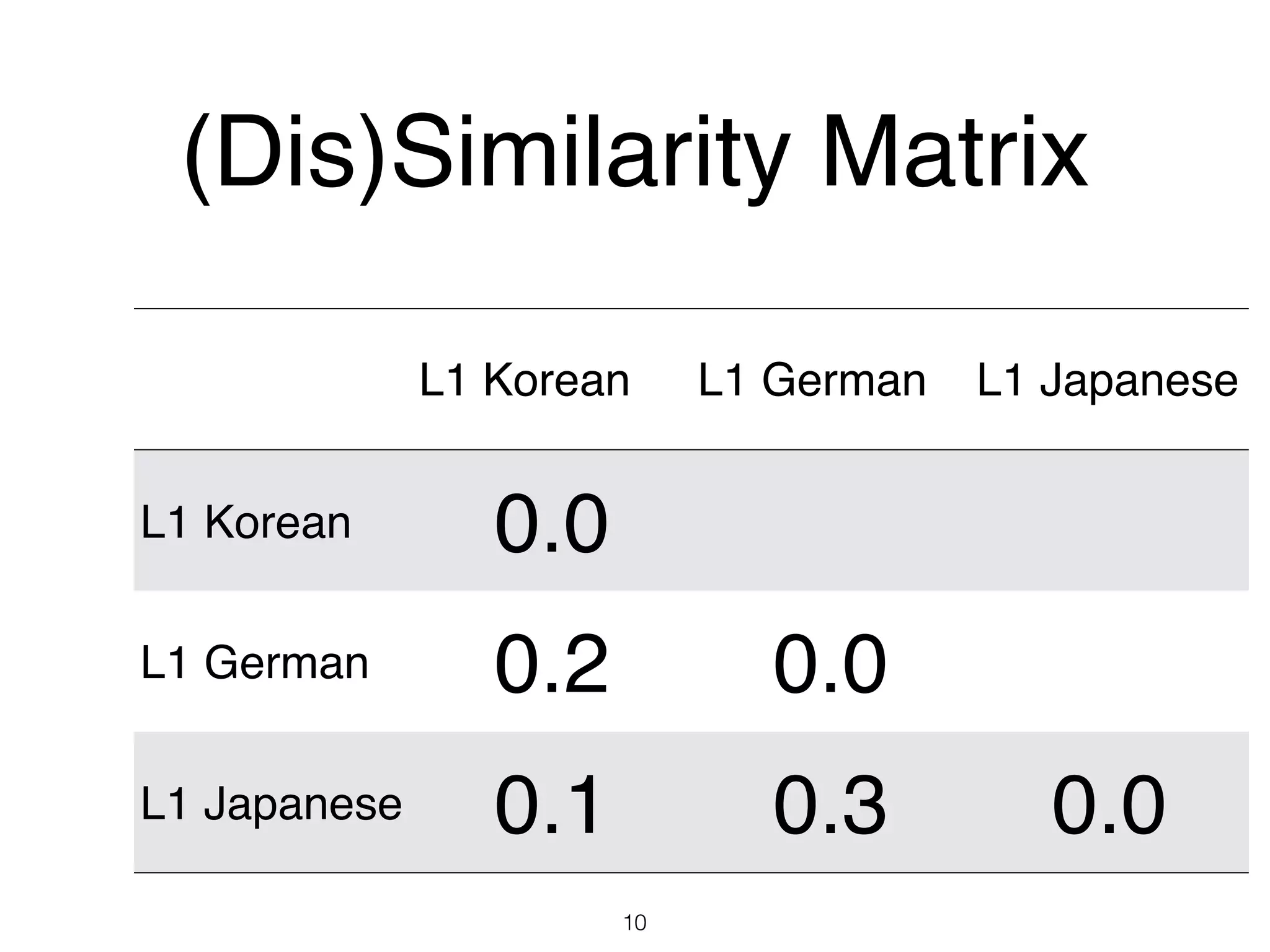

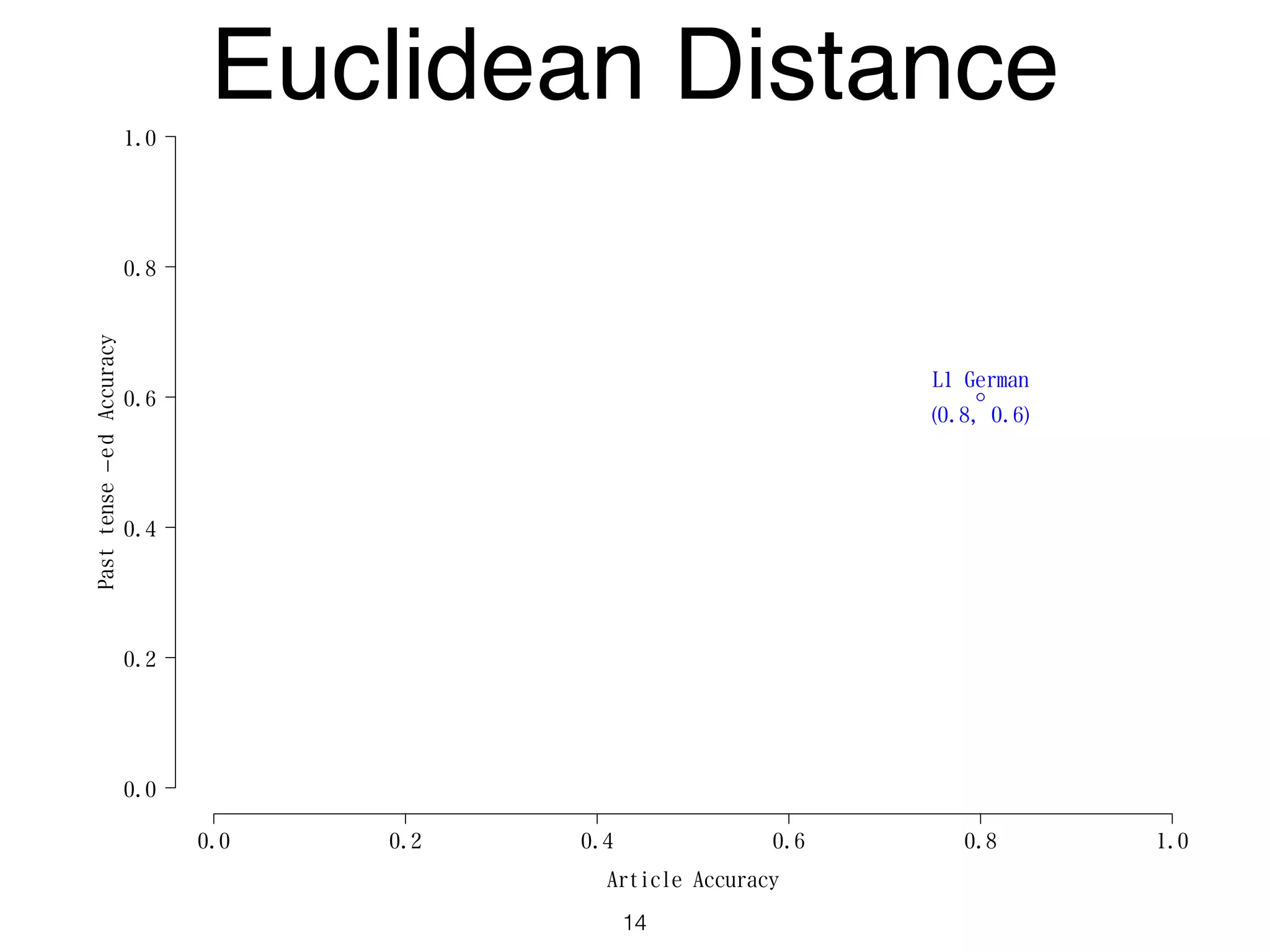

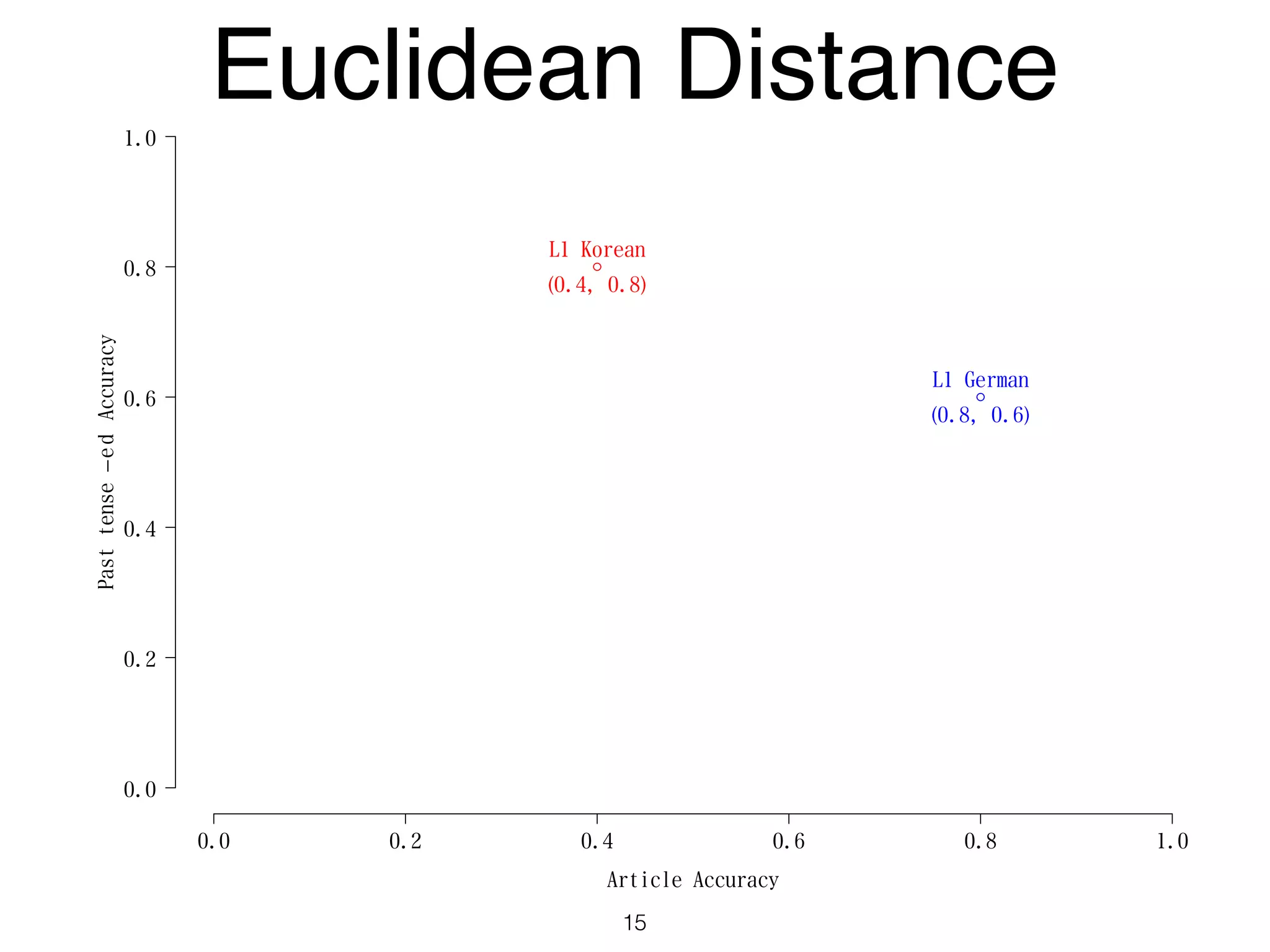

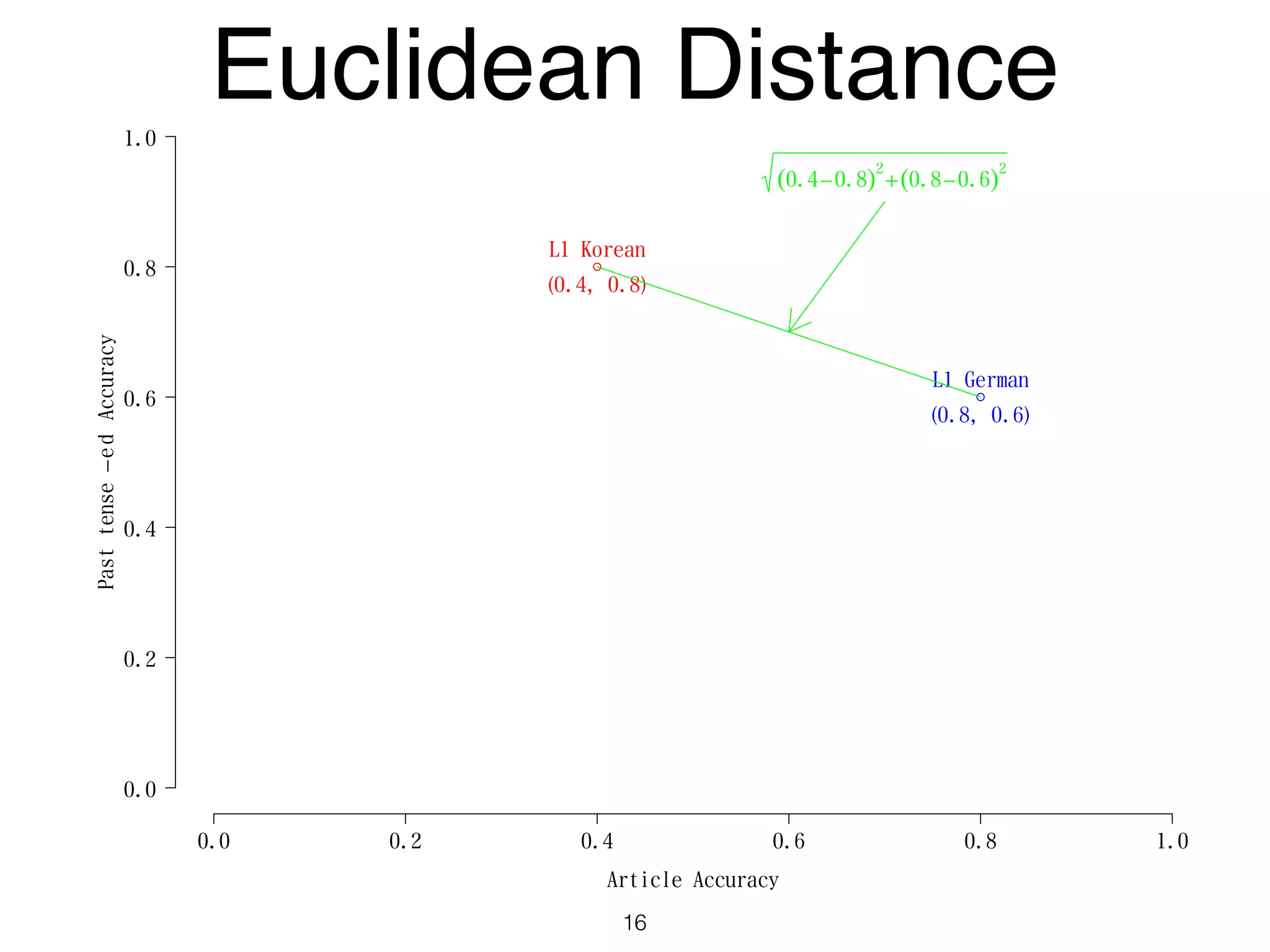

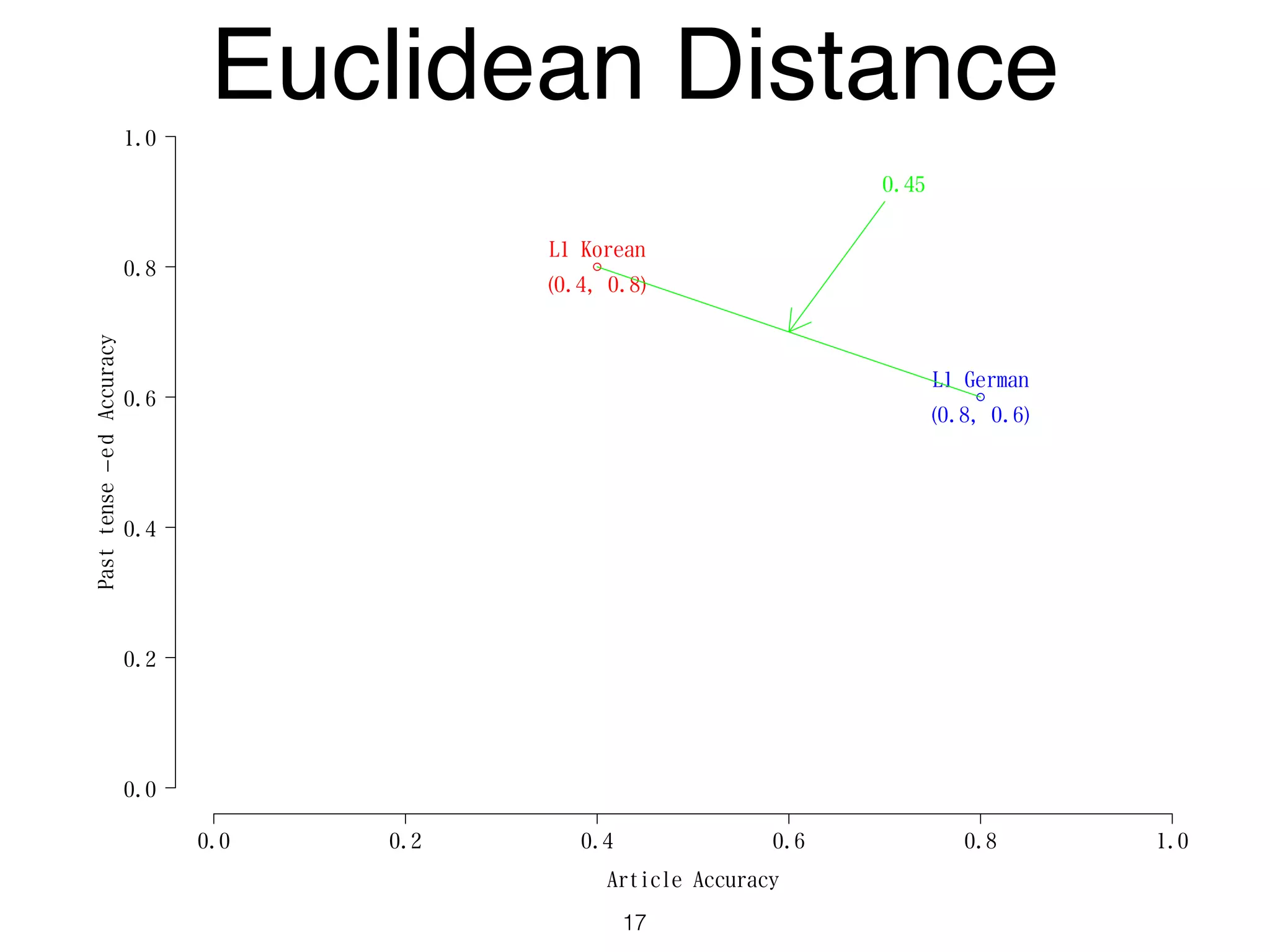

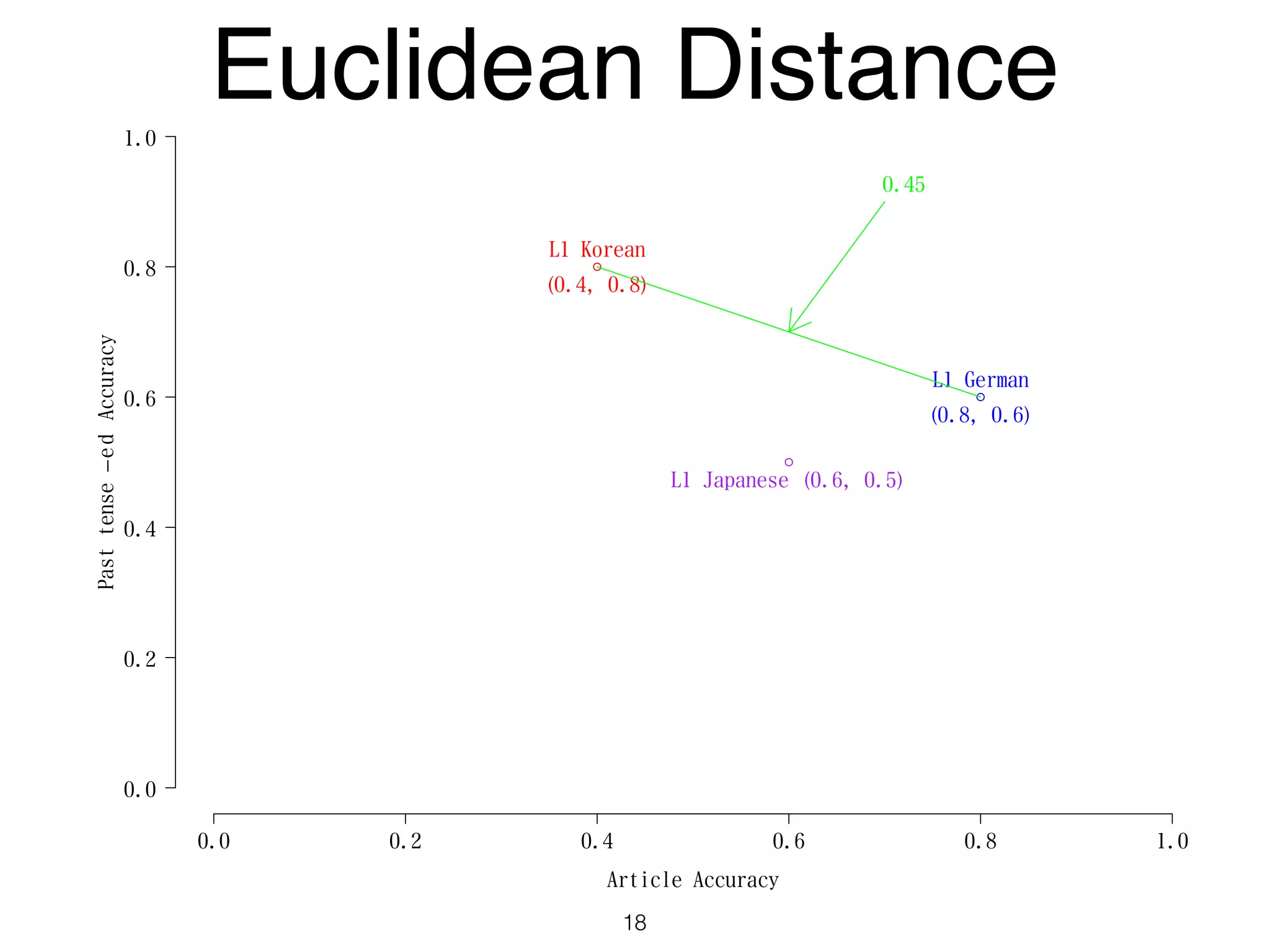

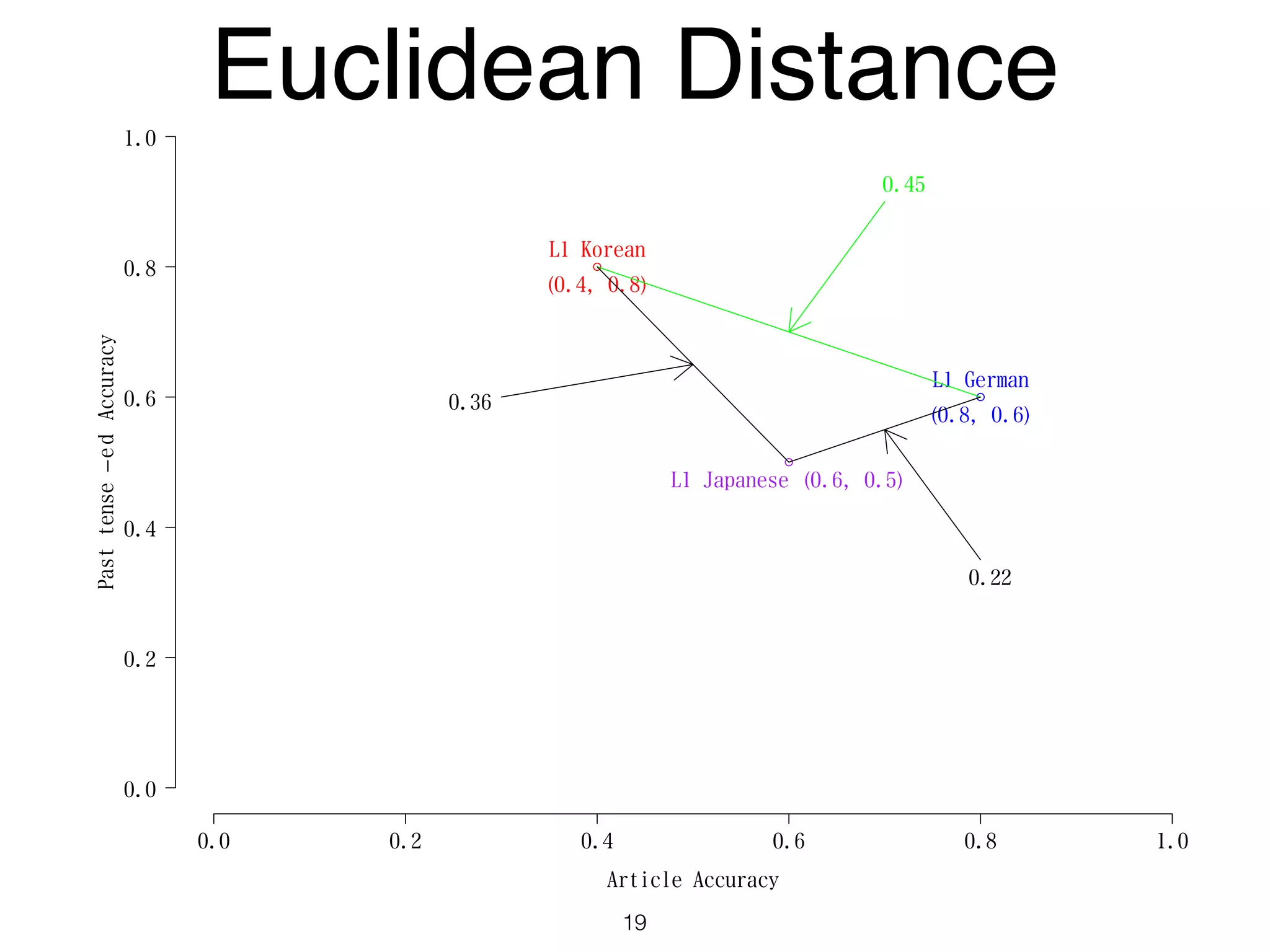

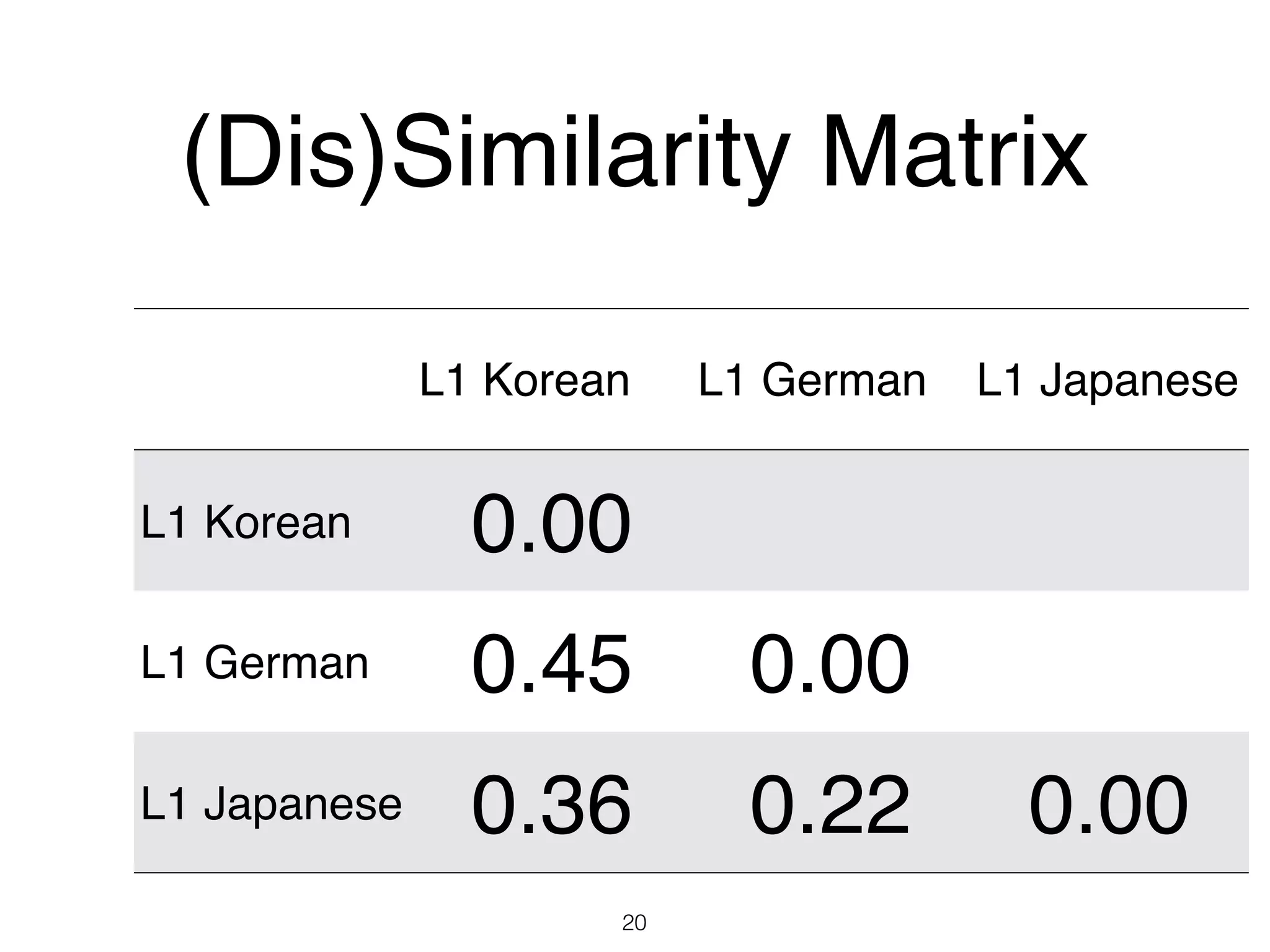

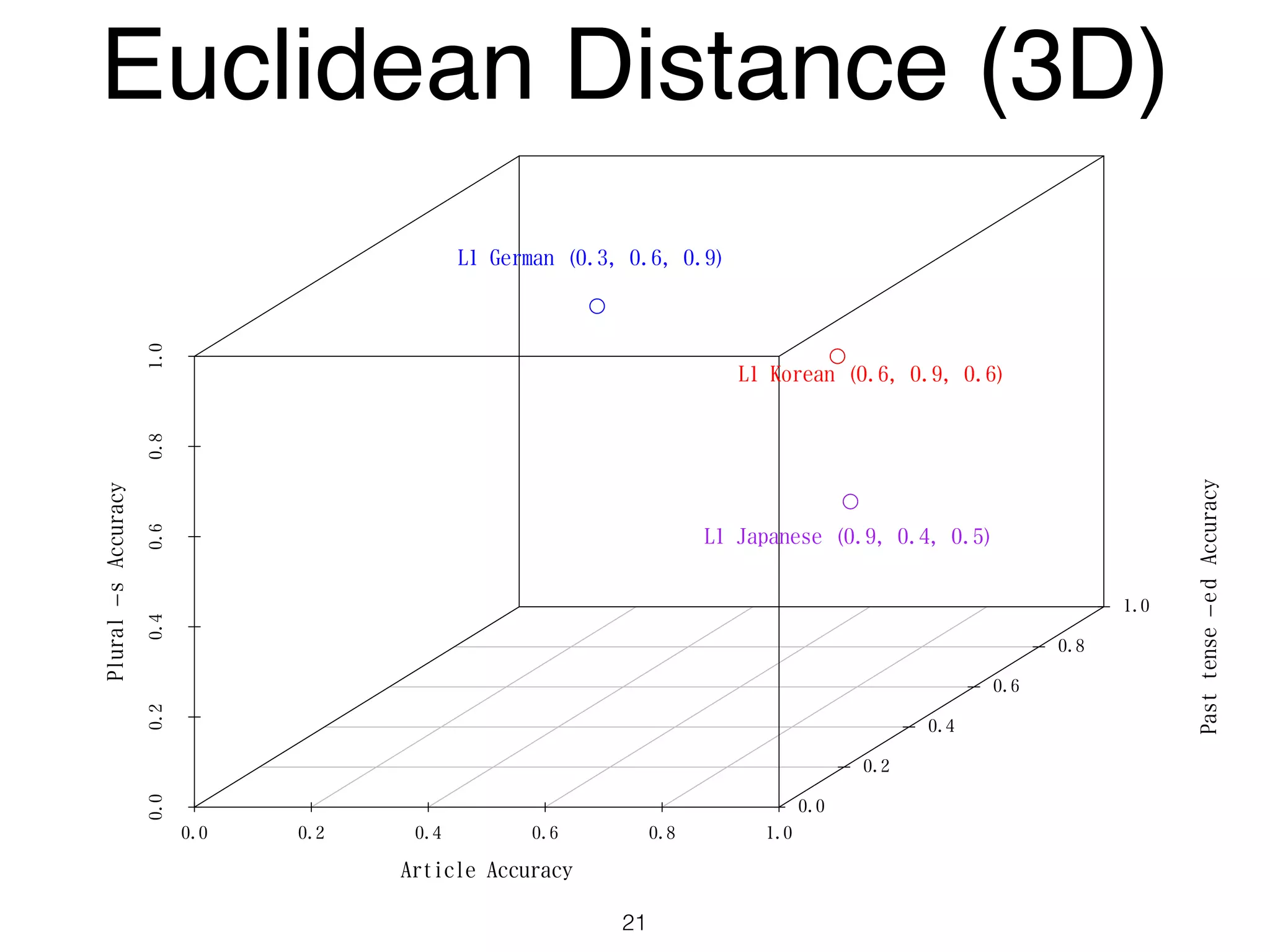

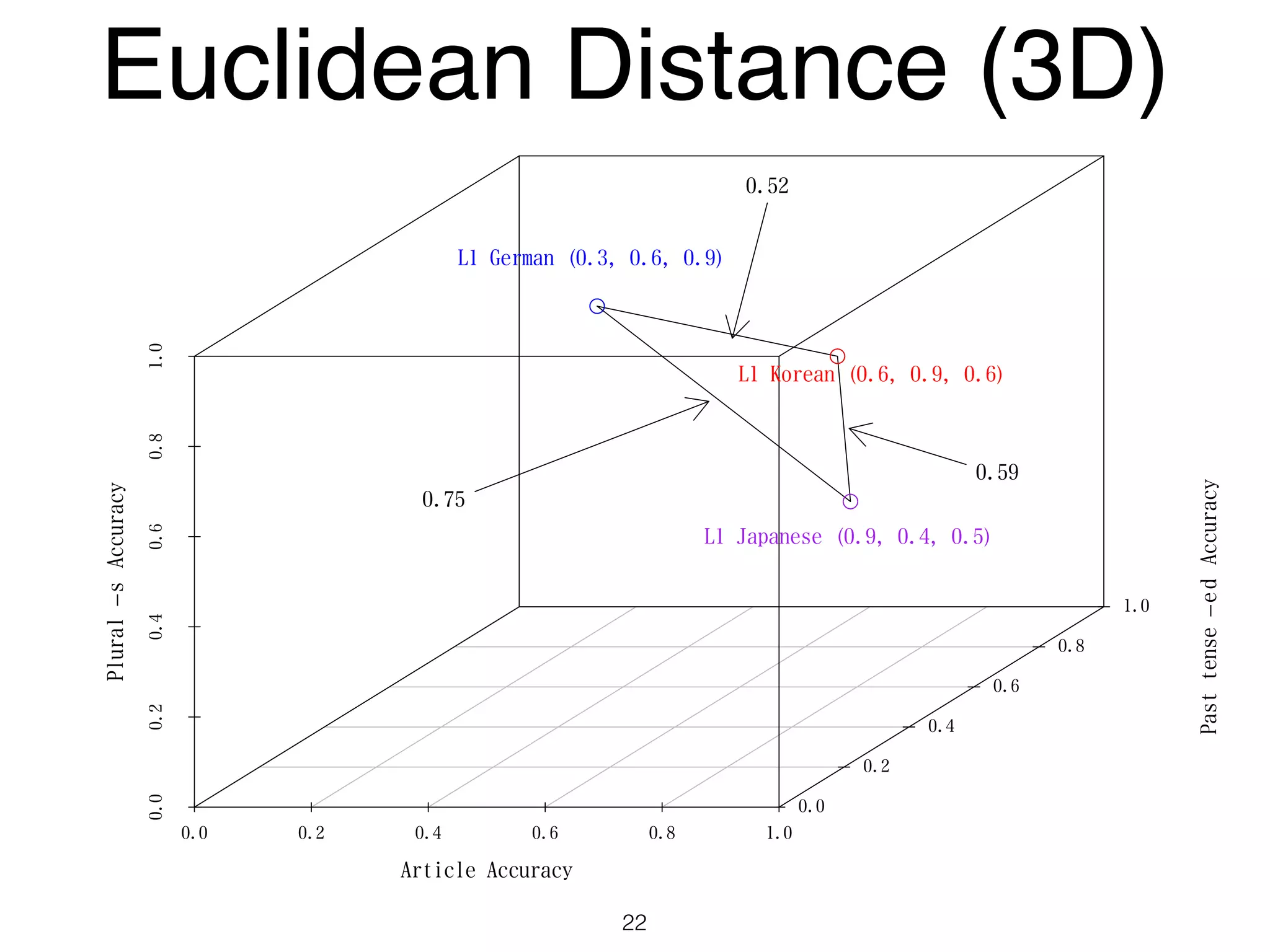

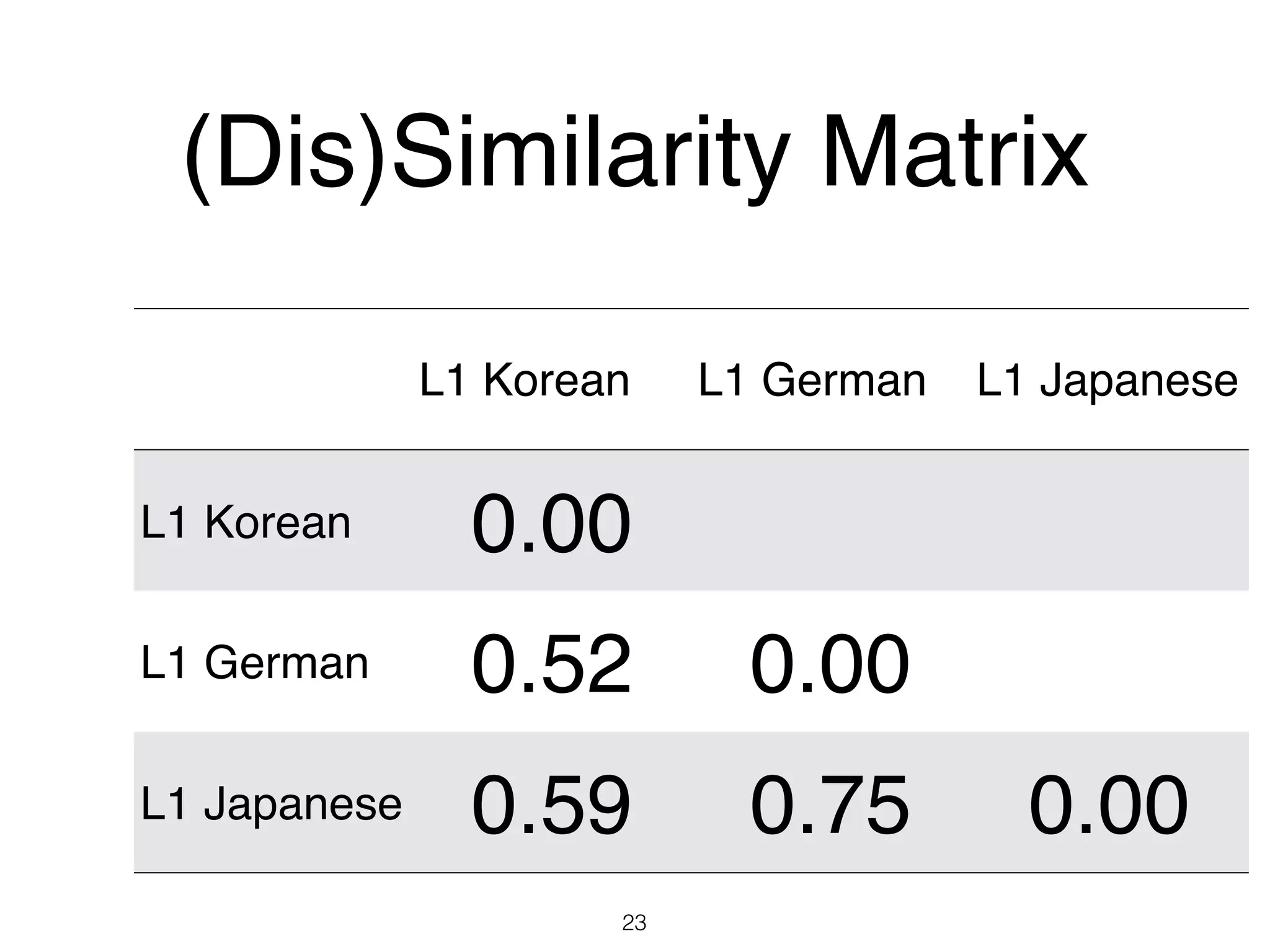

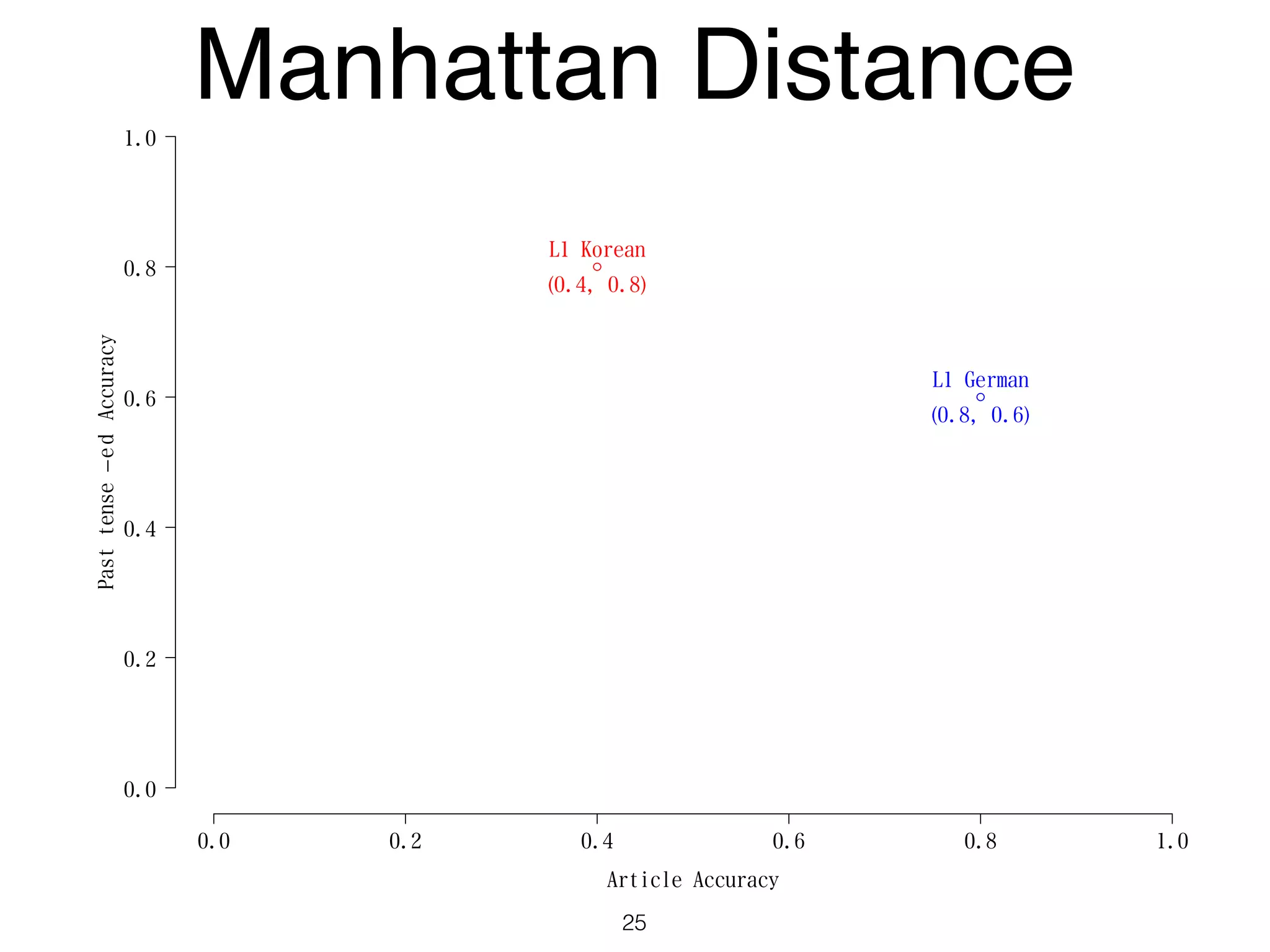

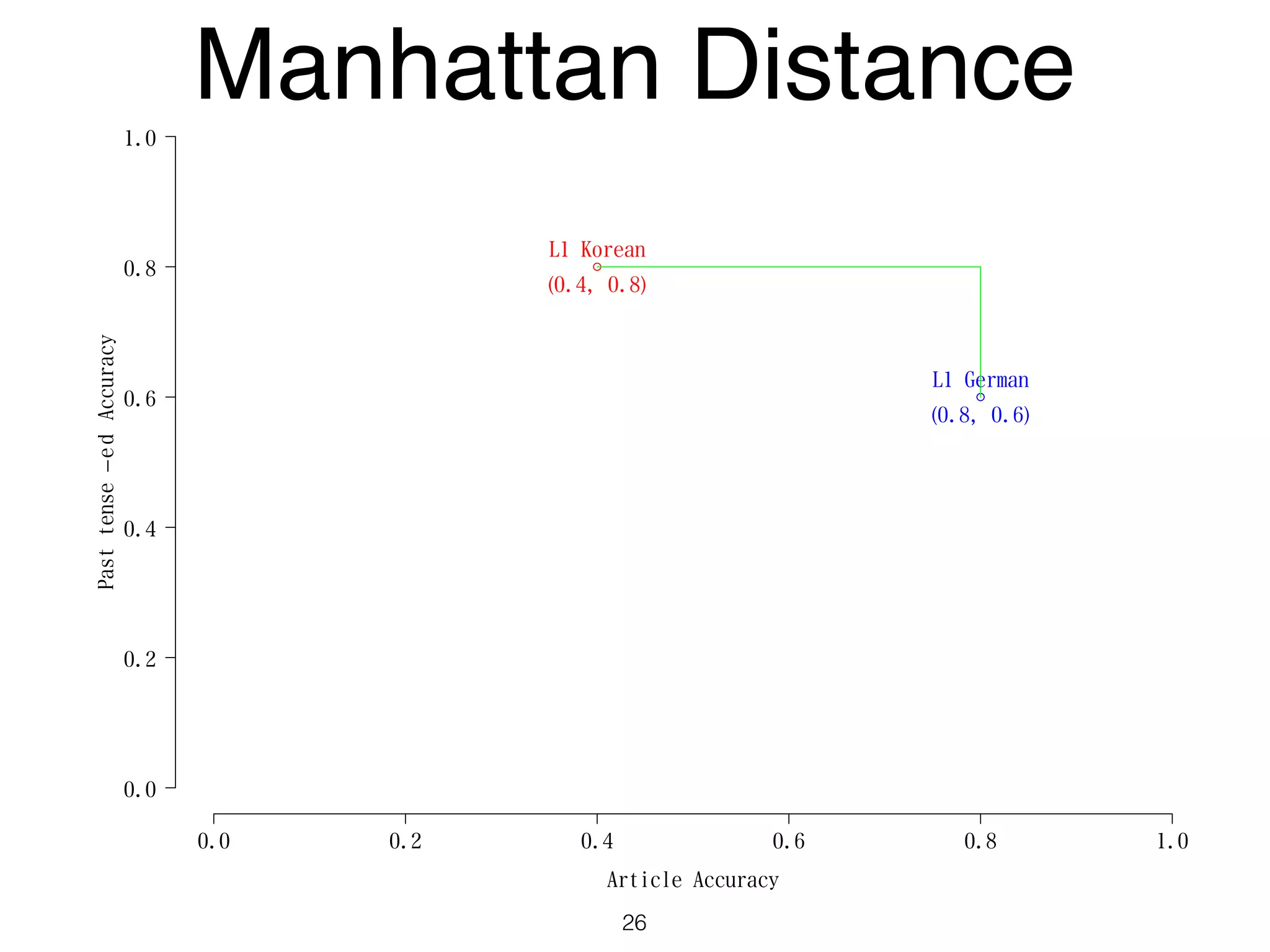

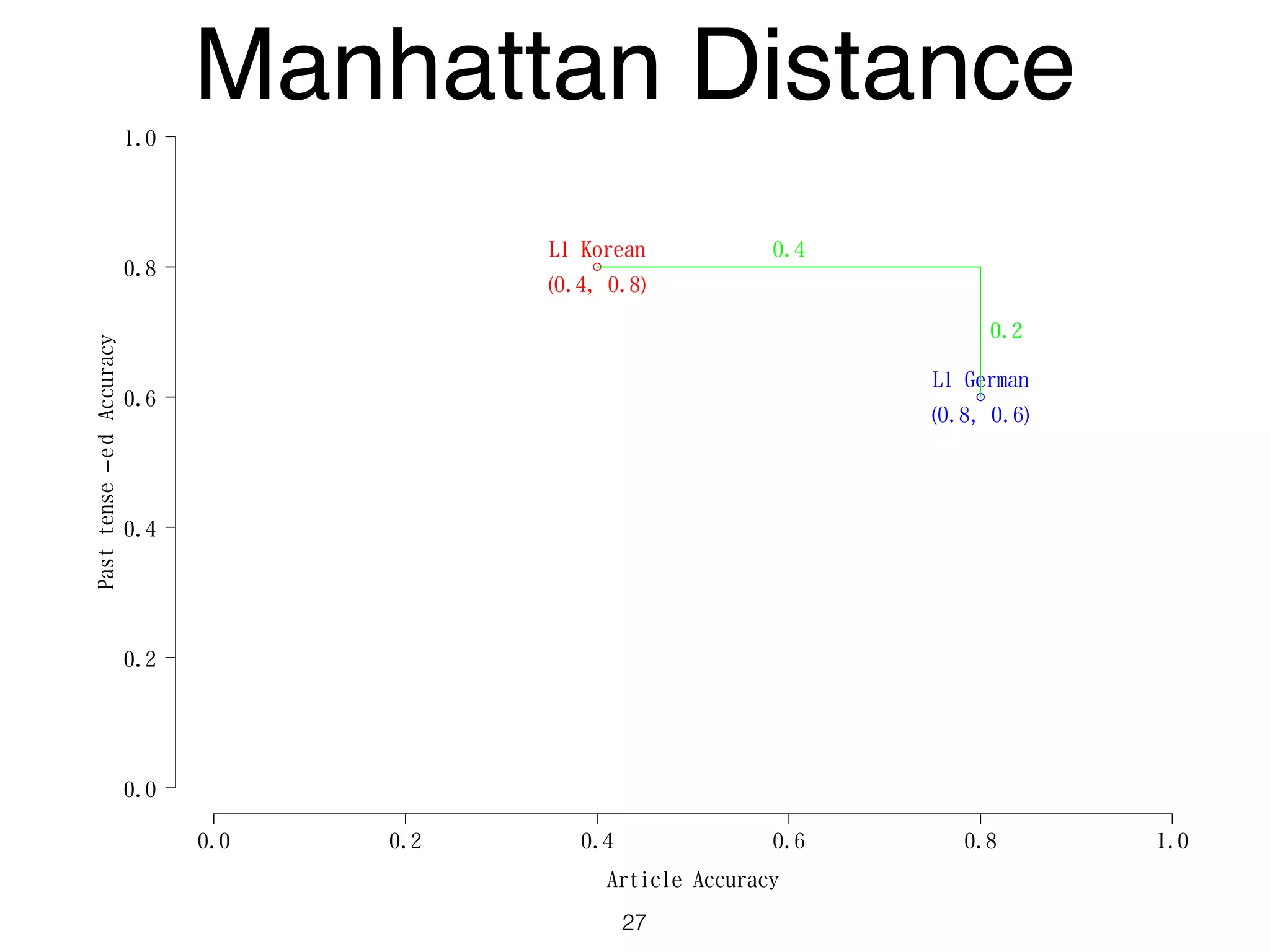

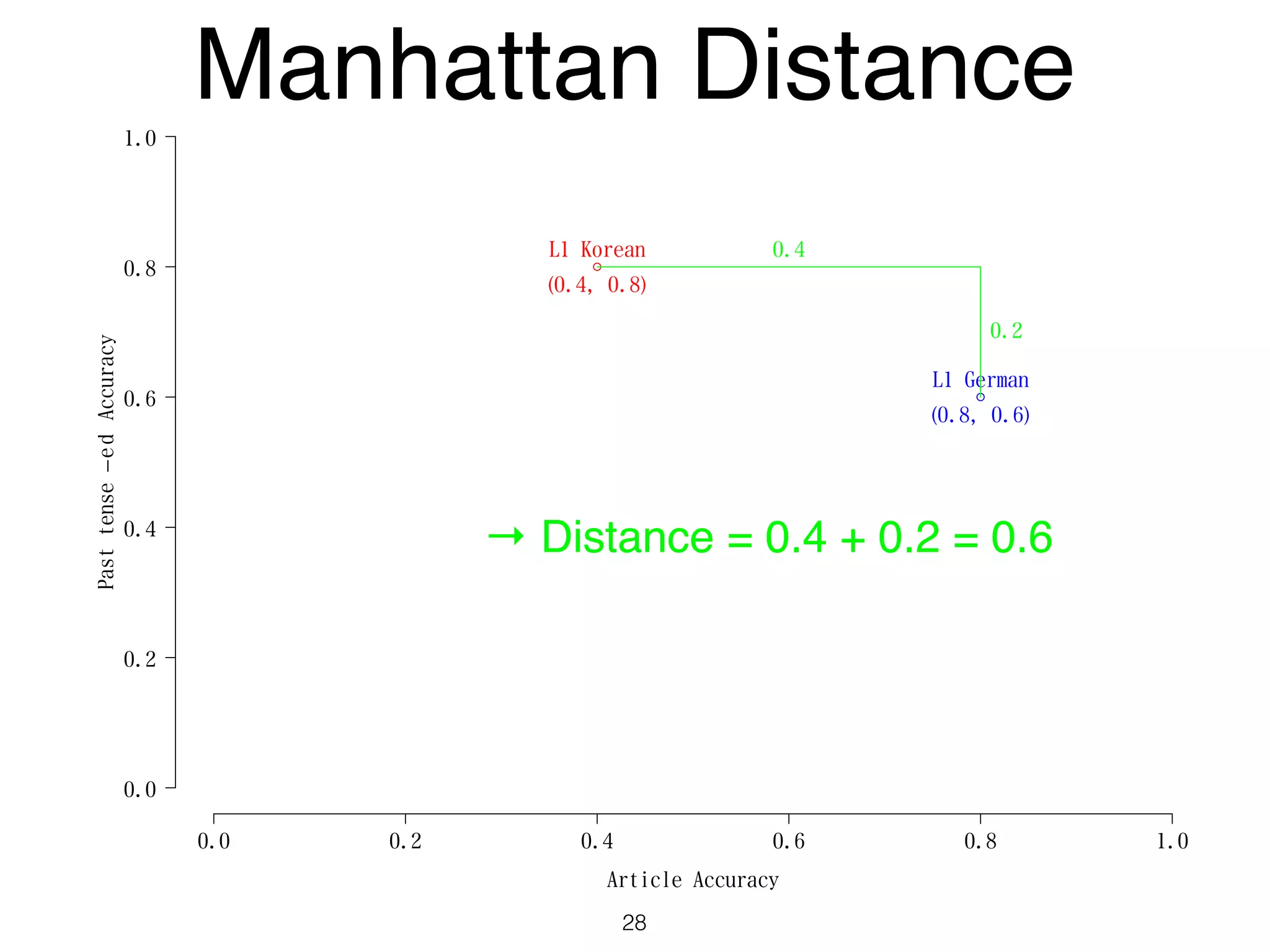

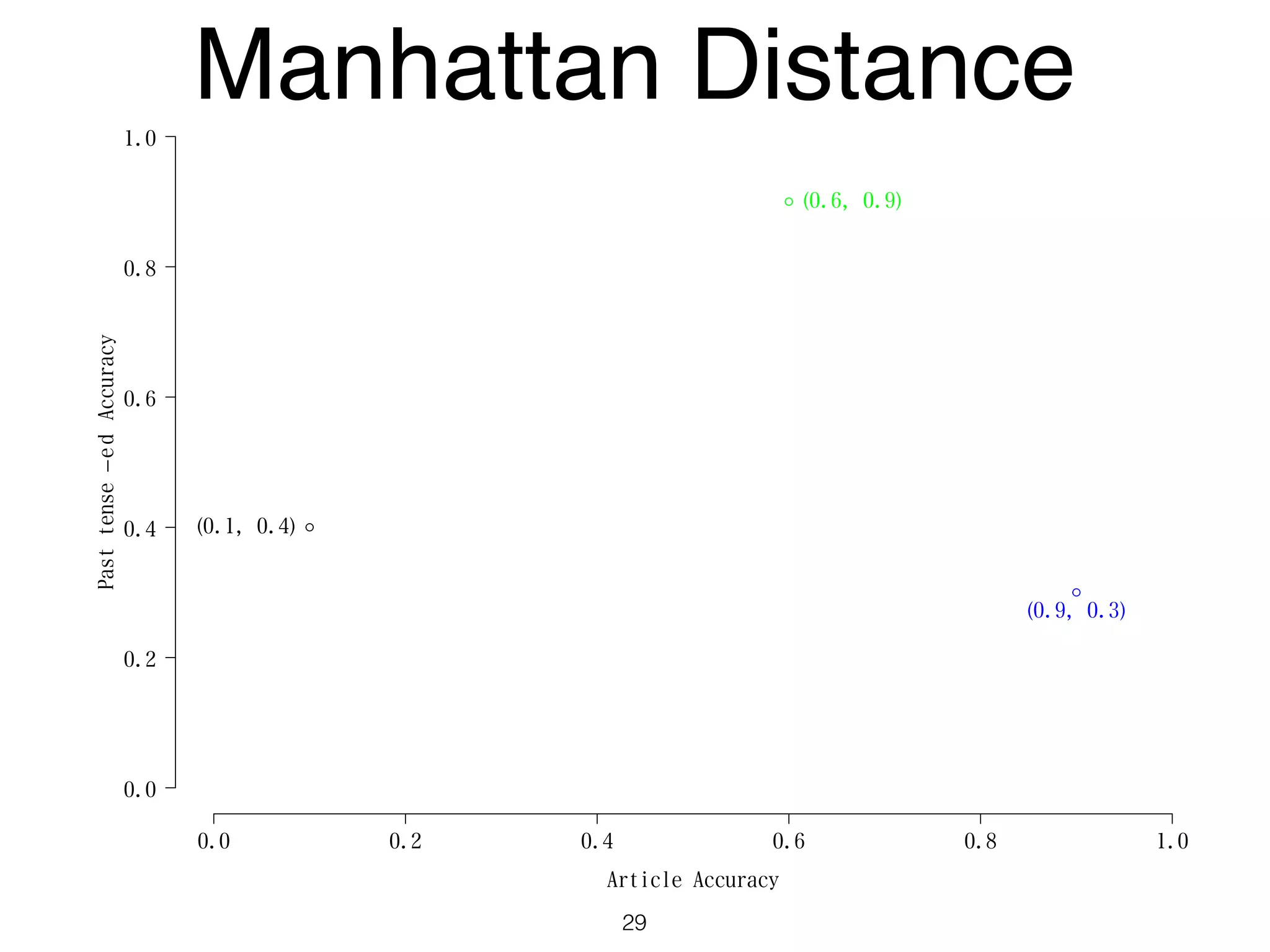

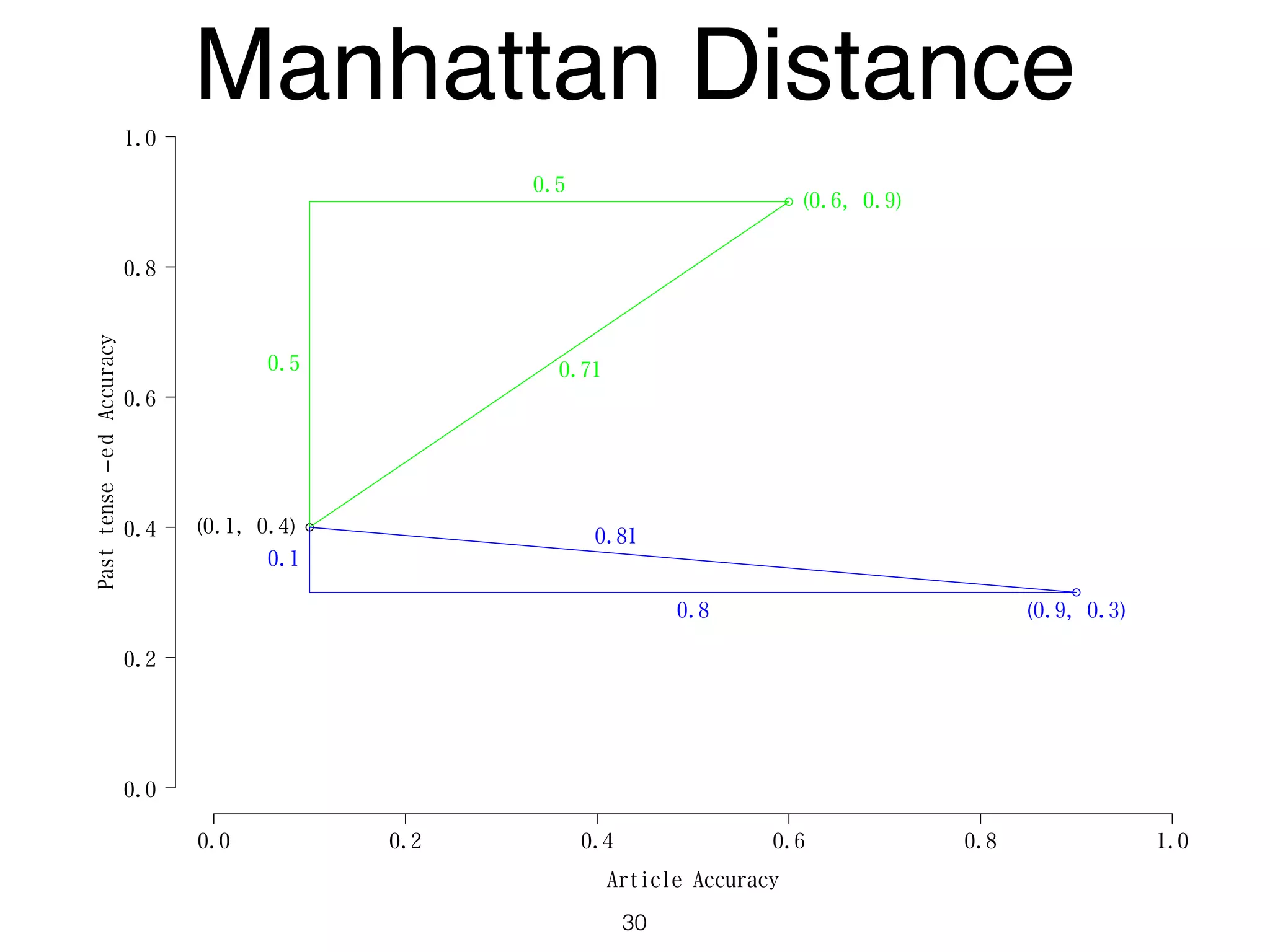

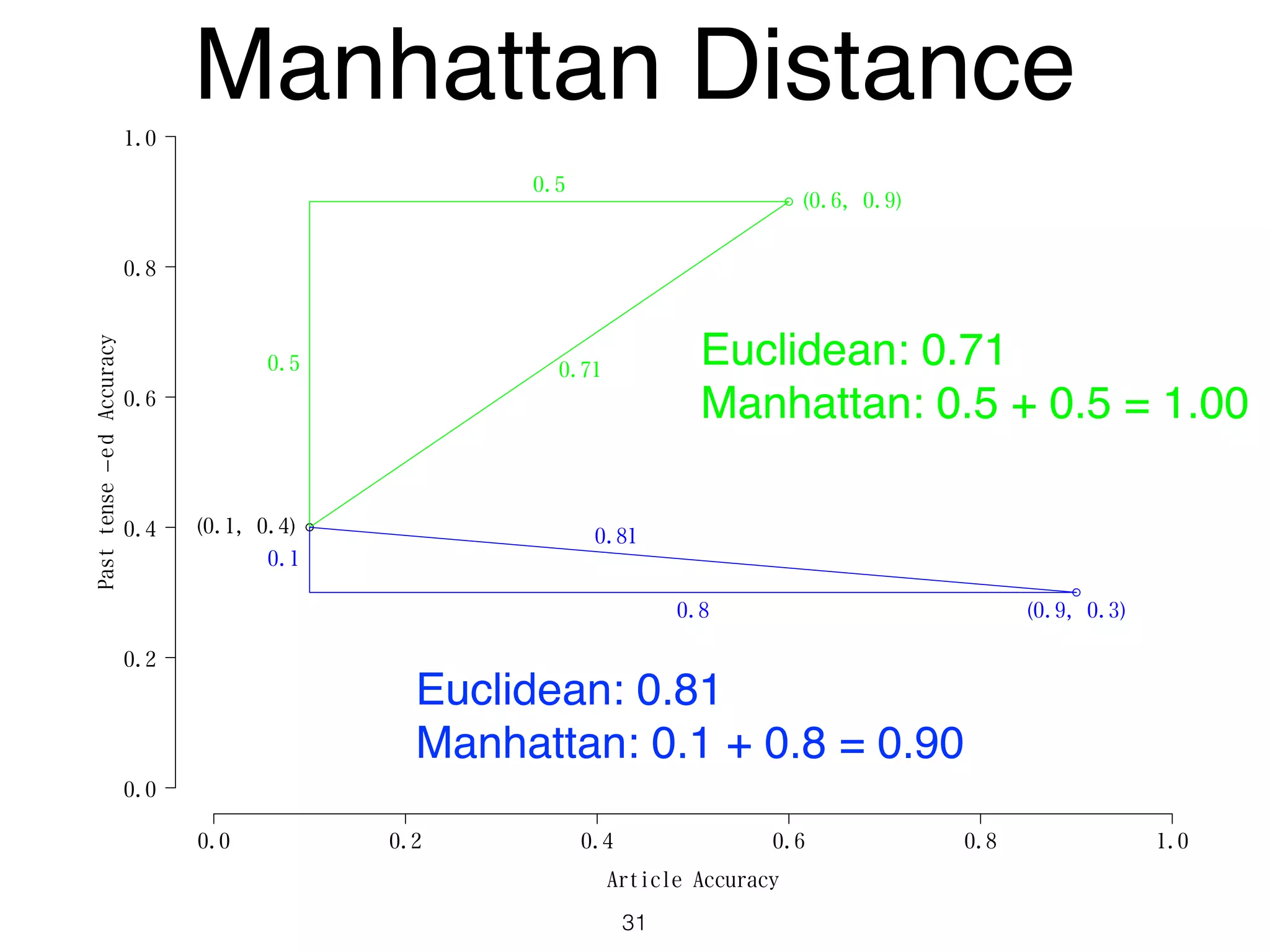

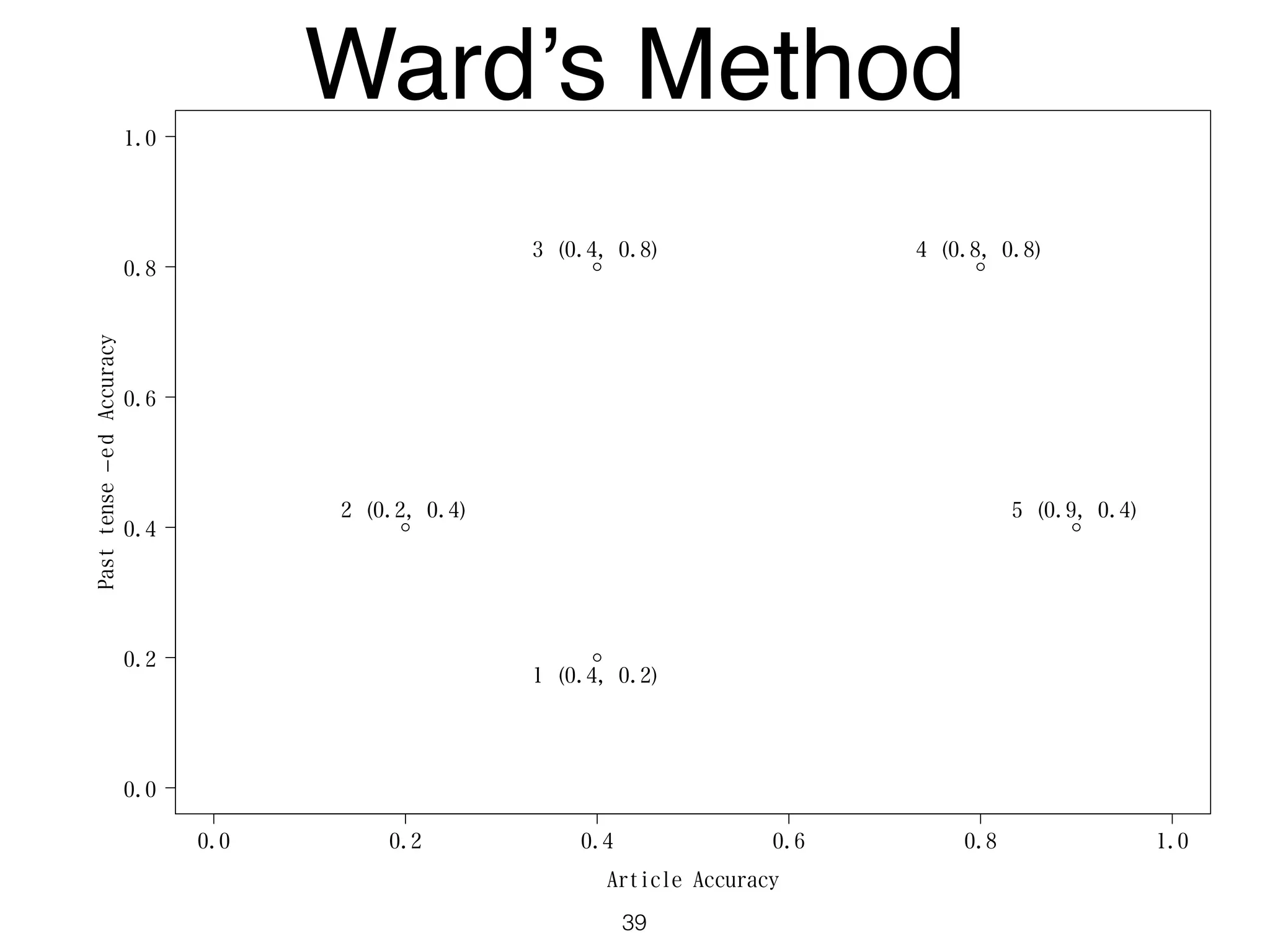

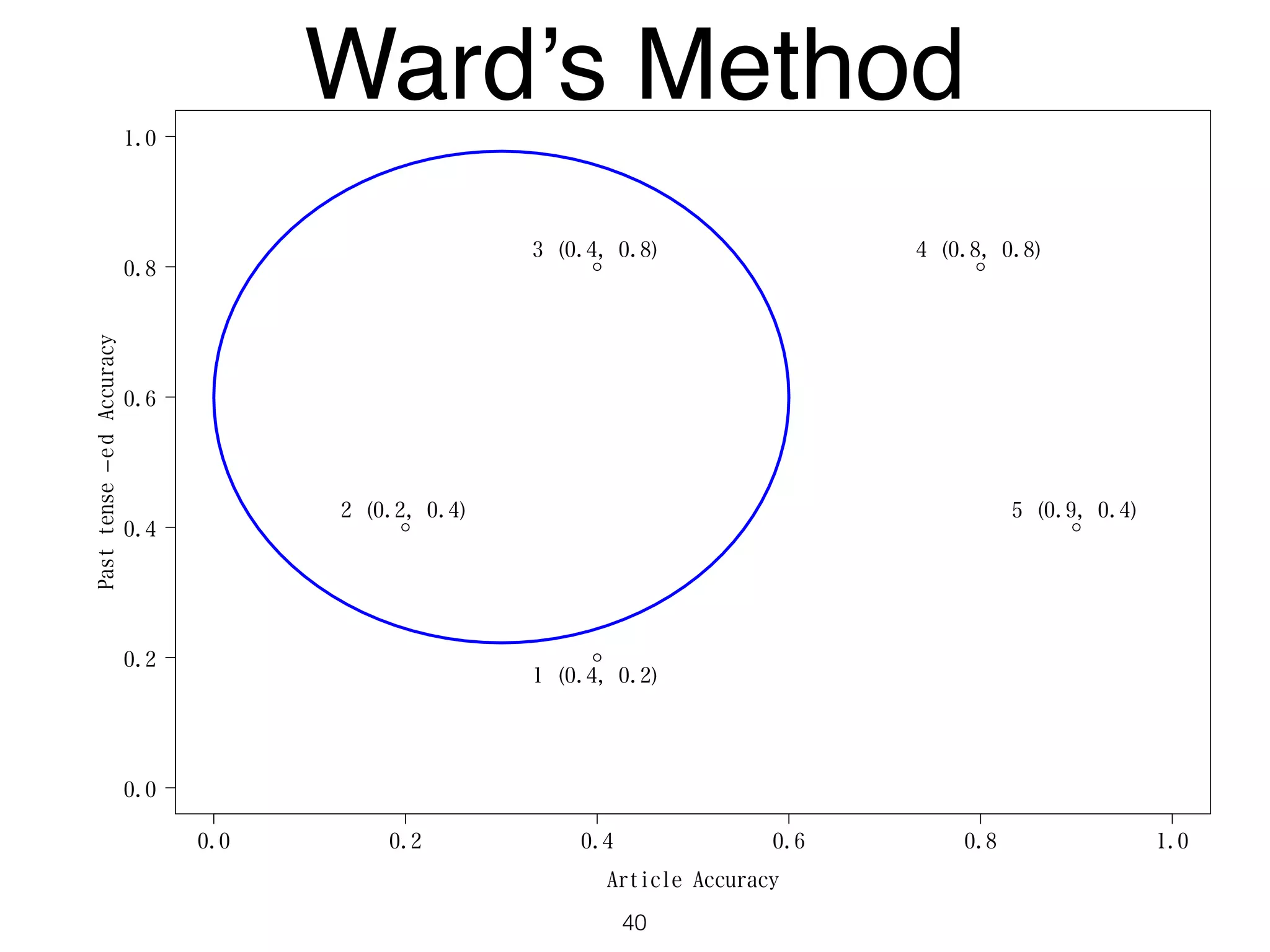

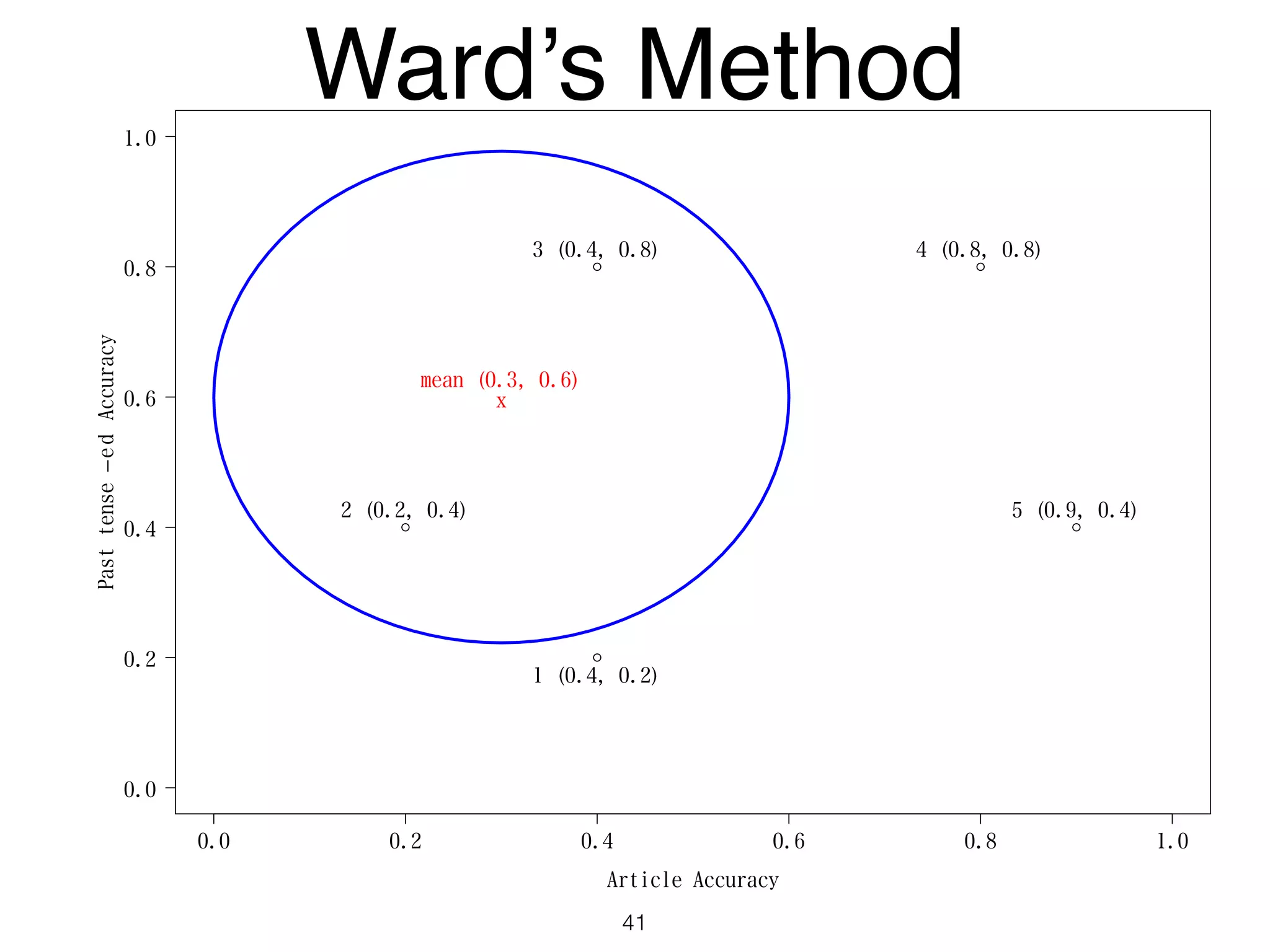

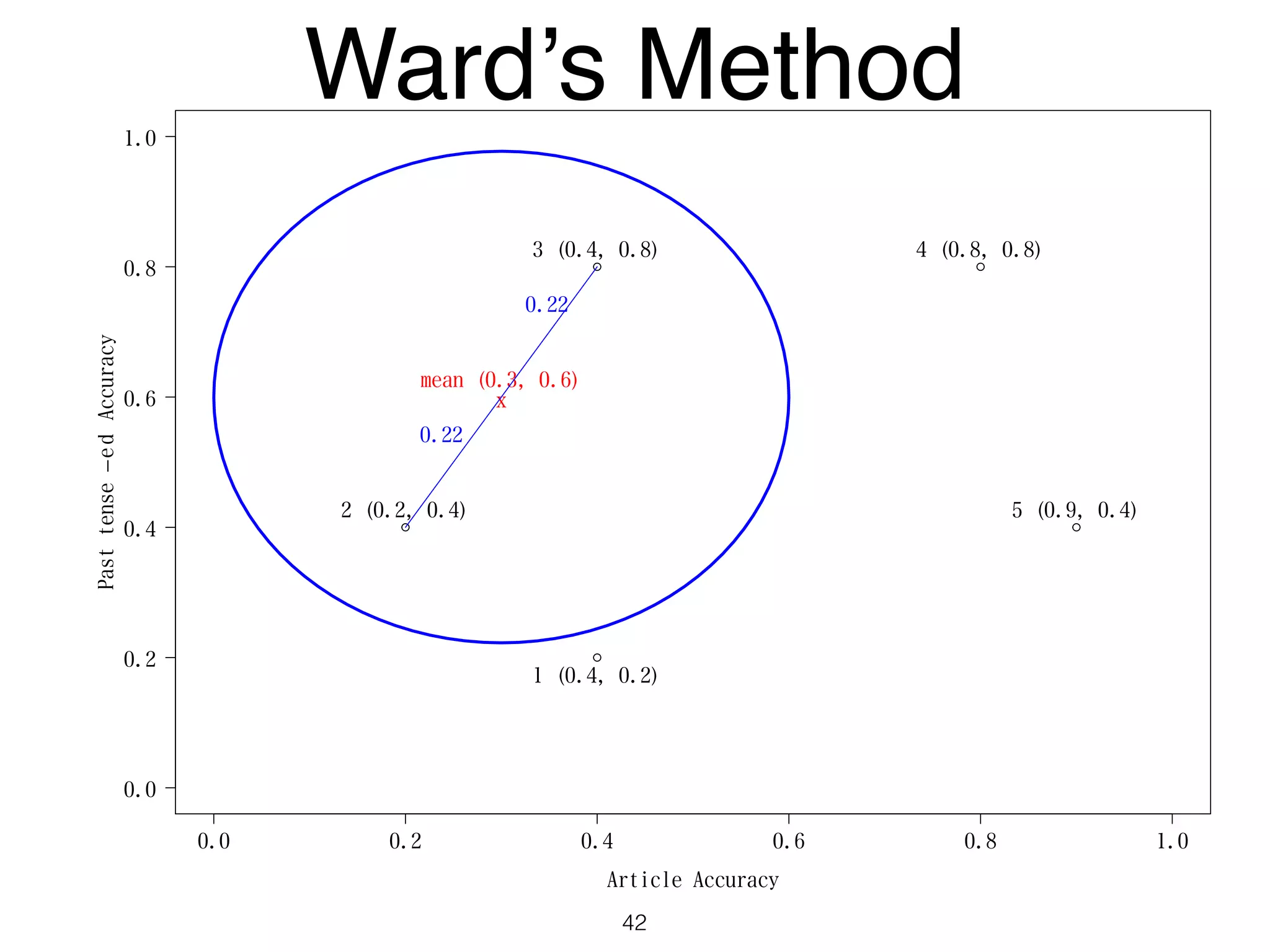

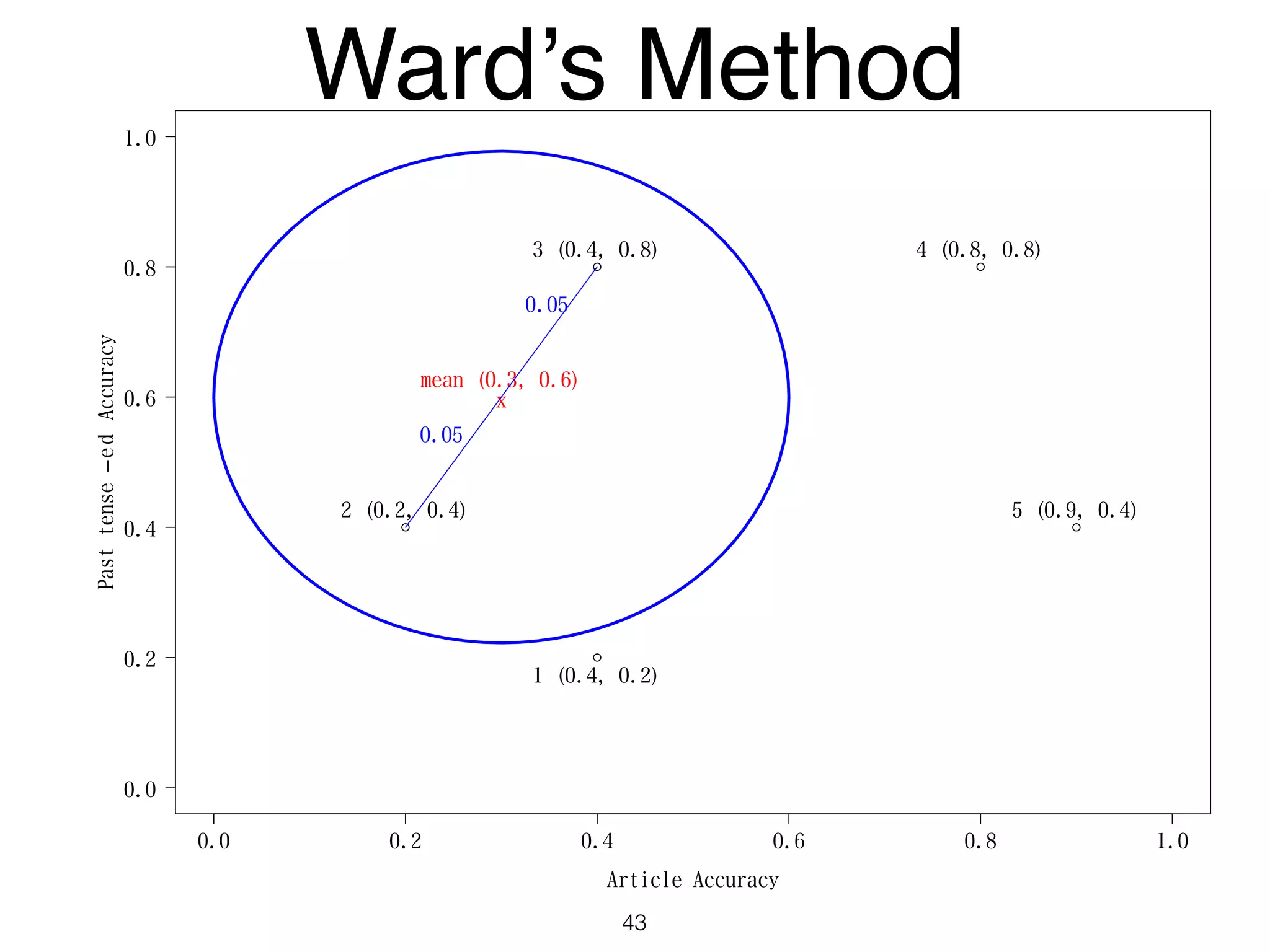

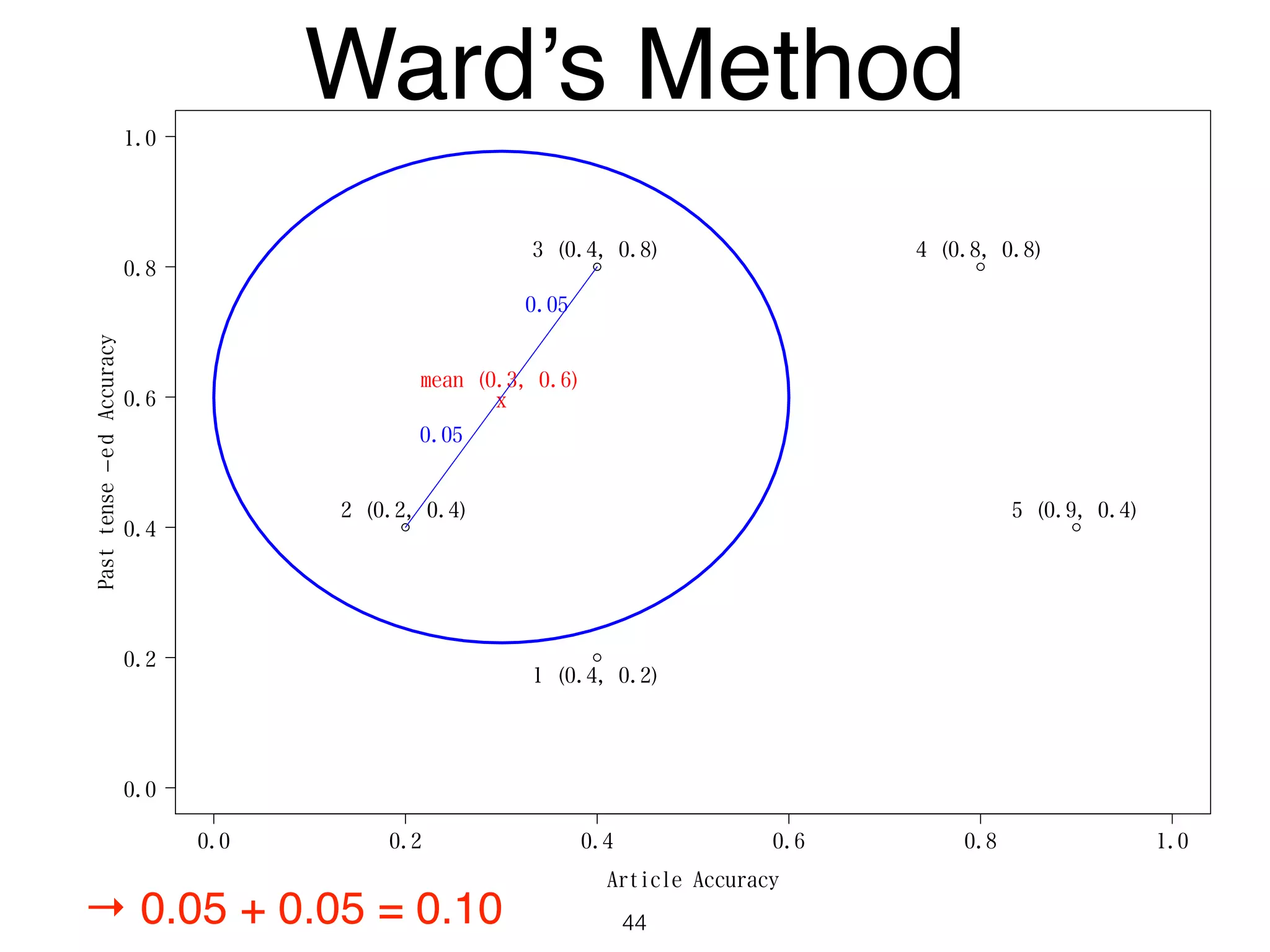

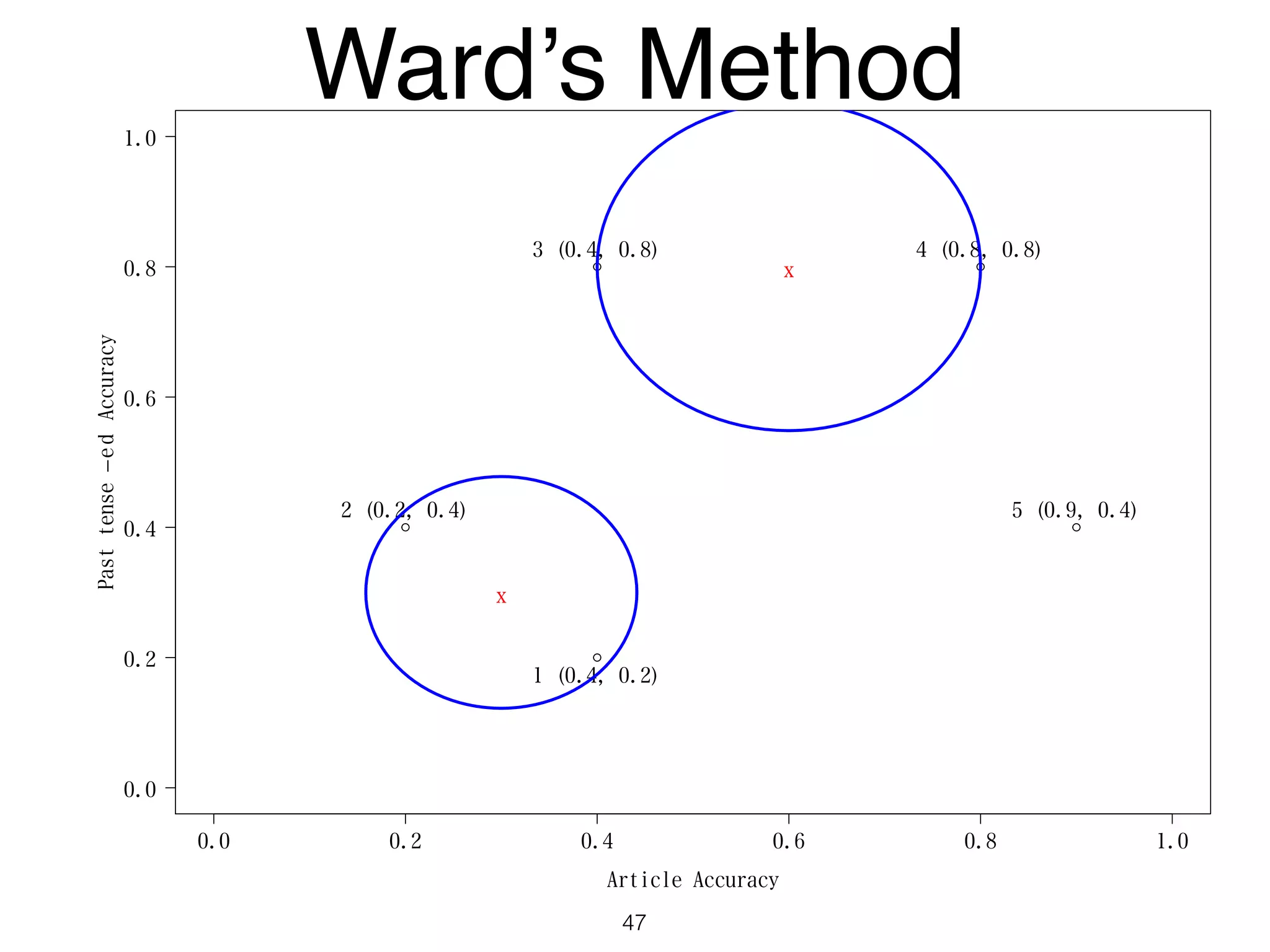

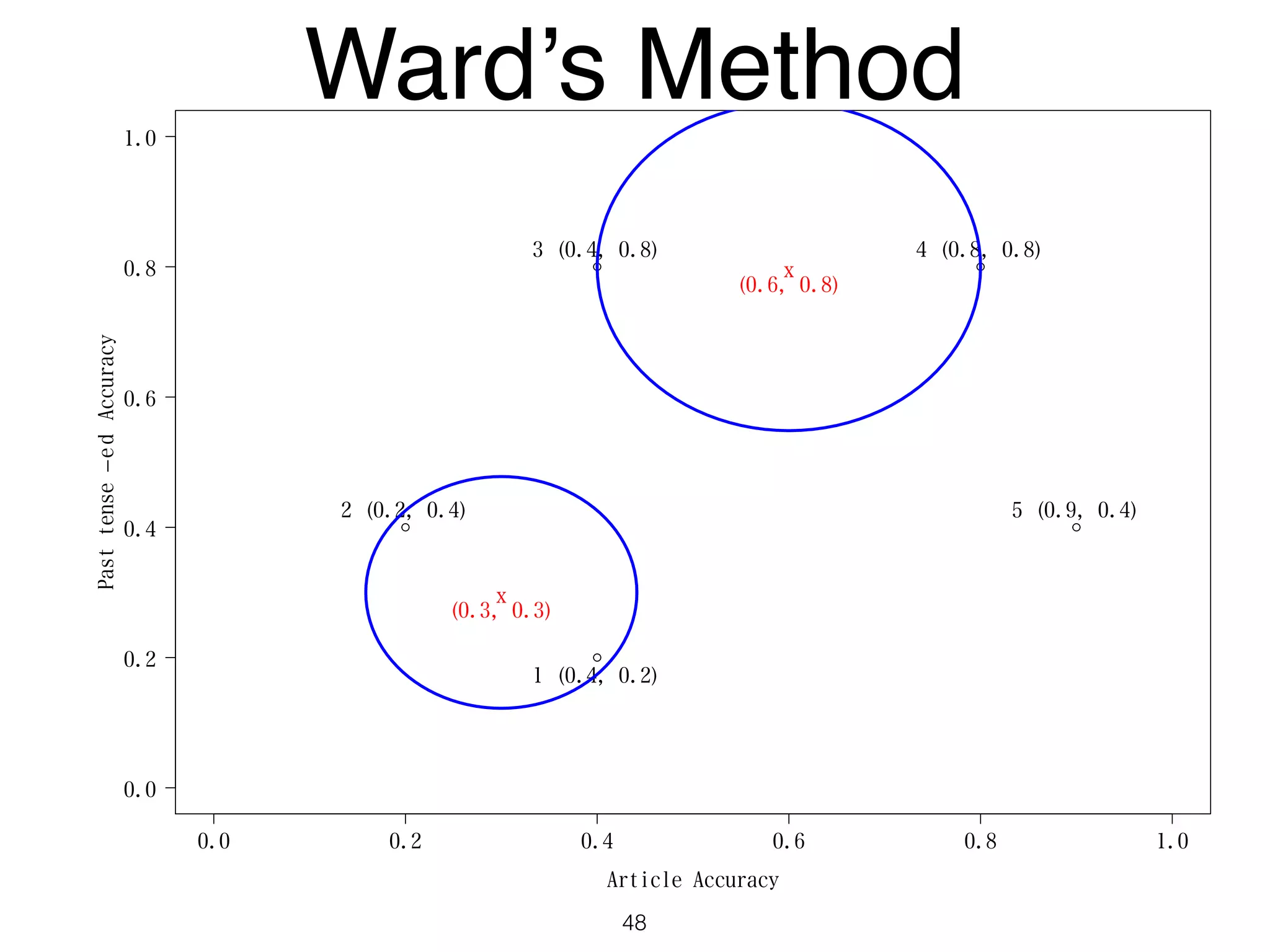

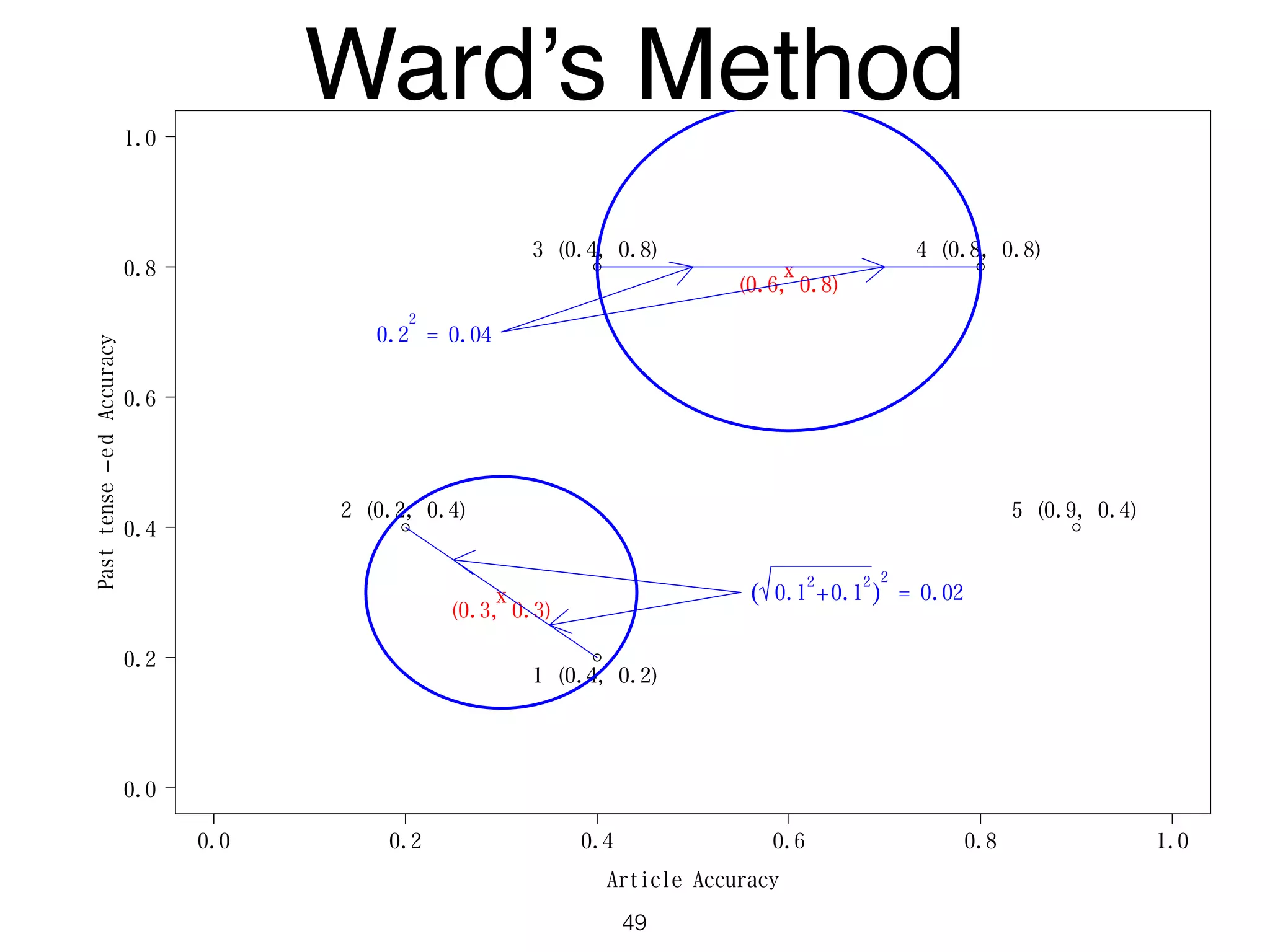

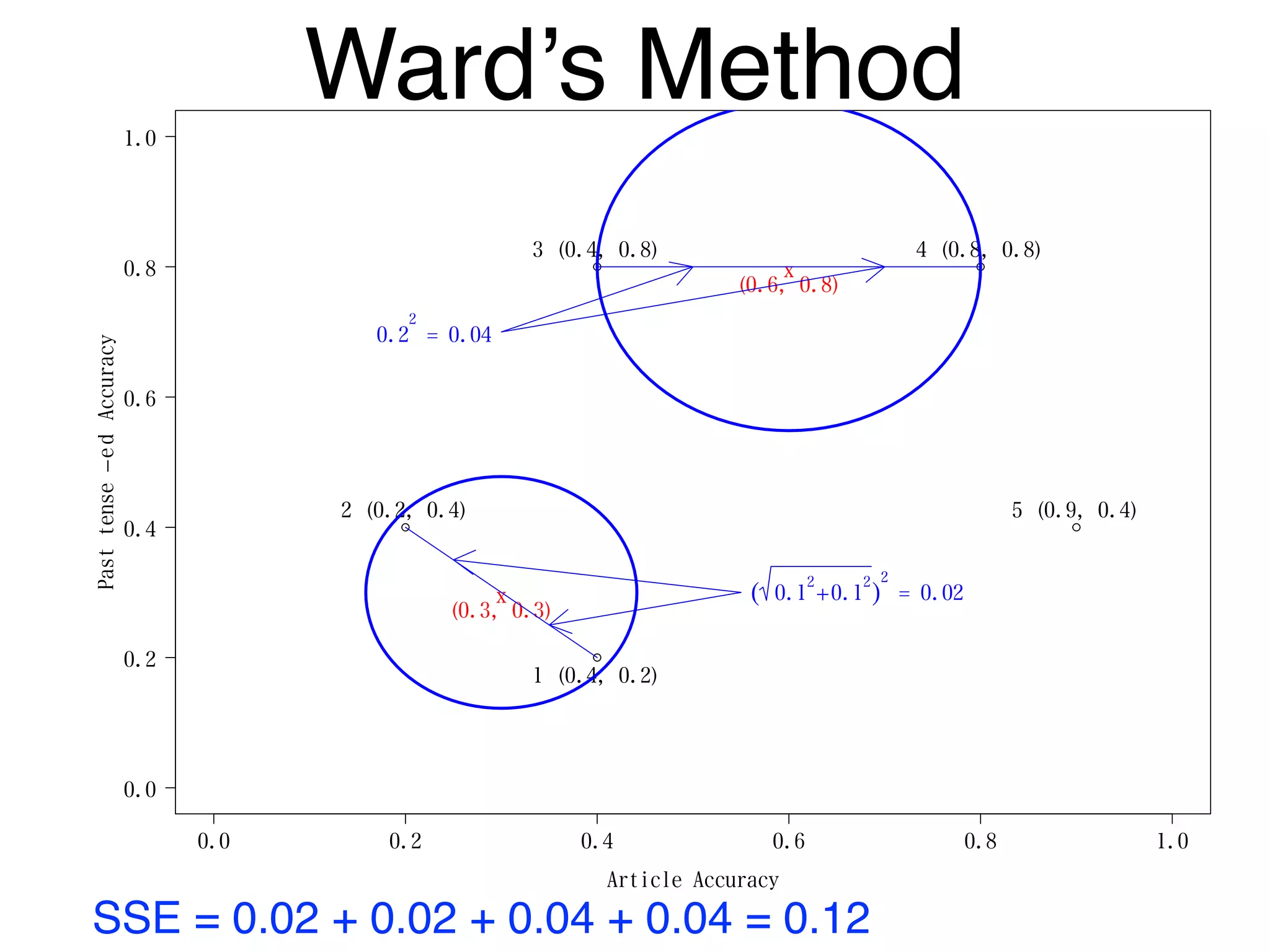

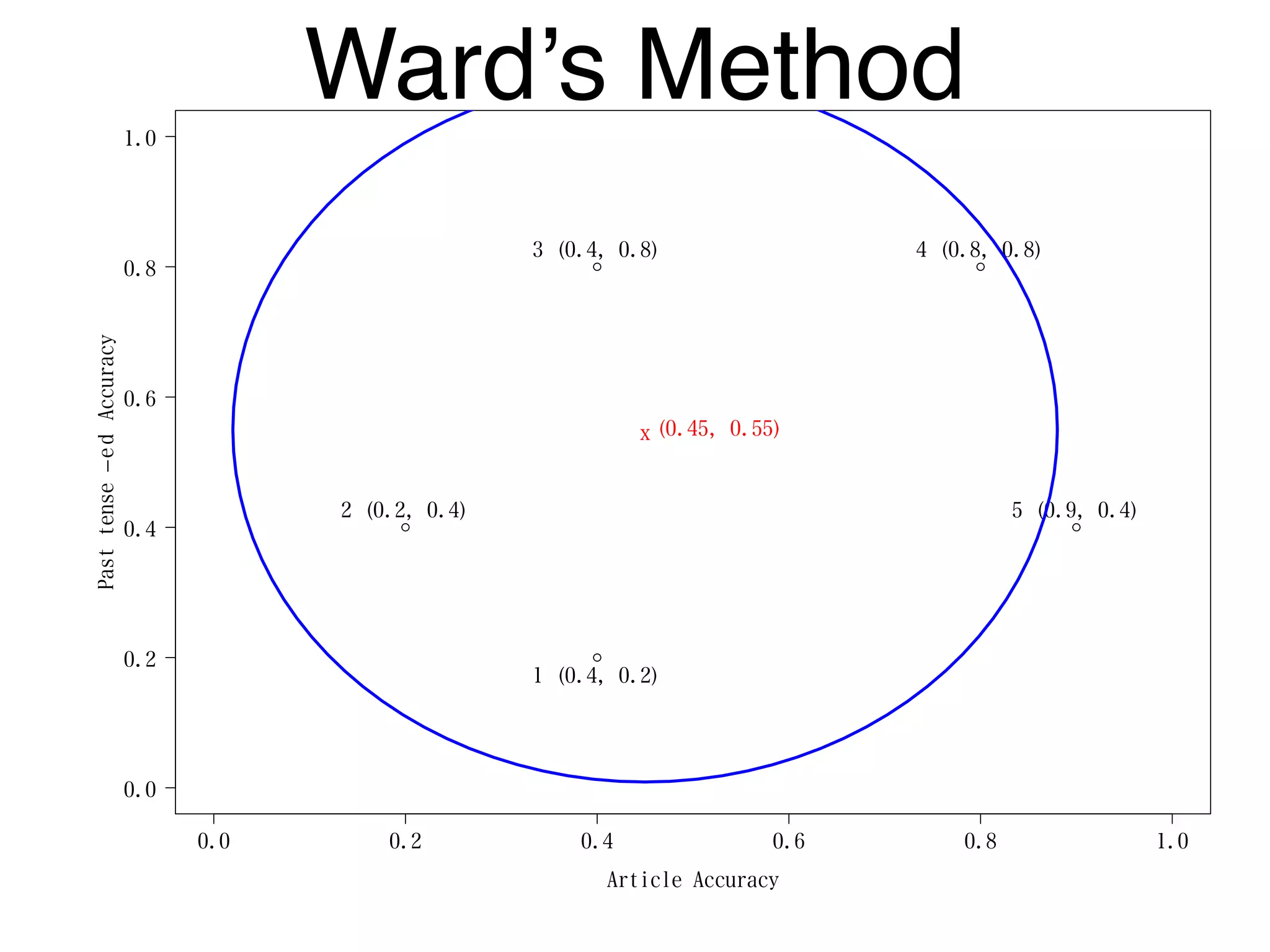

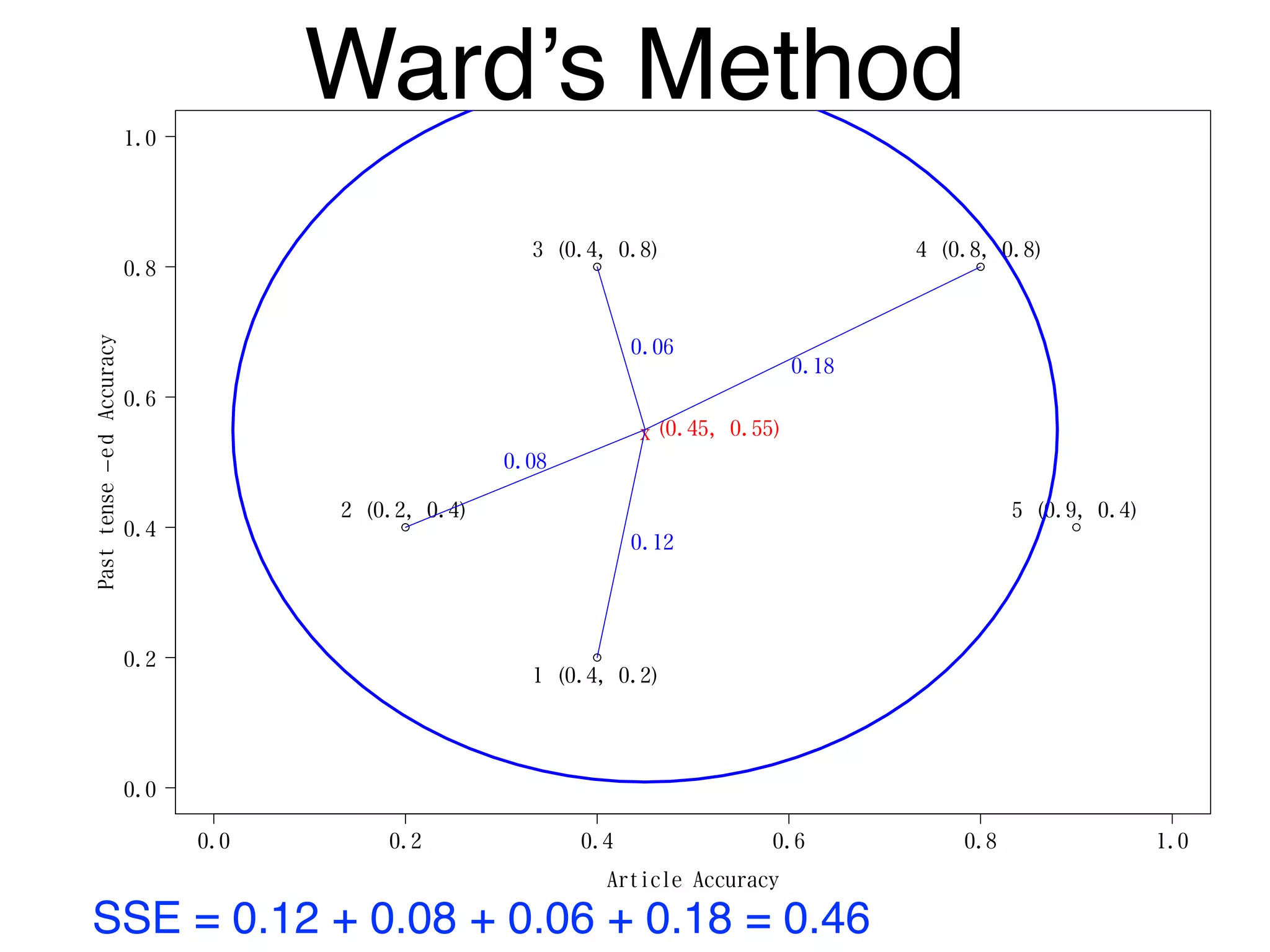

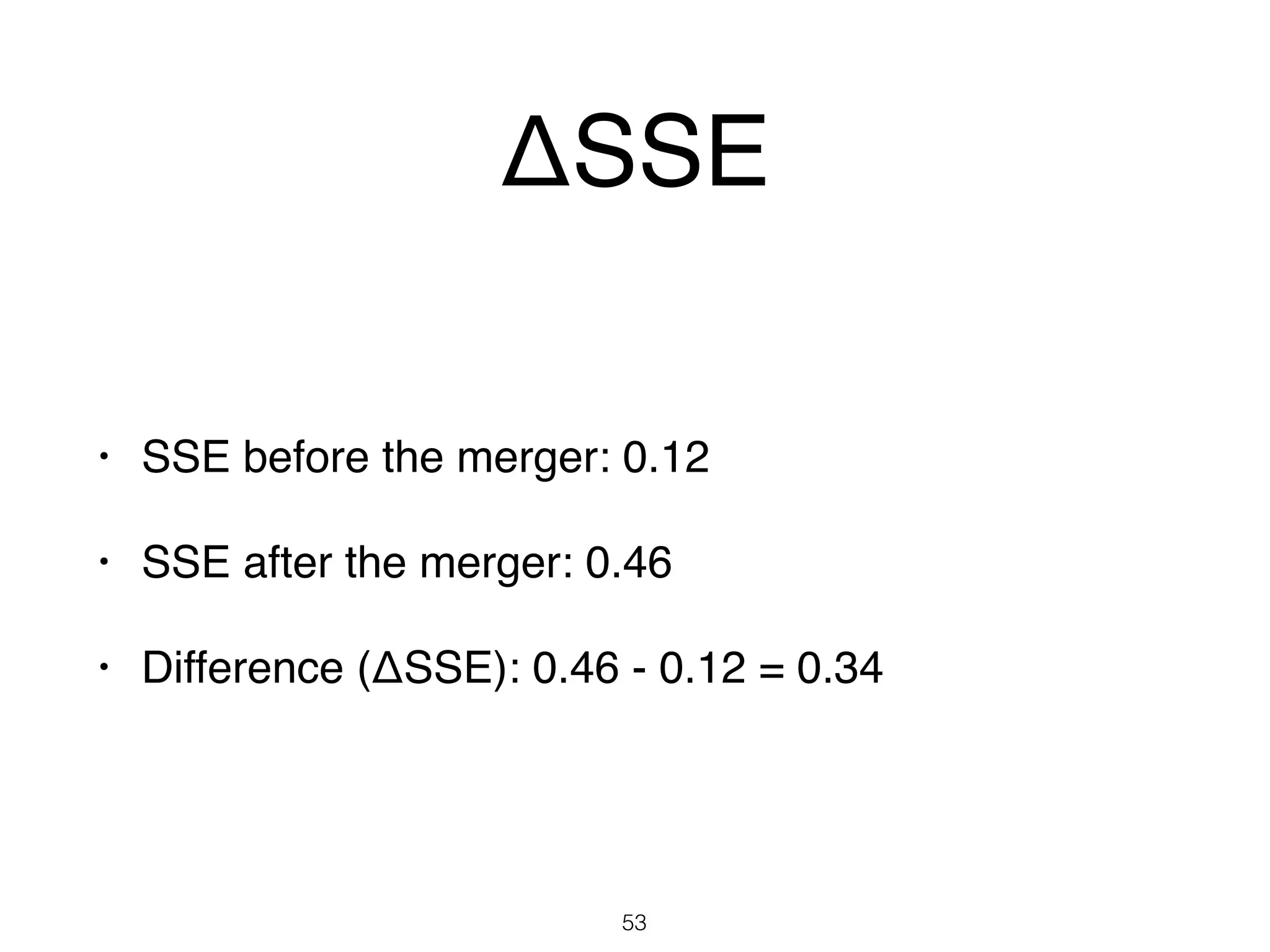

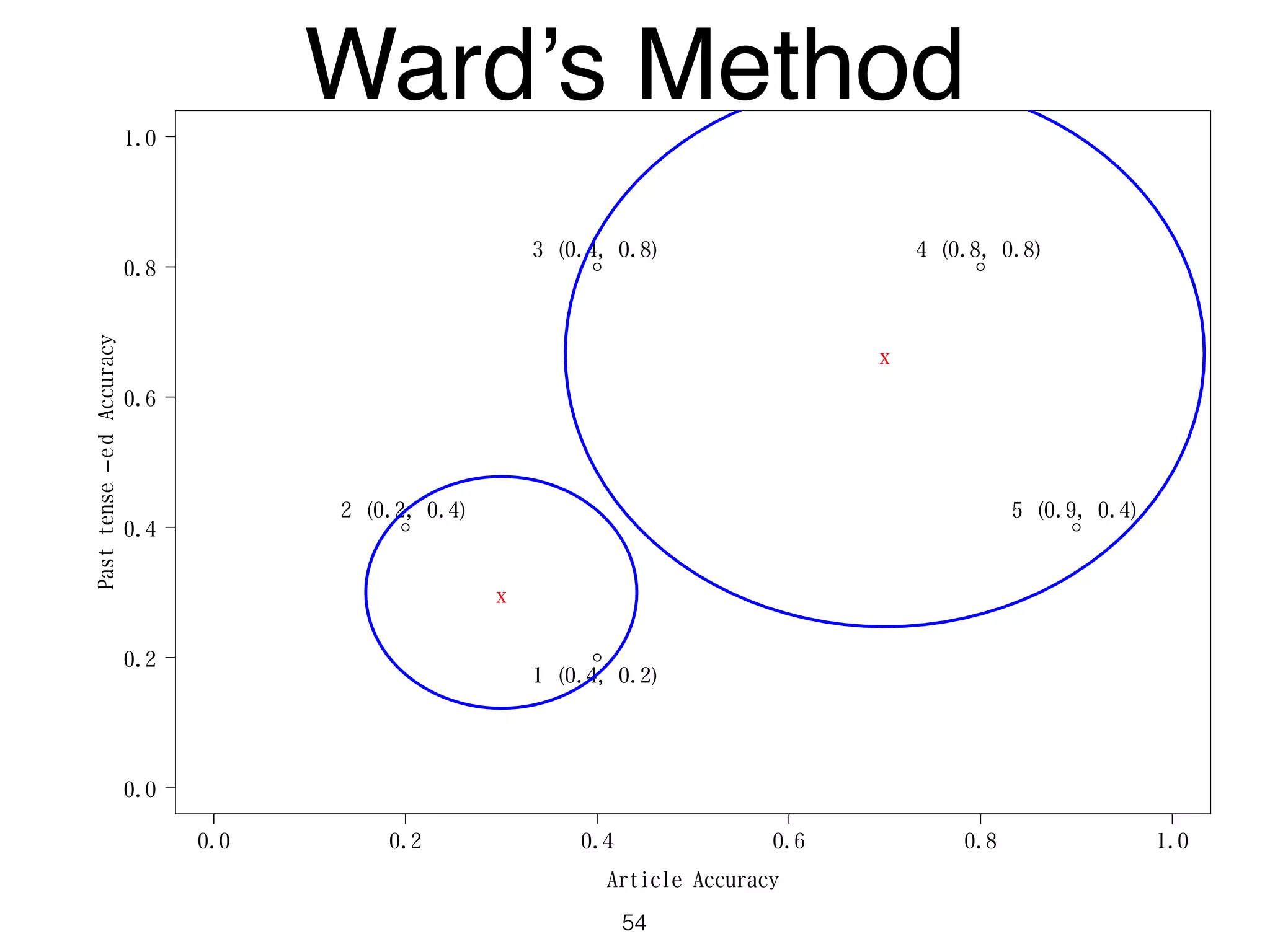

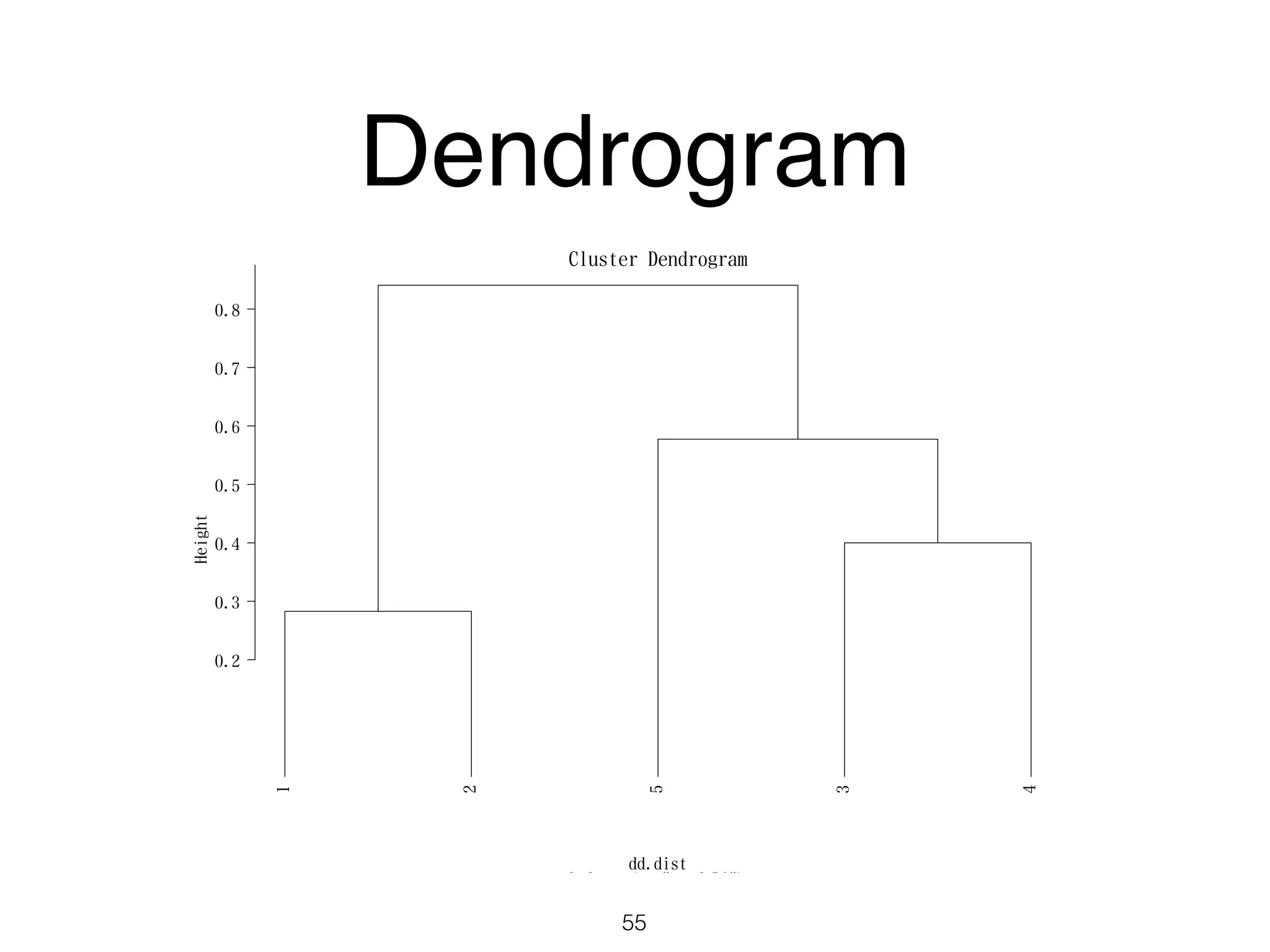

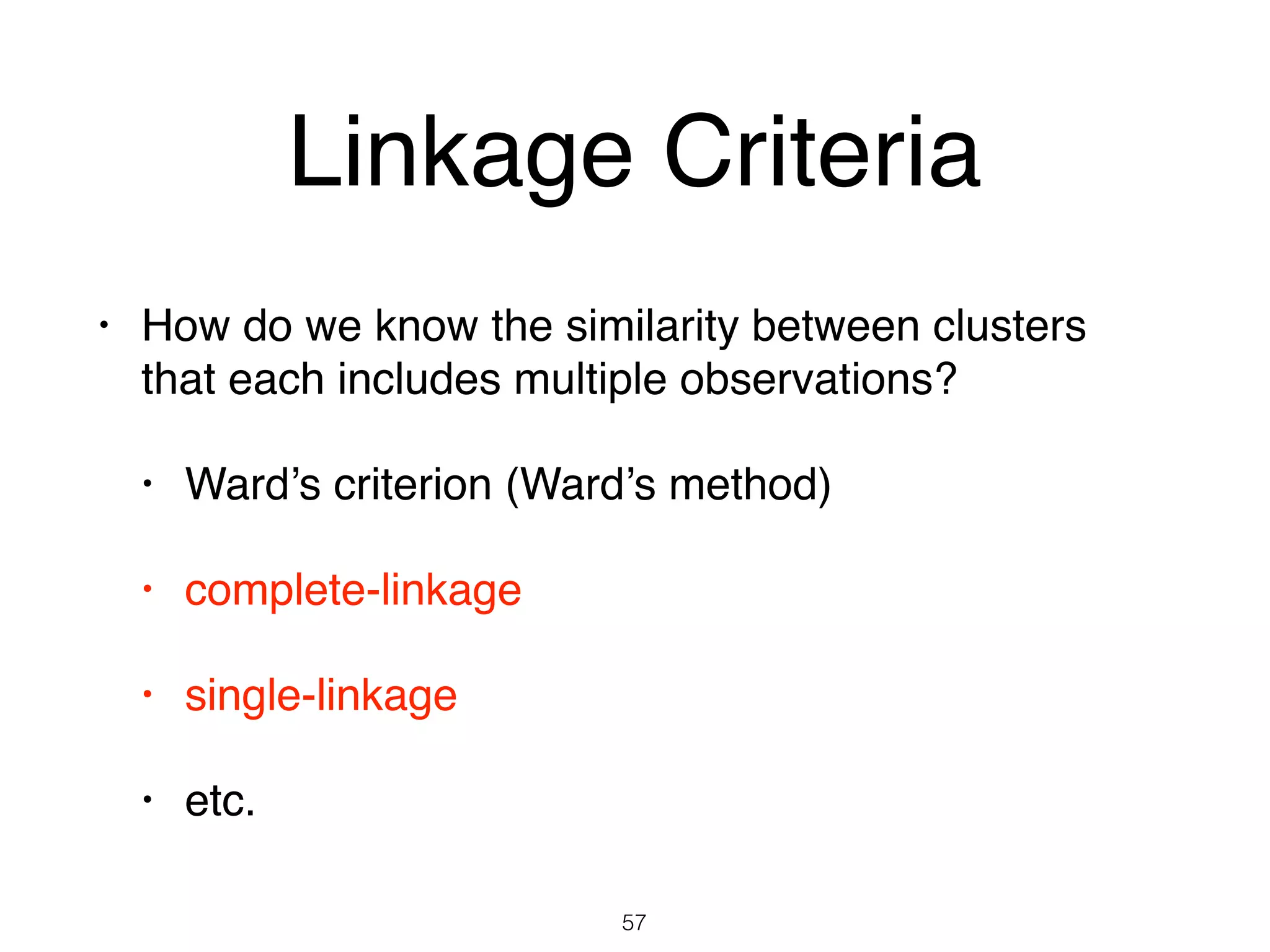

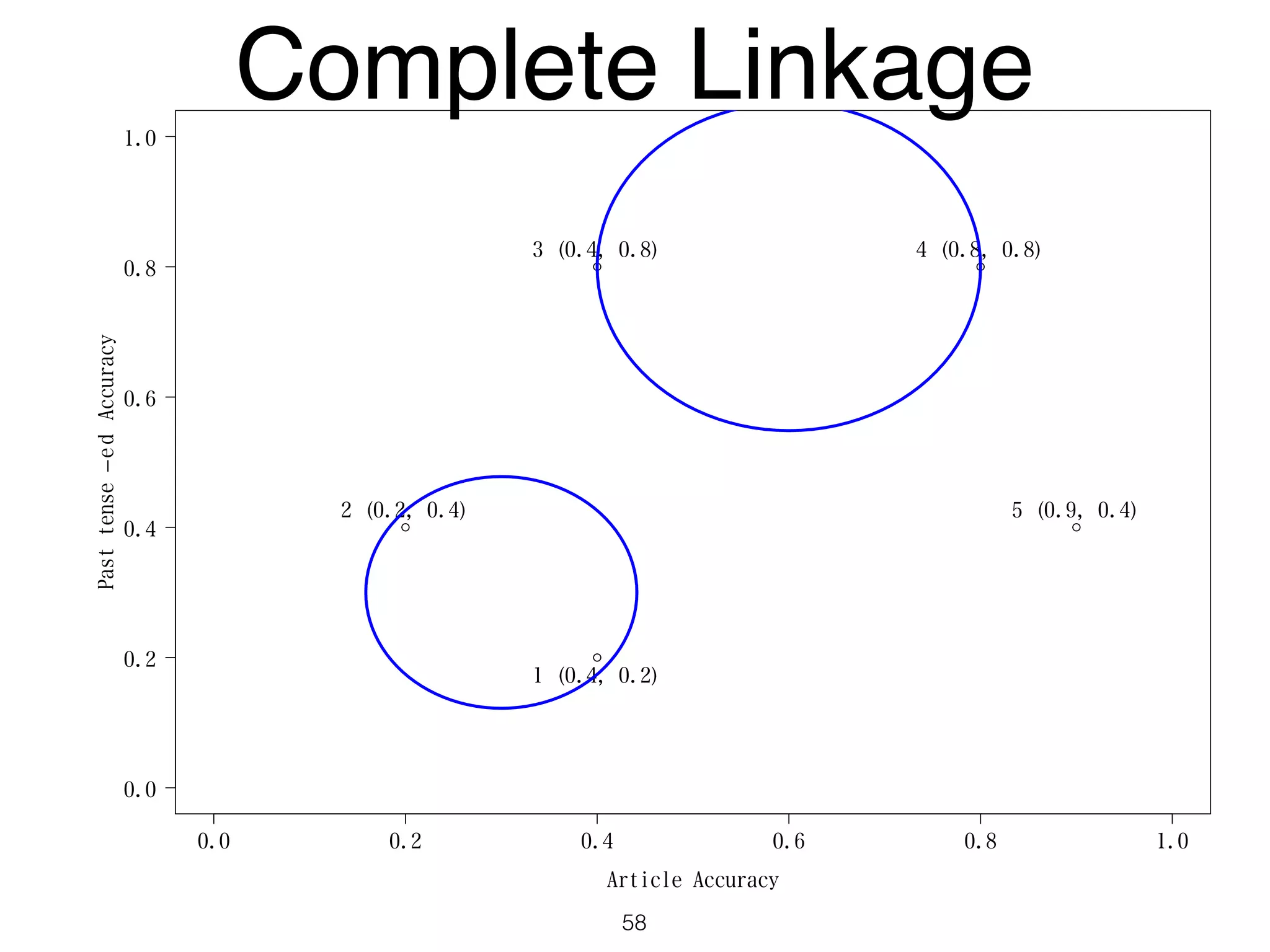

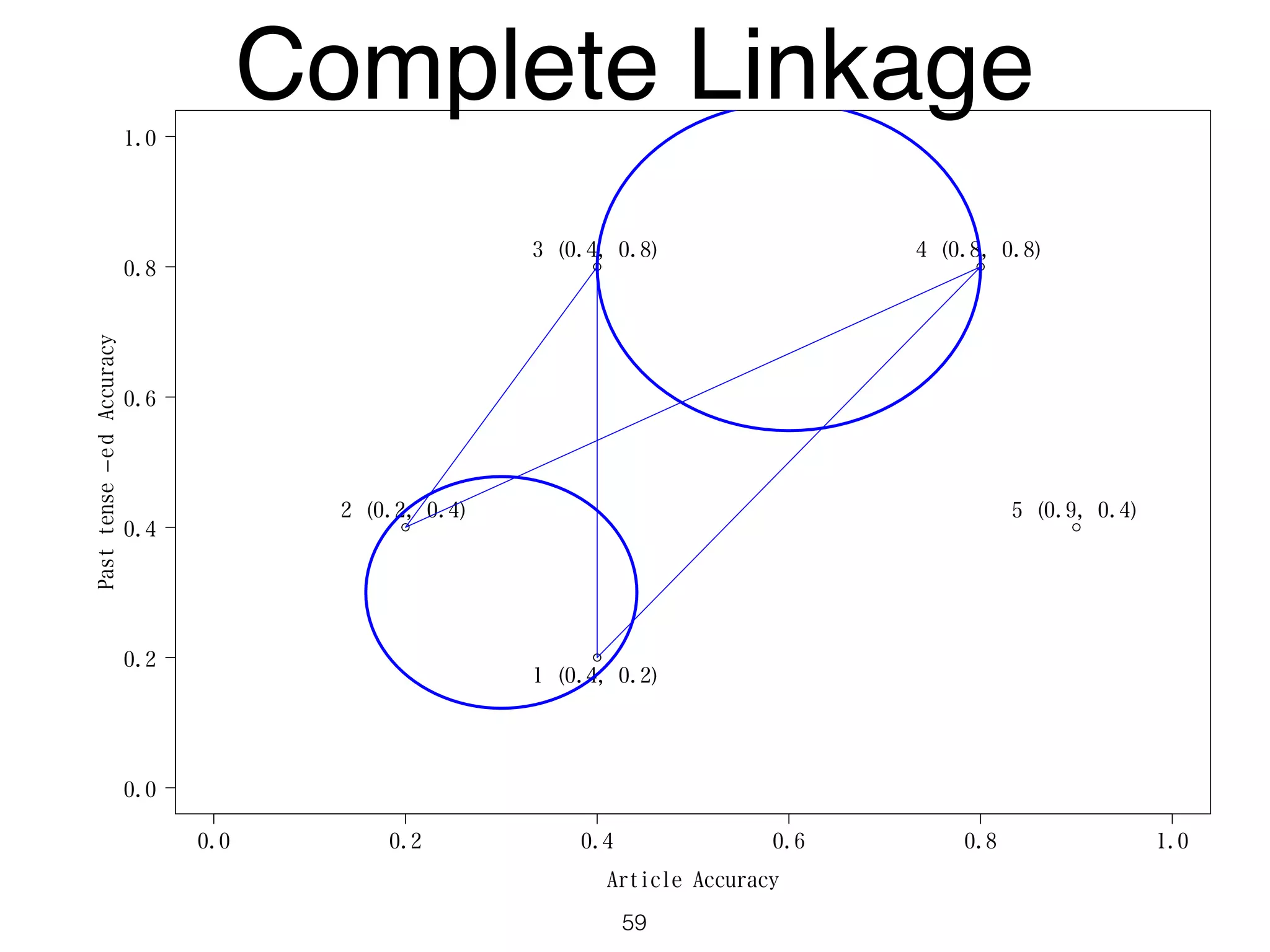

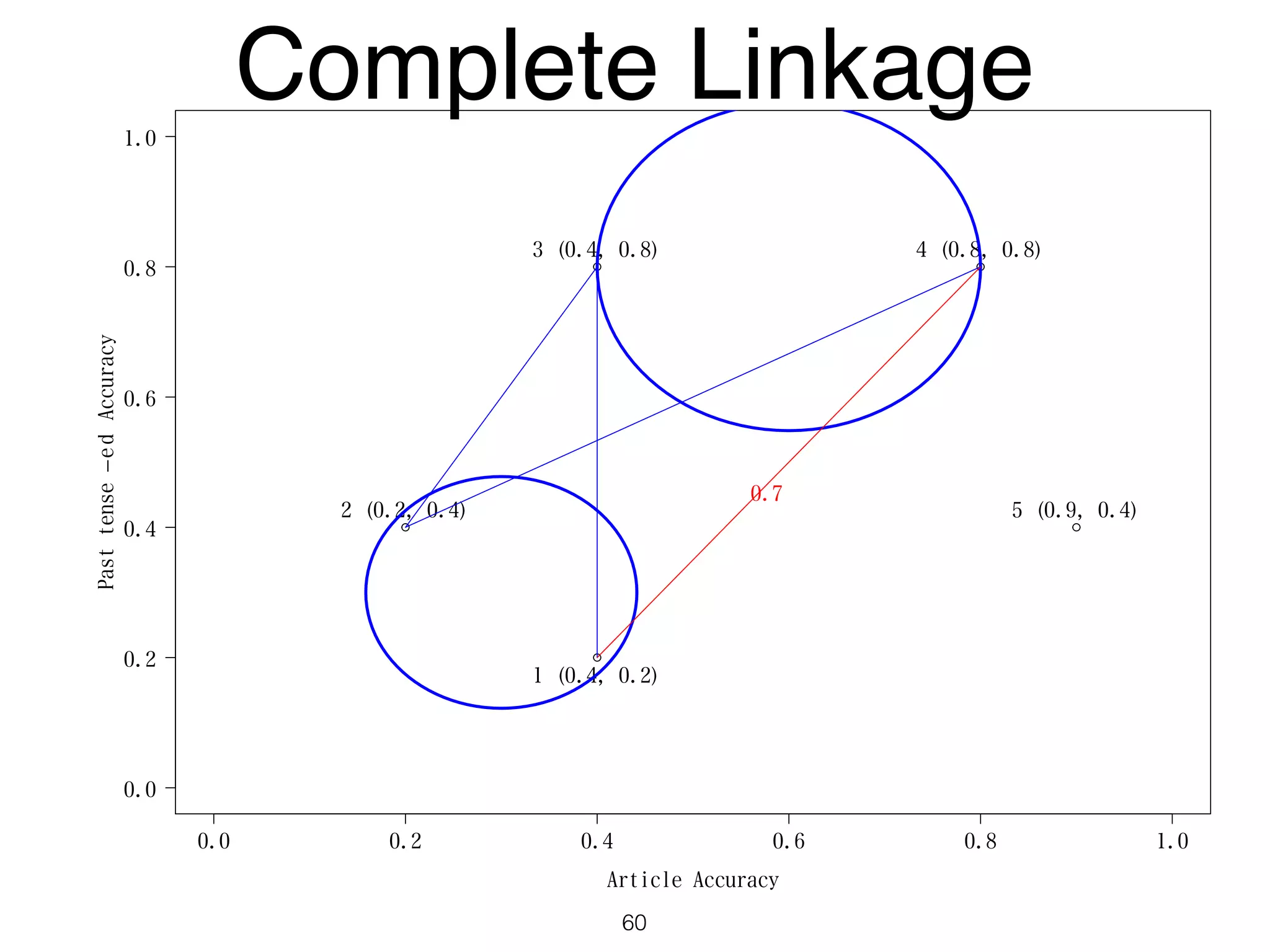

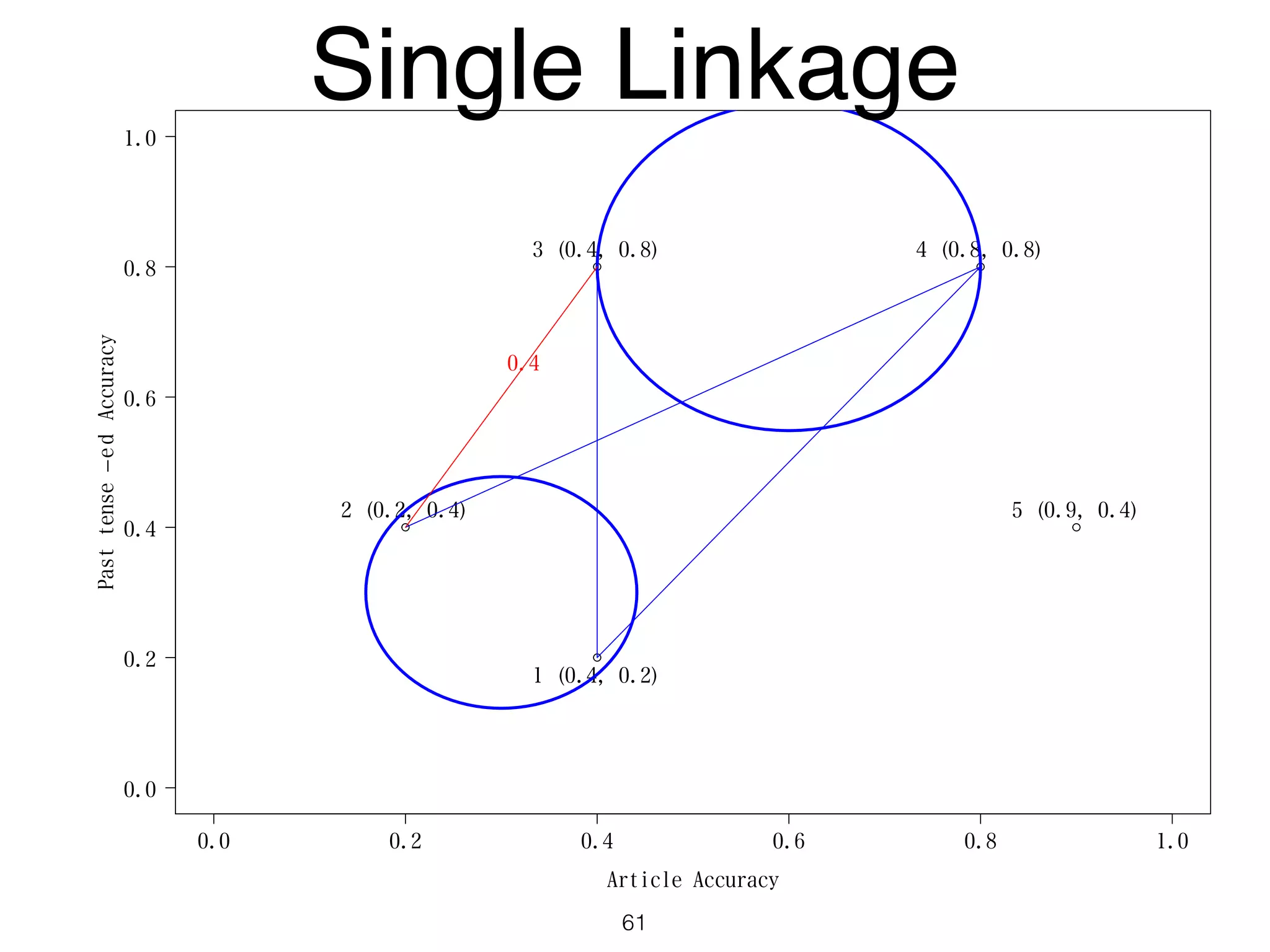

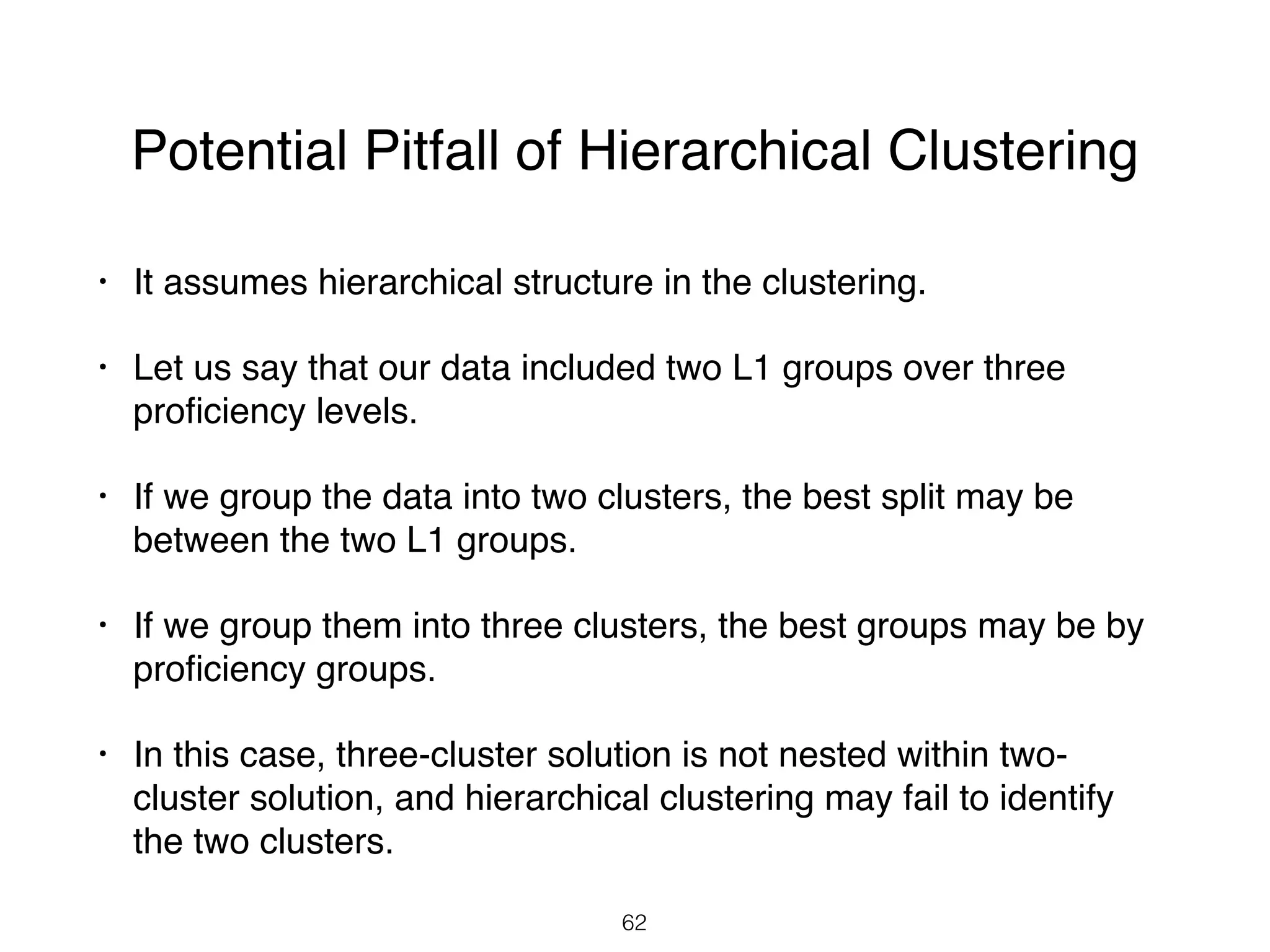

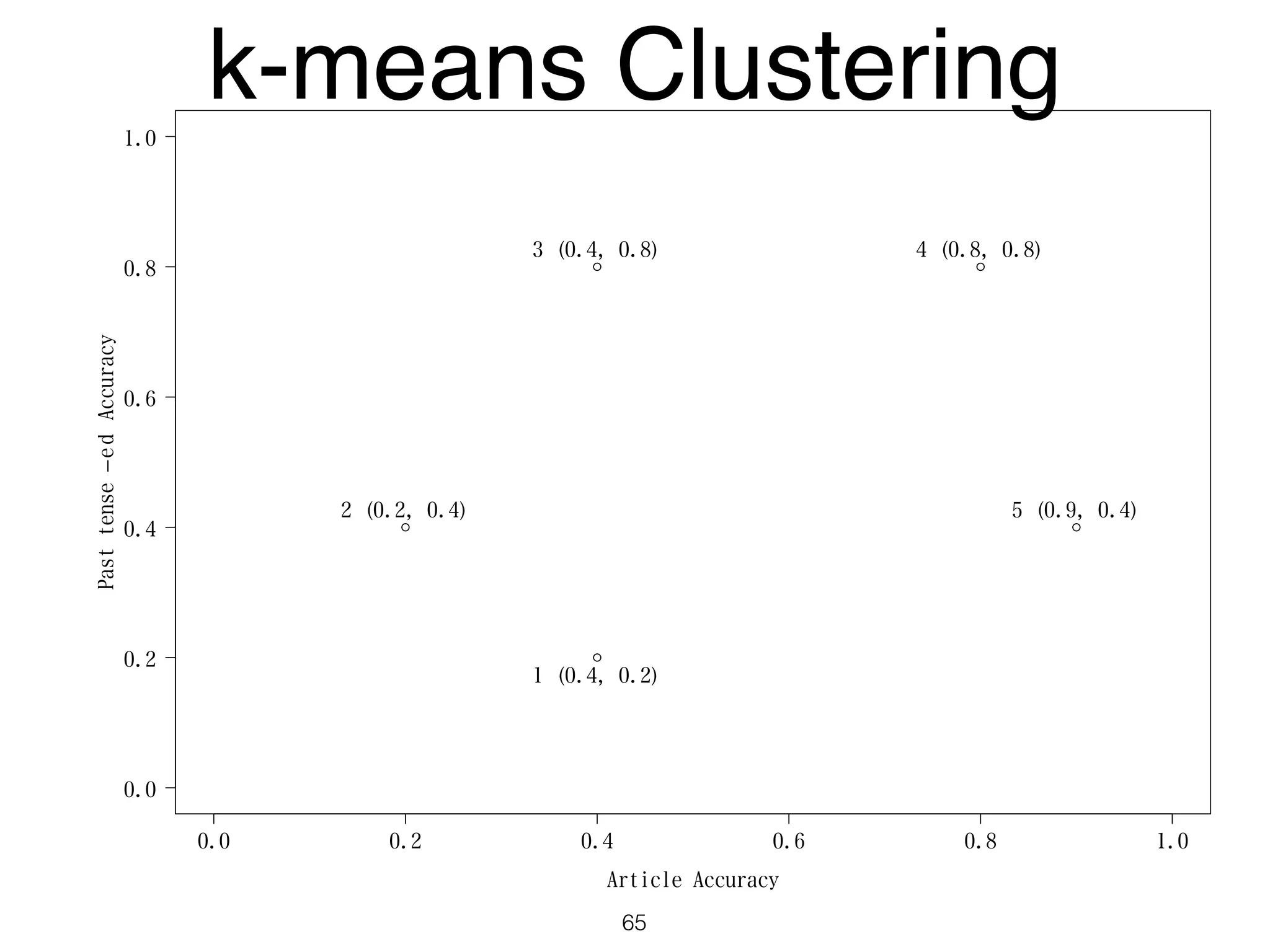

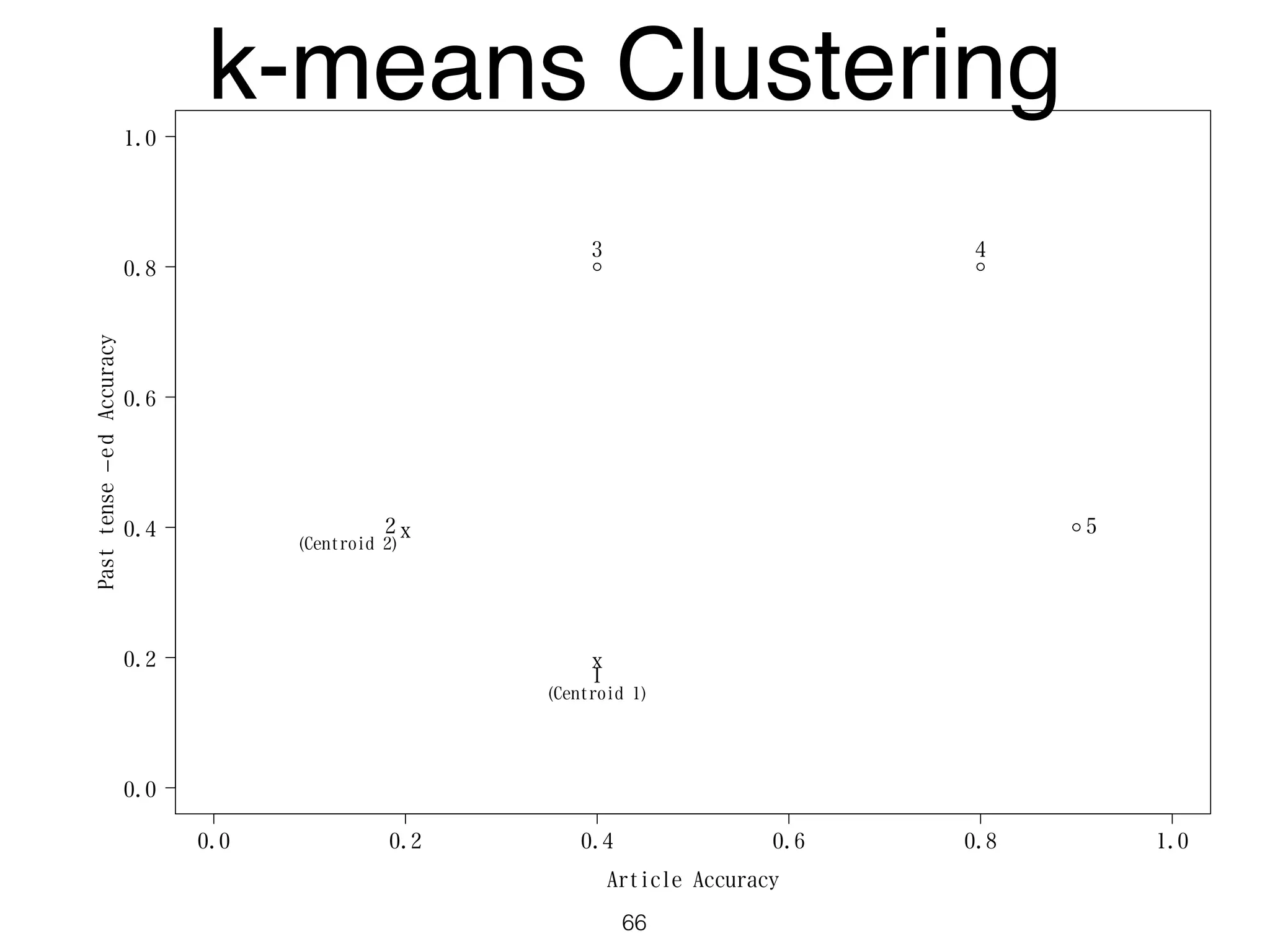

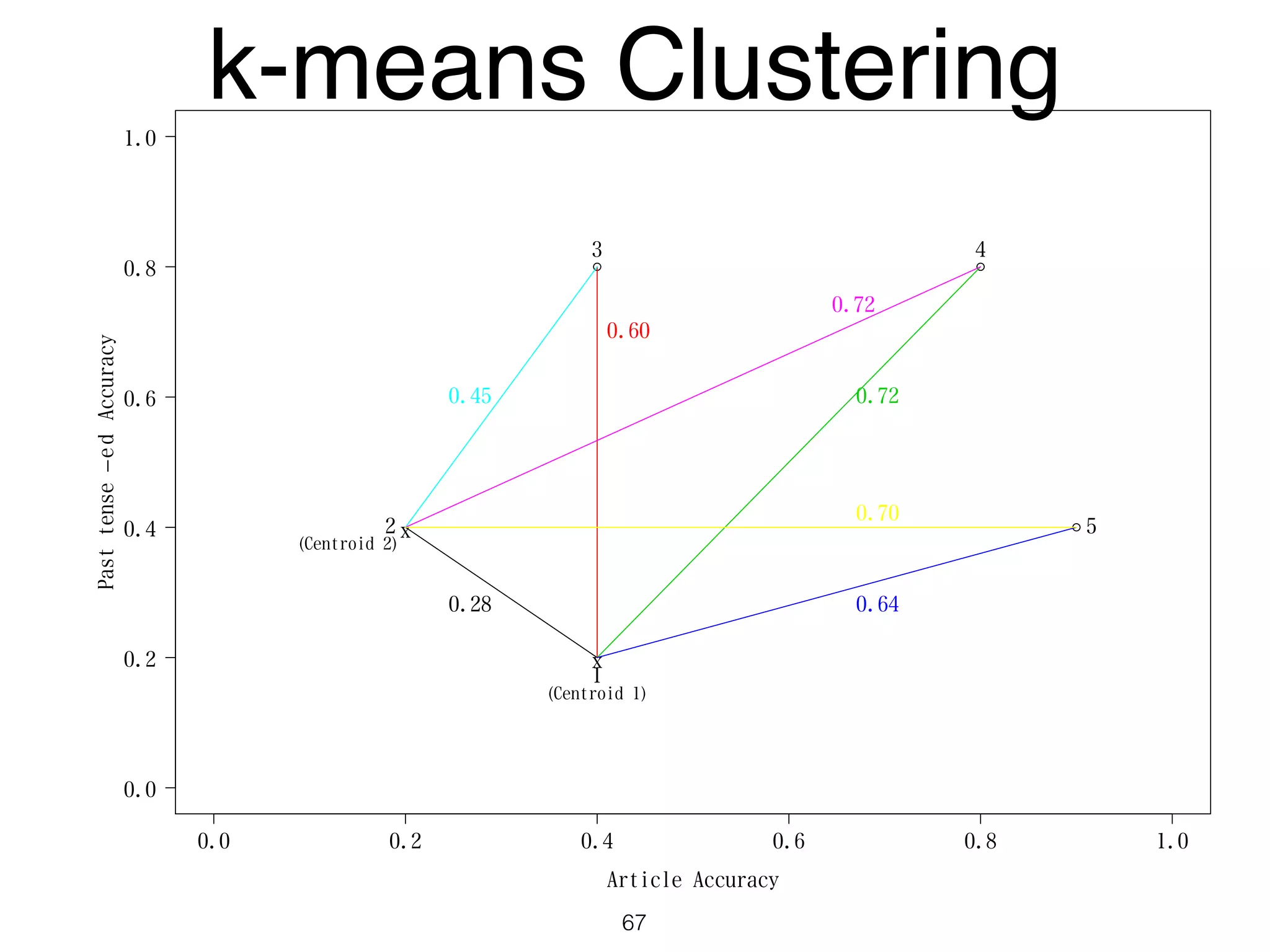

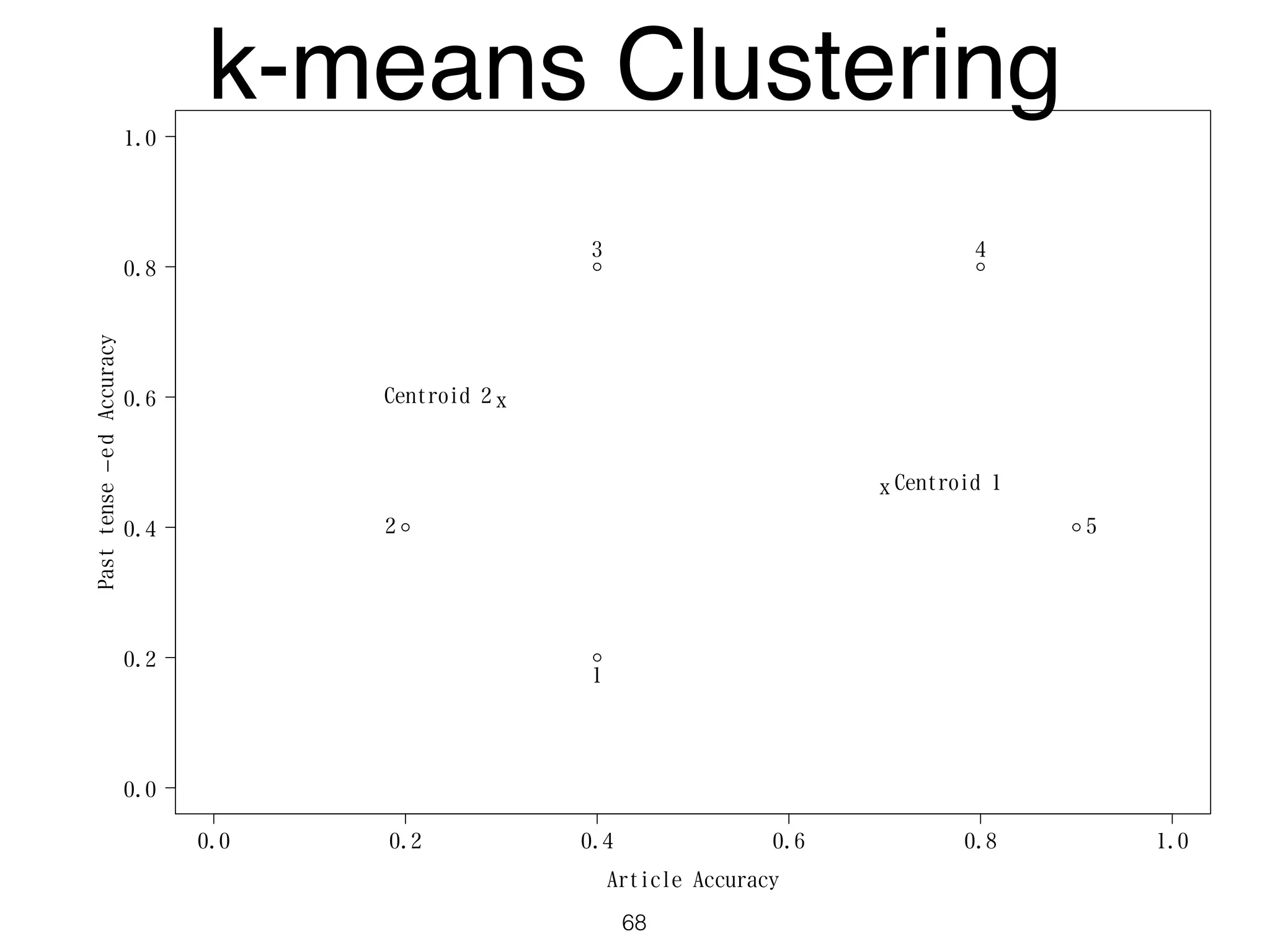

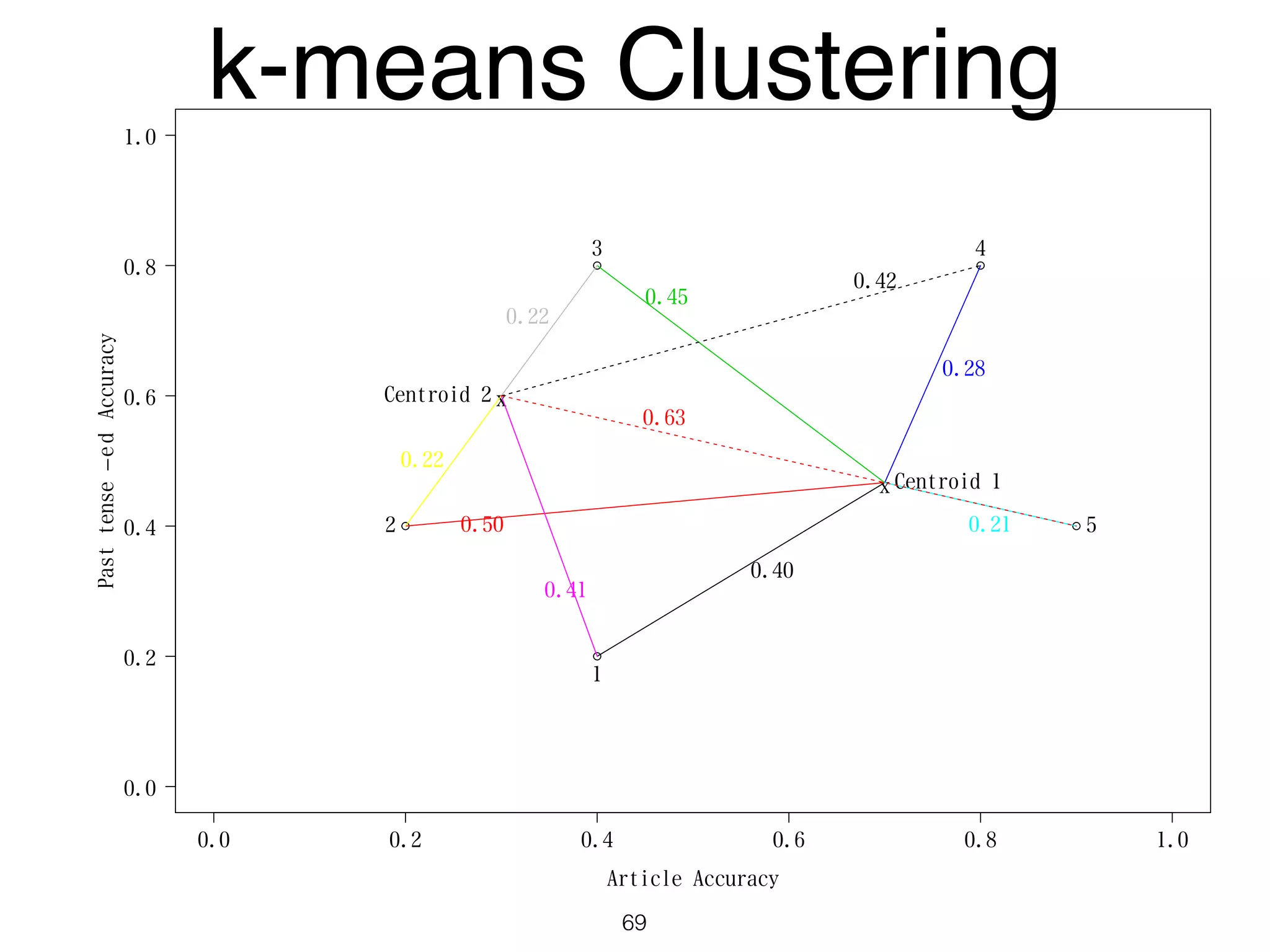

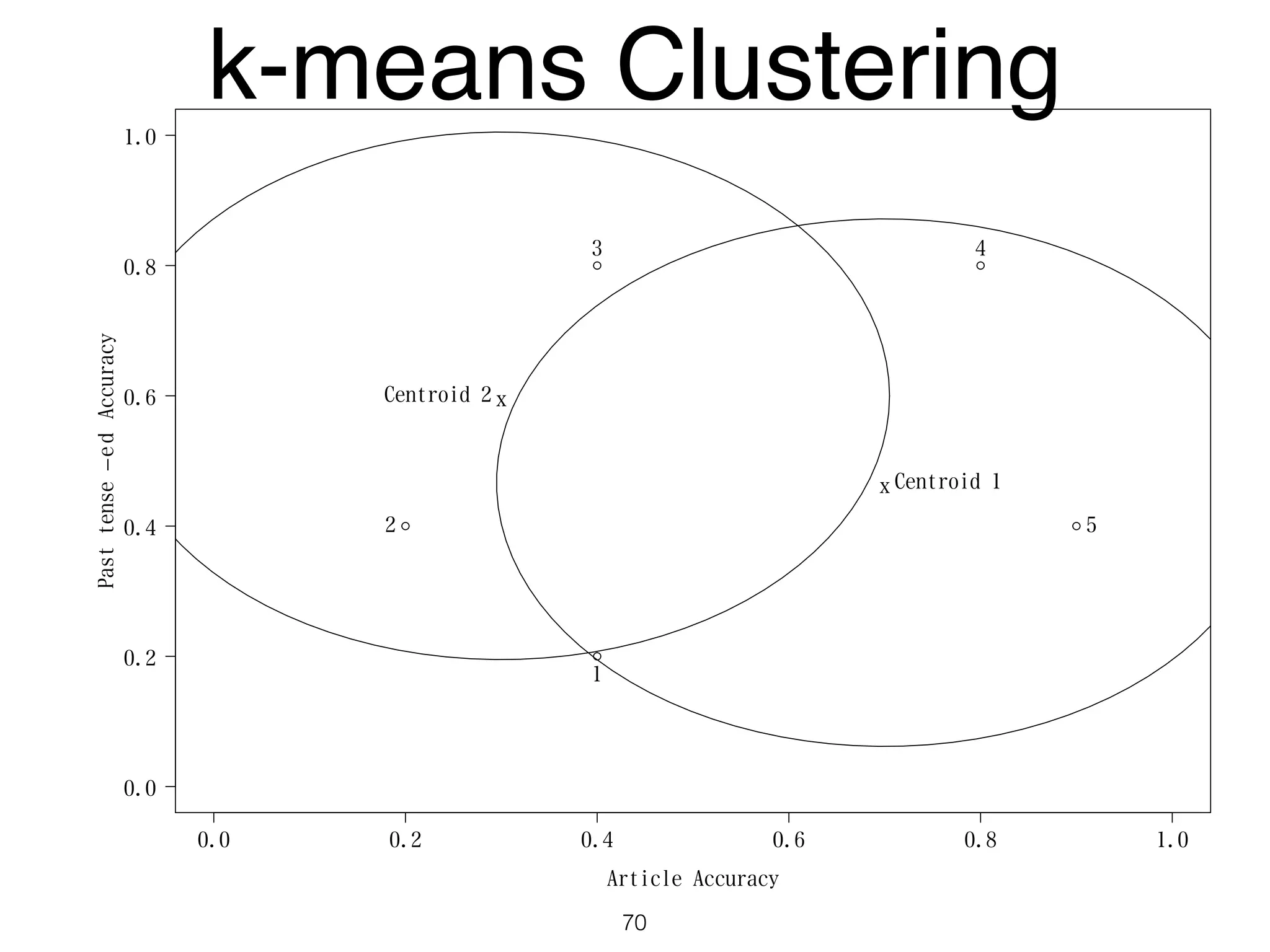

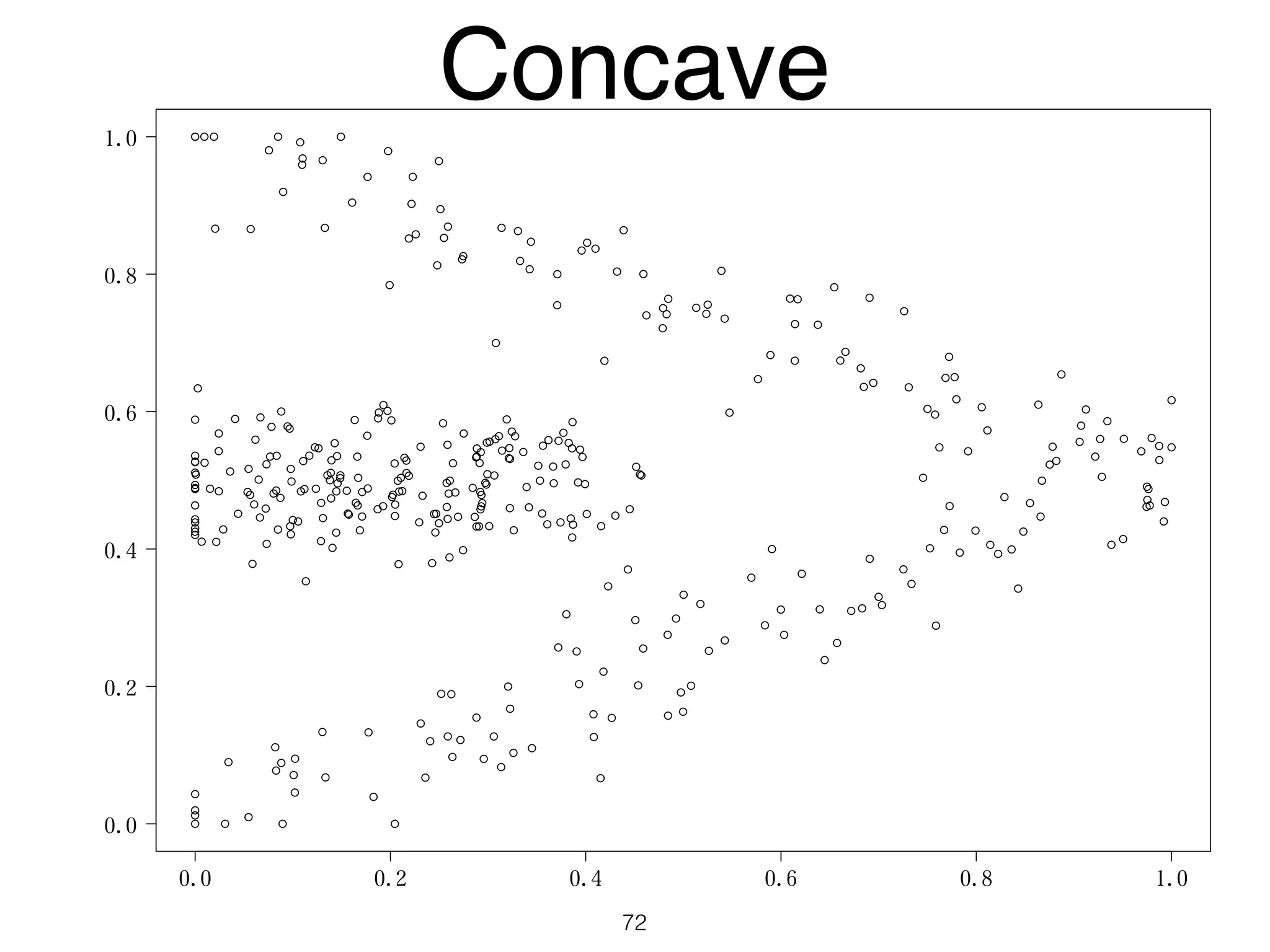

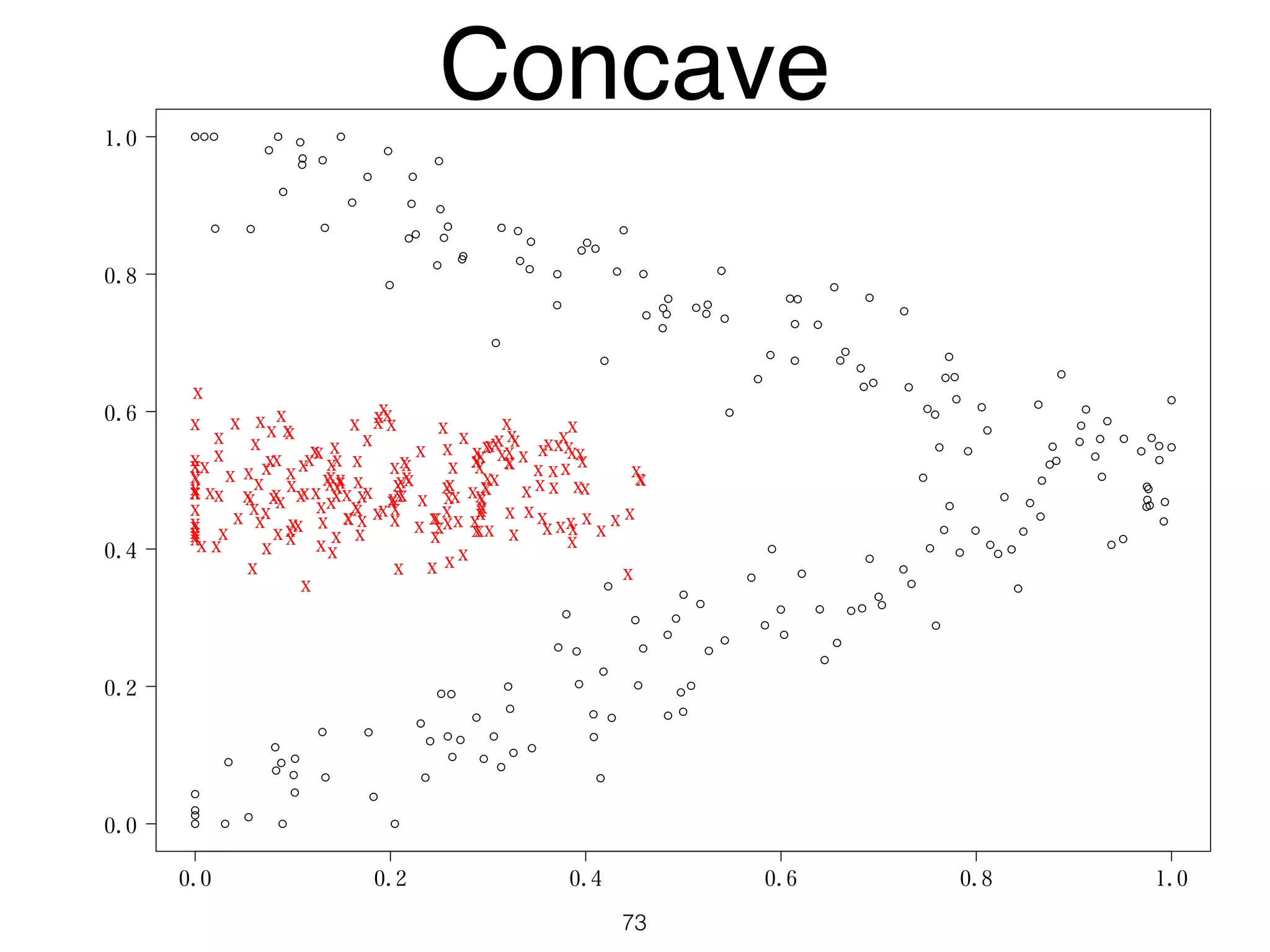

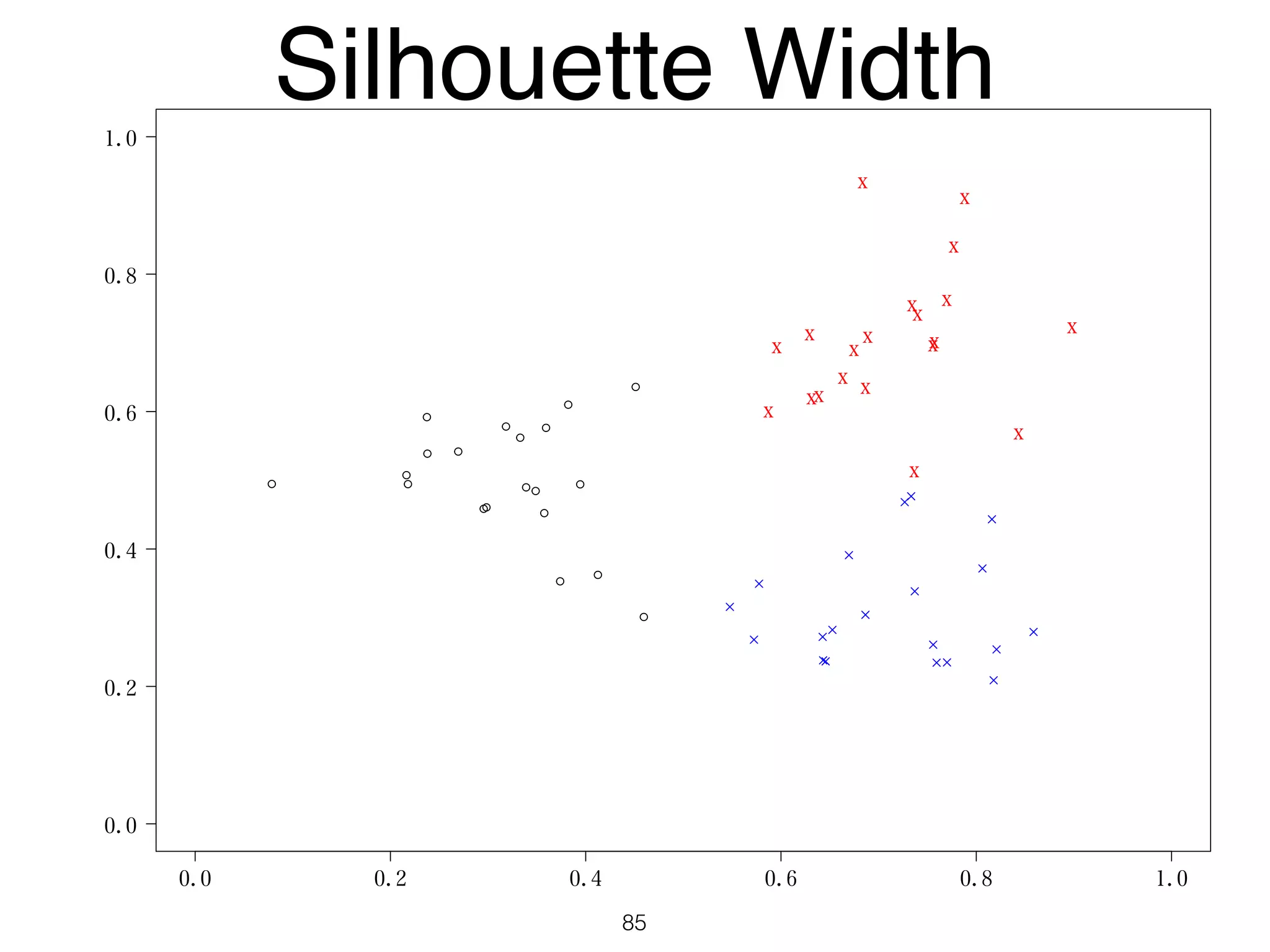

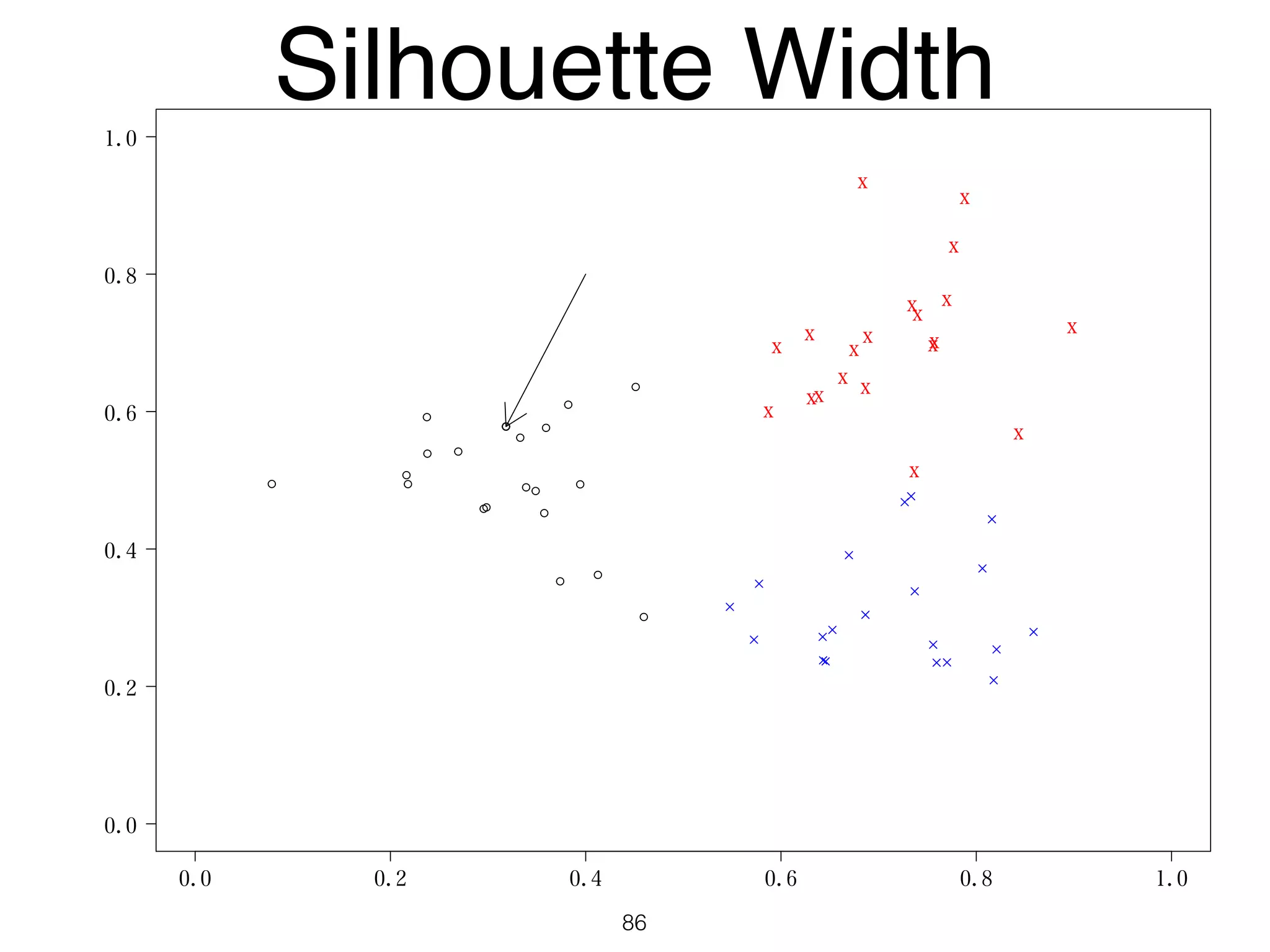

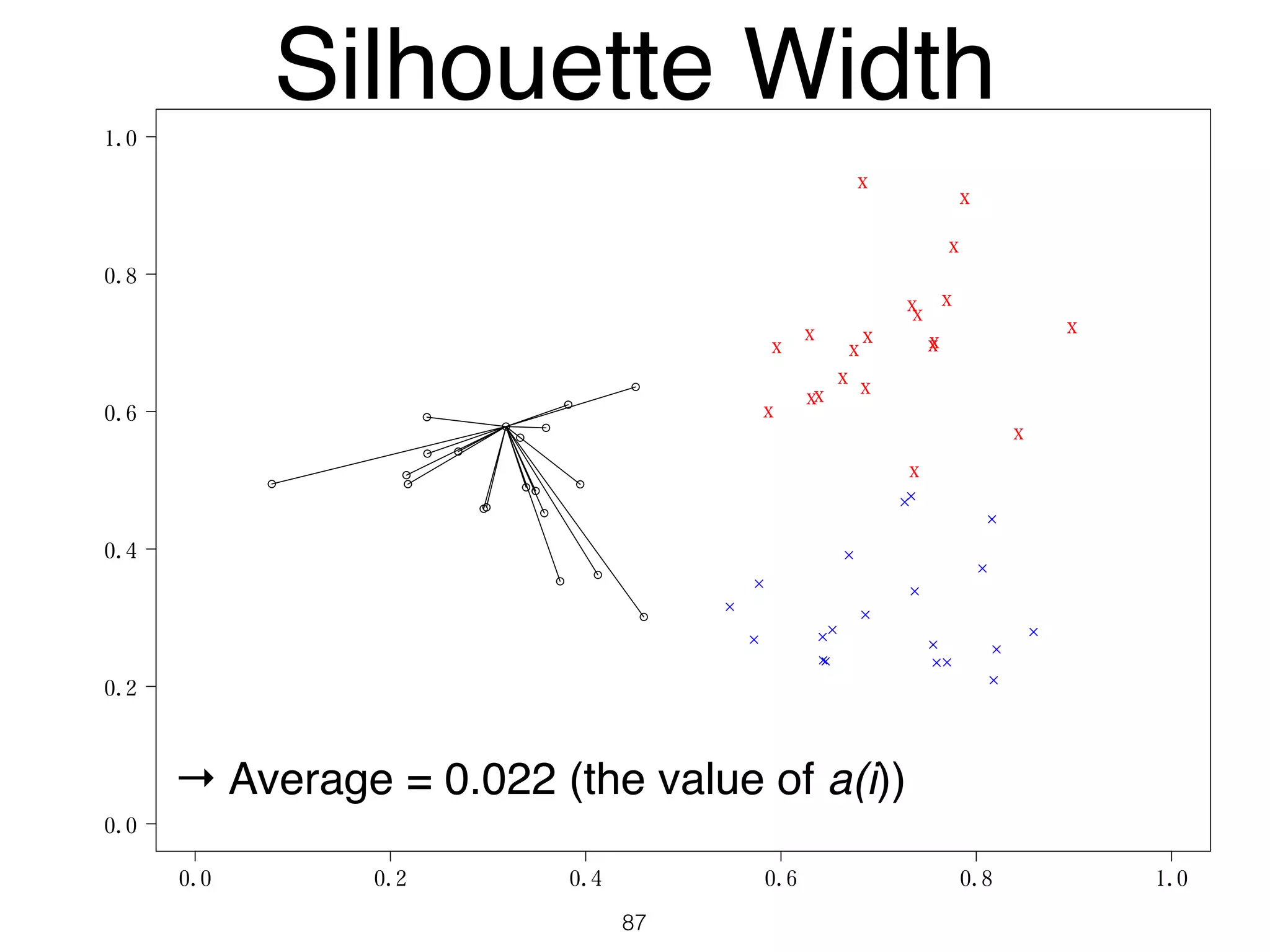

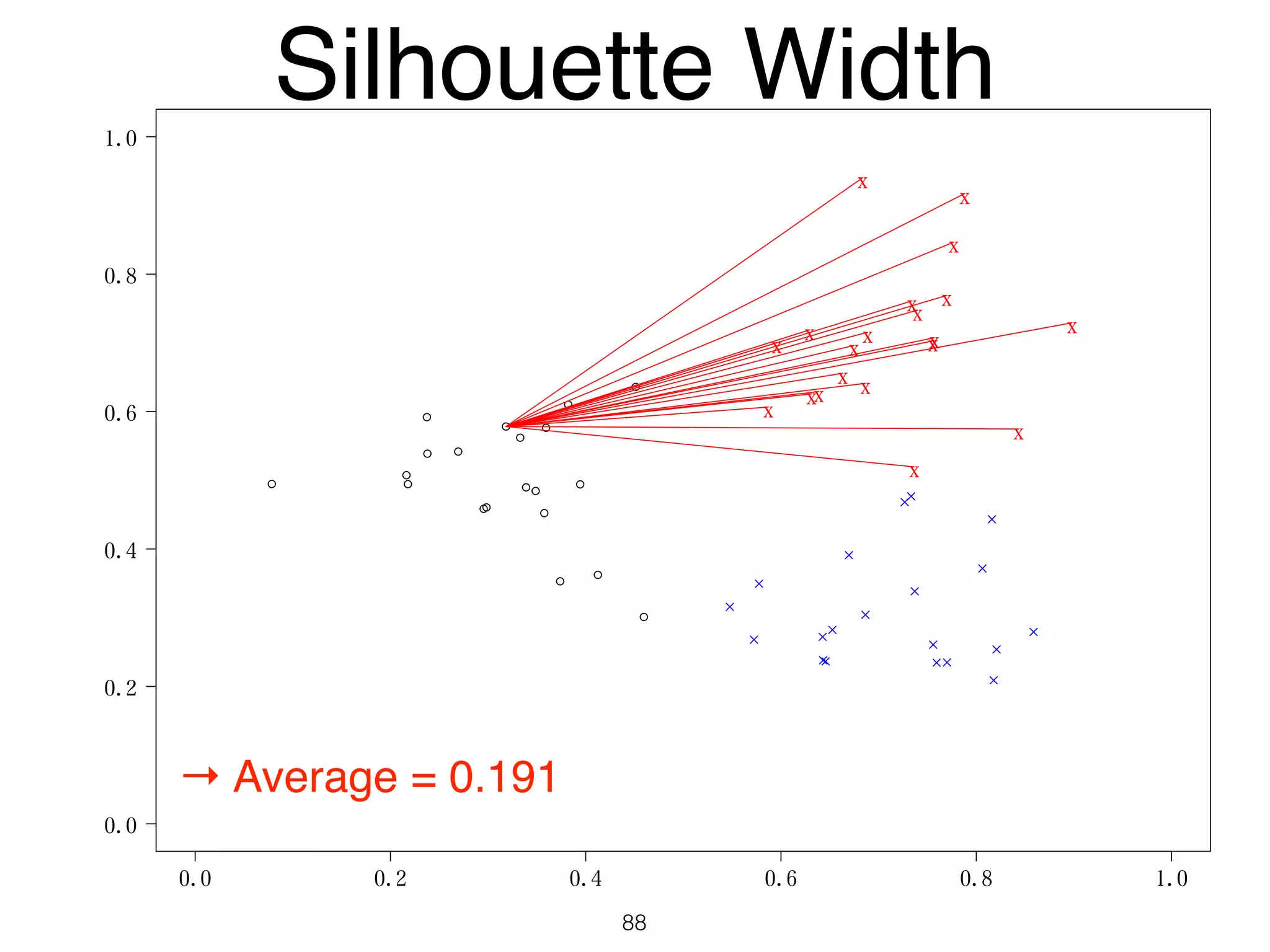

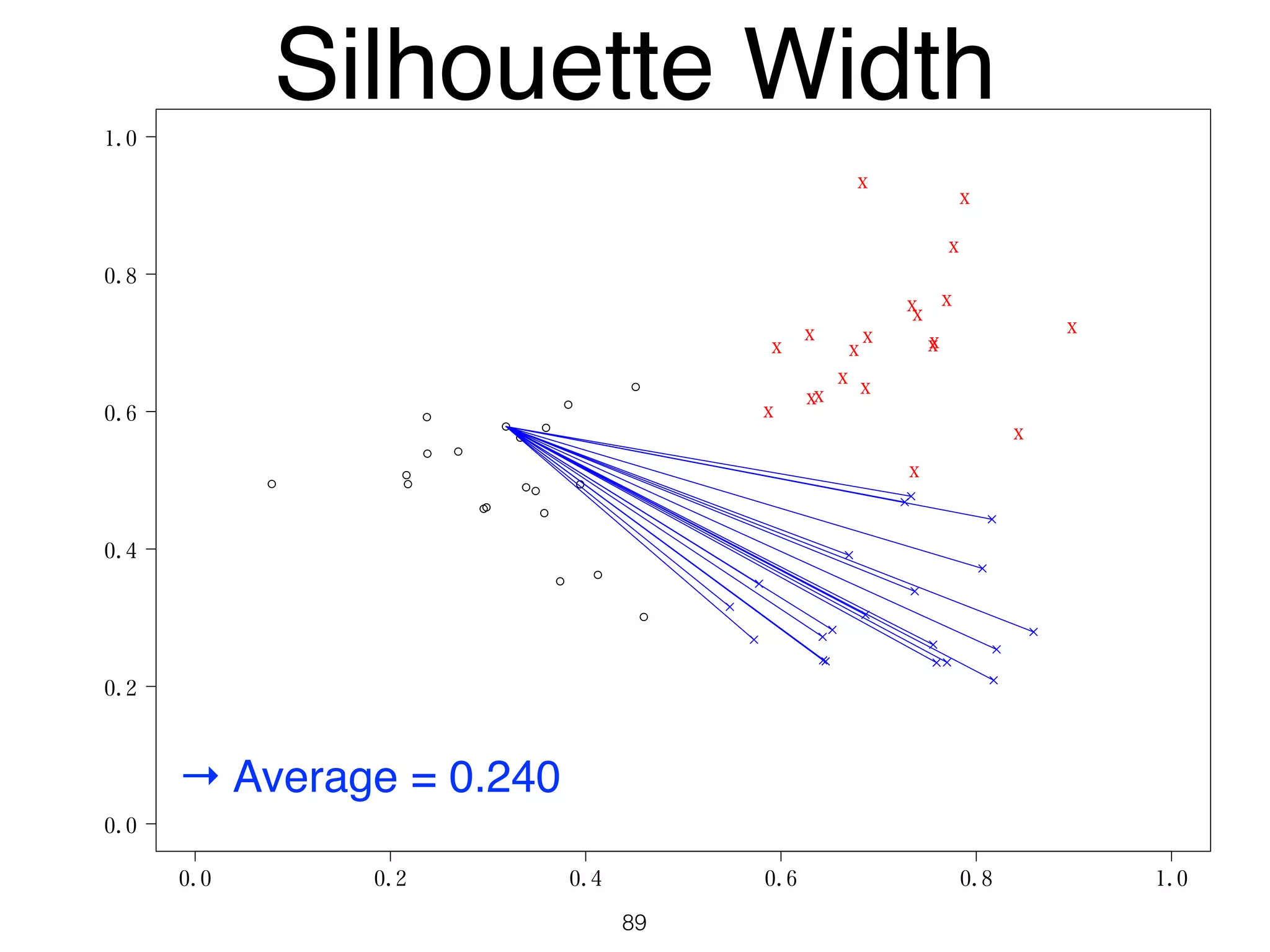

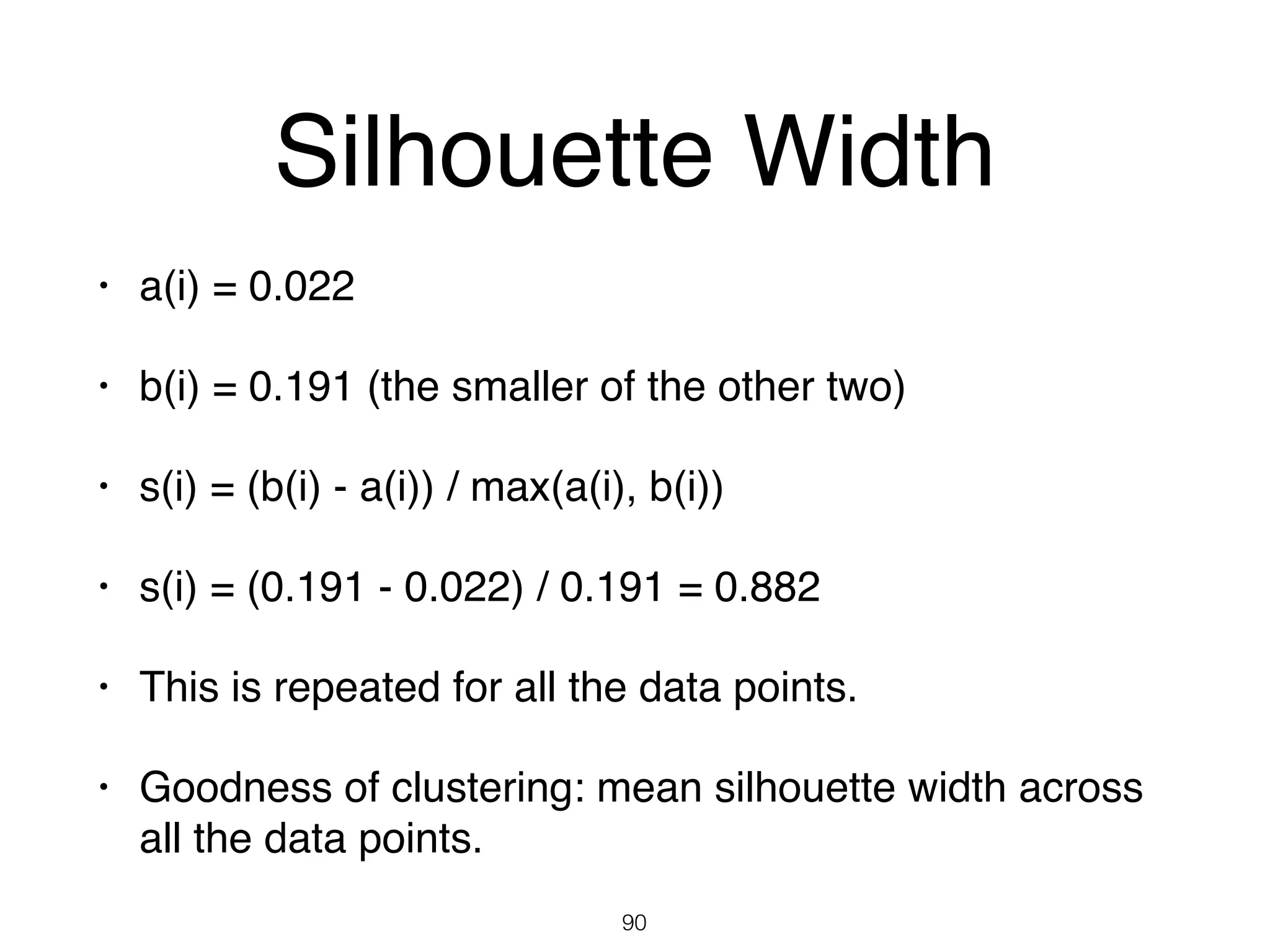

This document covers cluster analysis in R, that is, identifying groups of data points based on how similar they are to one another. It examines several distance measures, clustering algorithms such as agglomerative hierarchical clustering and k-means, and techniques for calculating the similarity between clusters. The focus is on applications in second language acquisition, where these statistical methods are used to explore typologies of learner profiles.
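As a rough illustration of the workflow summarized above, the following minimal sketch runs both agglomerative hierarchical clustering and k-means on the same data using base R (`dist`, `hclust`, `cutree`, `kmeans`). The data frame `learners`, its two variables, the choice of two clusters, Euclidean distance, and Ward linkage are all assumptions made for the example; none of them come from the slides.

```r
# Hypothetical learner data: two invented measures per learner (illustration only)
set.seed(42)
learners <- data.frame(
  motivation  = c(rnorm(10, mean = 2), rnorm(10, mean = 6)),
  proficiency = c(rnorm(10, mean = 3), rnorm(10, mean = 7))
)

# Standardize the variables so each contributes equally to the distances
scaled <- scale(learners)

# Pairwise Euclidean distances between learners
d <- dist(scaled, method = "euclidean")

# Agglomerative hierarchical clustering; Ward linkage is one of several options
hc <- hclust(d, method = "ward.D2")

# Cut the dendrogram into two groups (k = 2 is an assumption for this sketch)
hc_groups <- cutree(hc, k = 2)

# k-means with the same number of clusters and several random starts
km <- kmeans(scaled, centers = 2, nstart = 25)

# Cross-tabulate the two solutions to see how far they agree
agreement <- table(hierarchical = hc_groups, kmeans = km$cluster)
print(agreement)
```

Calling `plot(hc)` draws the dendrogram, which can help when deciding how many clusters to keep. The cluster labels assigned by the two methods are arbitrary, so agreement shows up as a table with most counts concentrated in one cell per row rather than on the diagonal.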