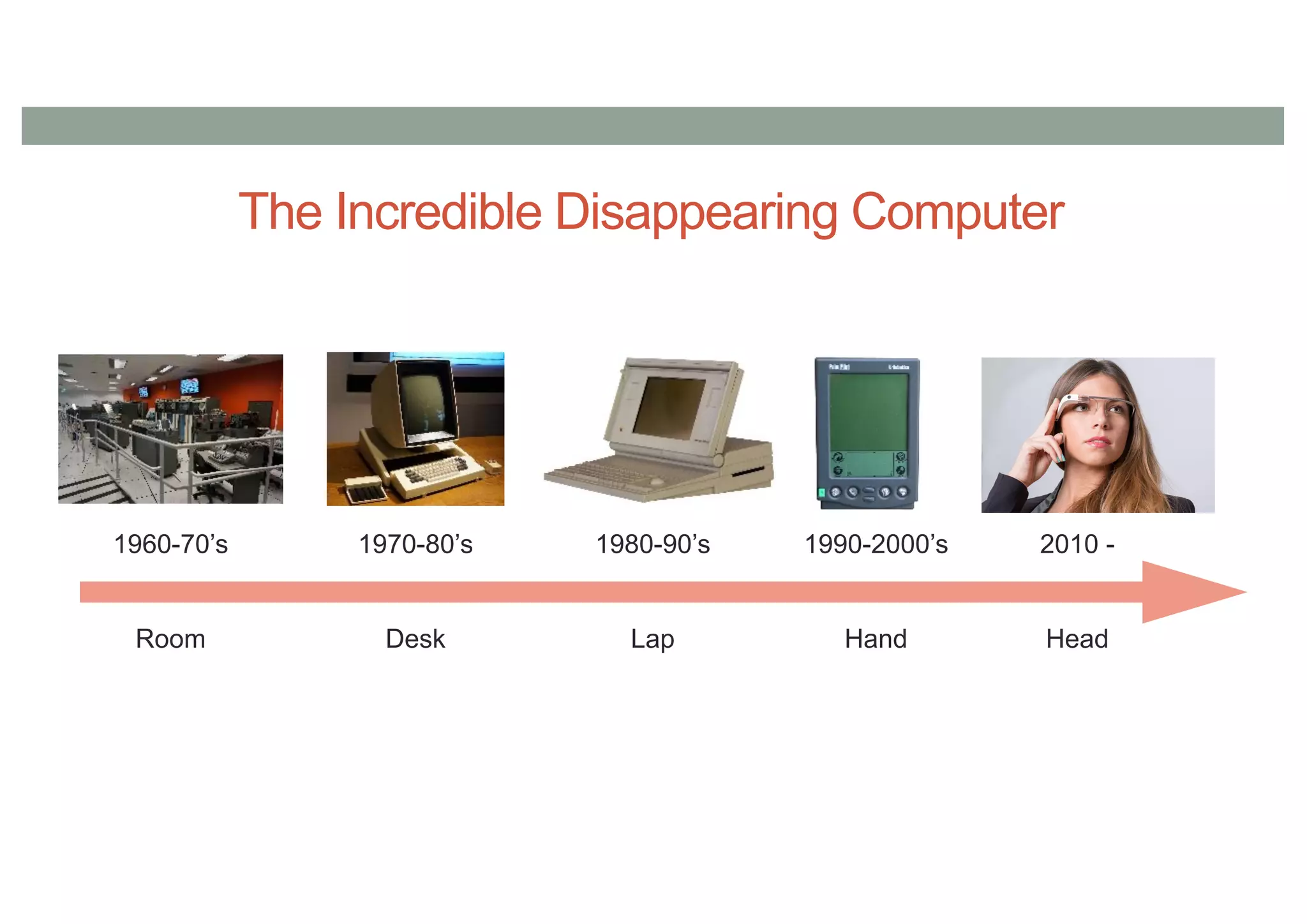

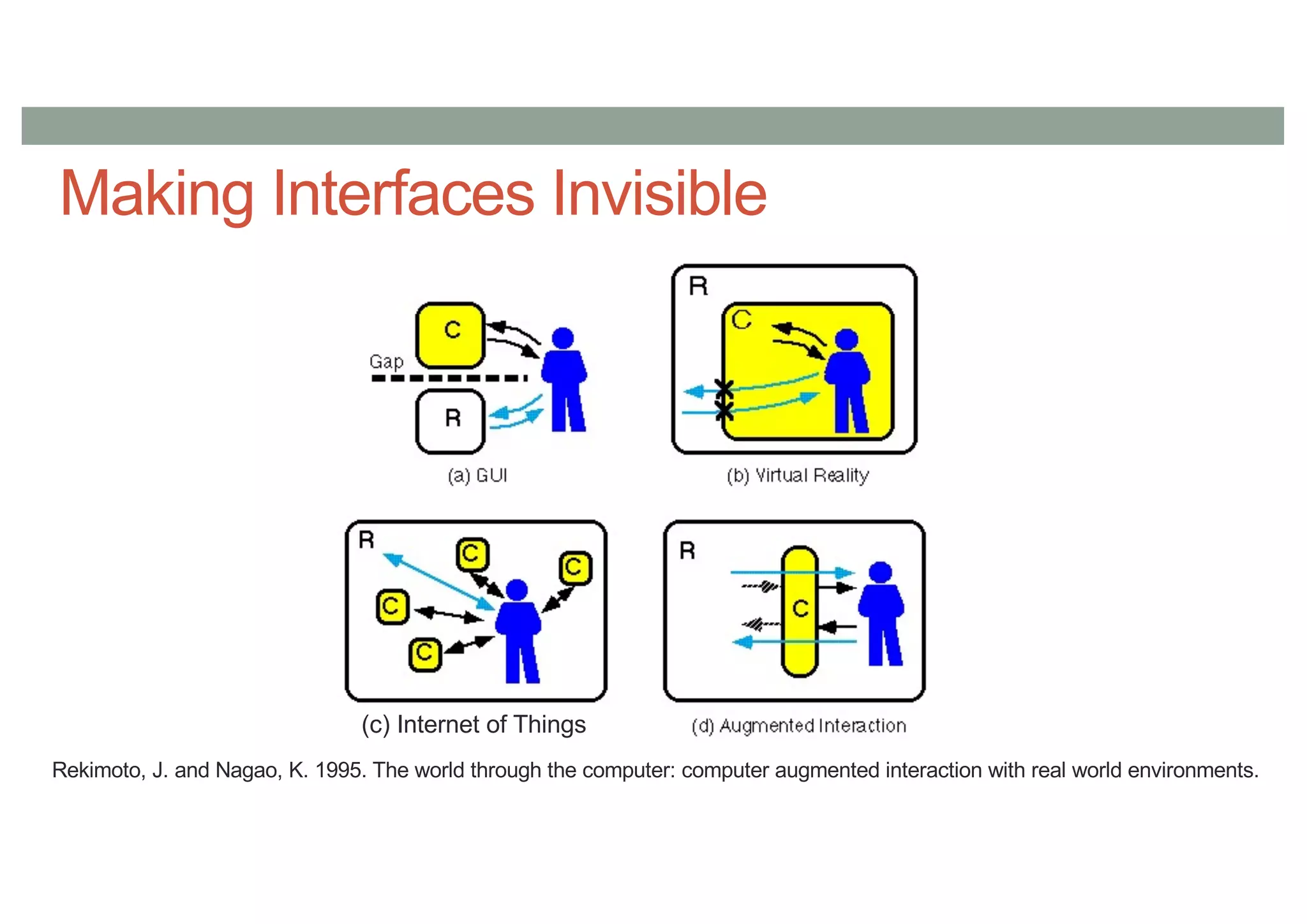

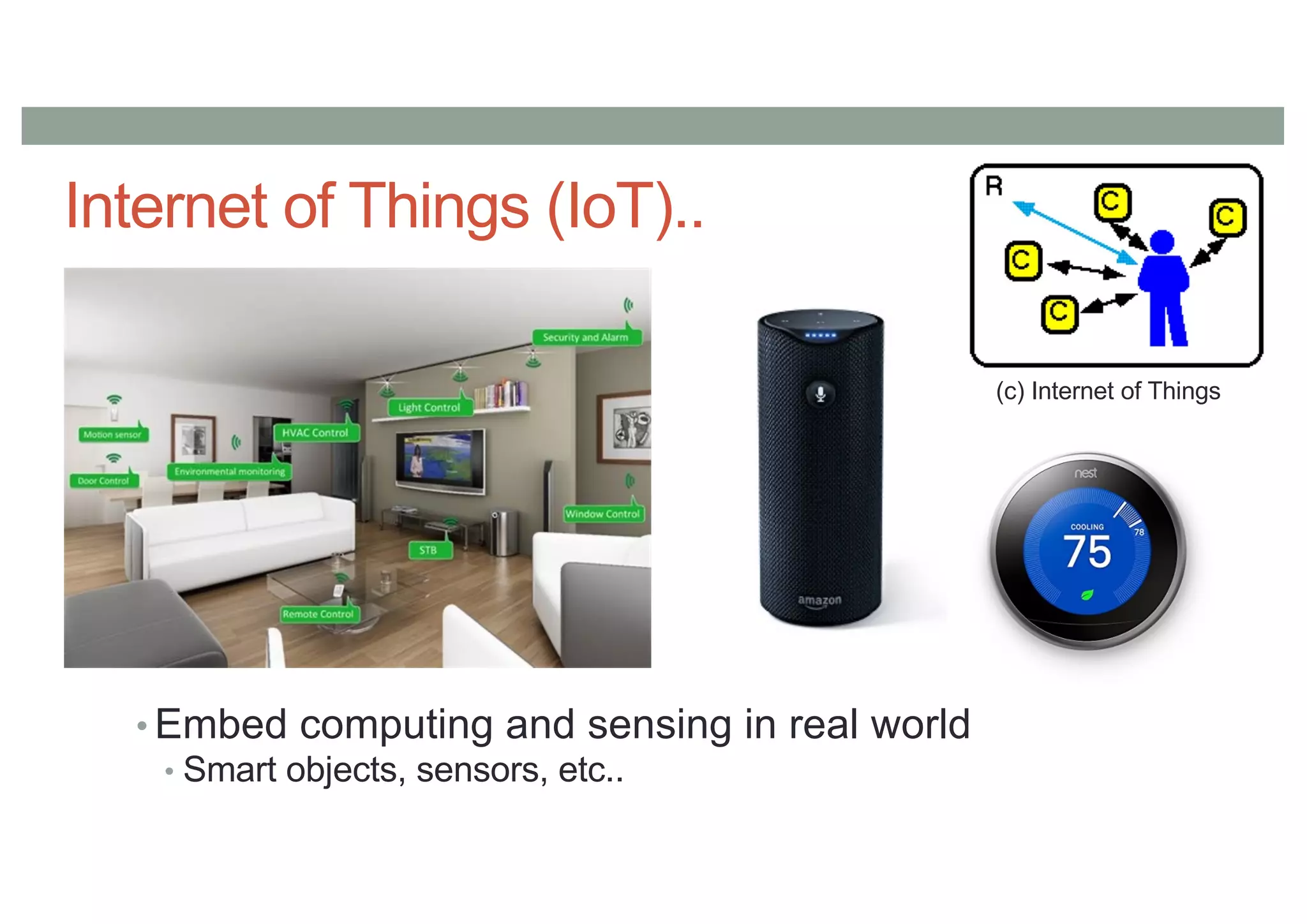

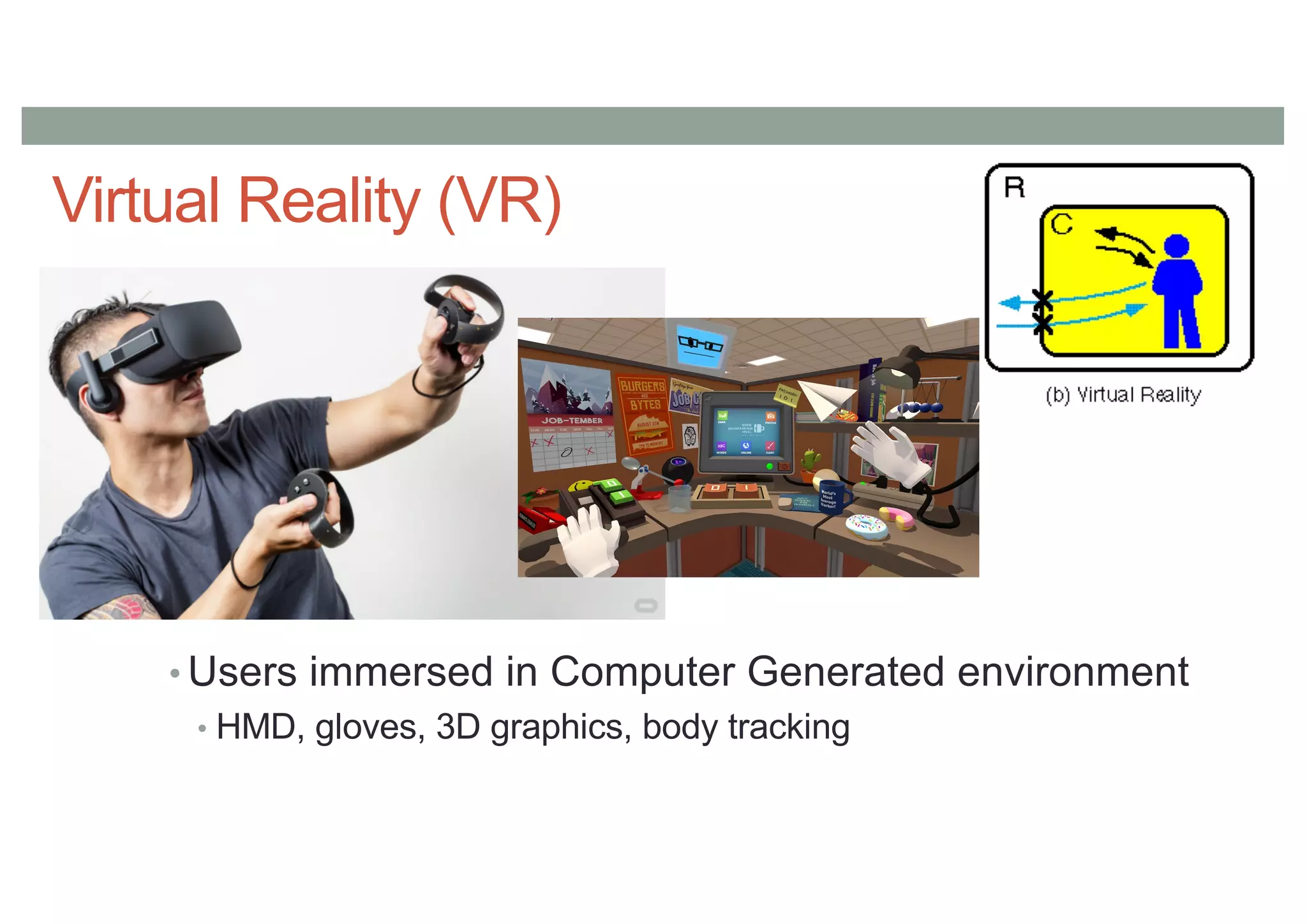

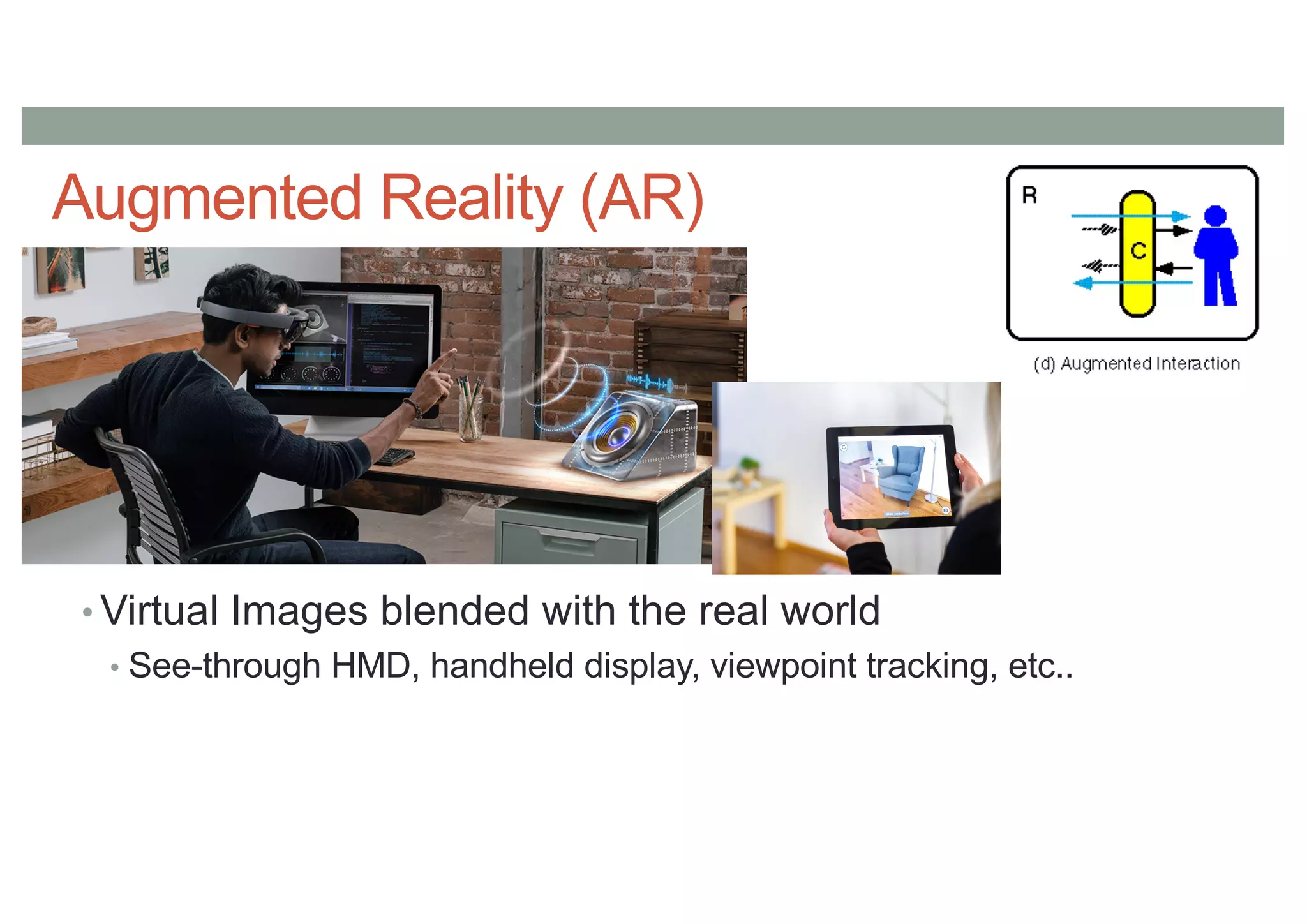

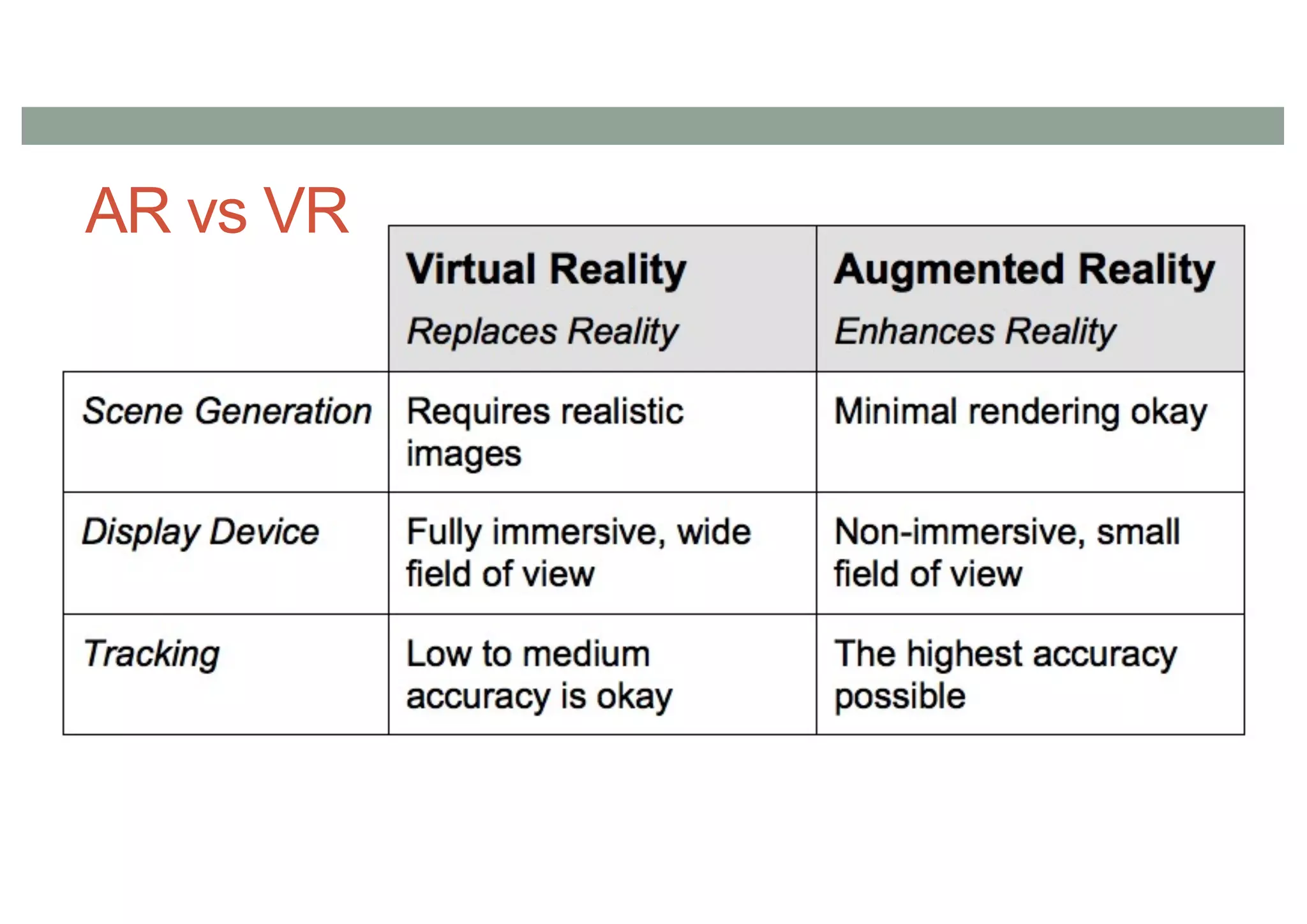

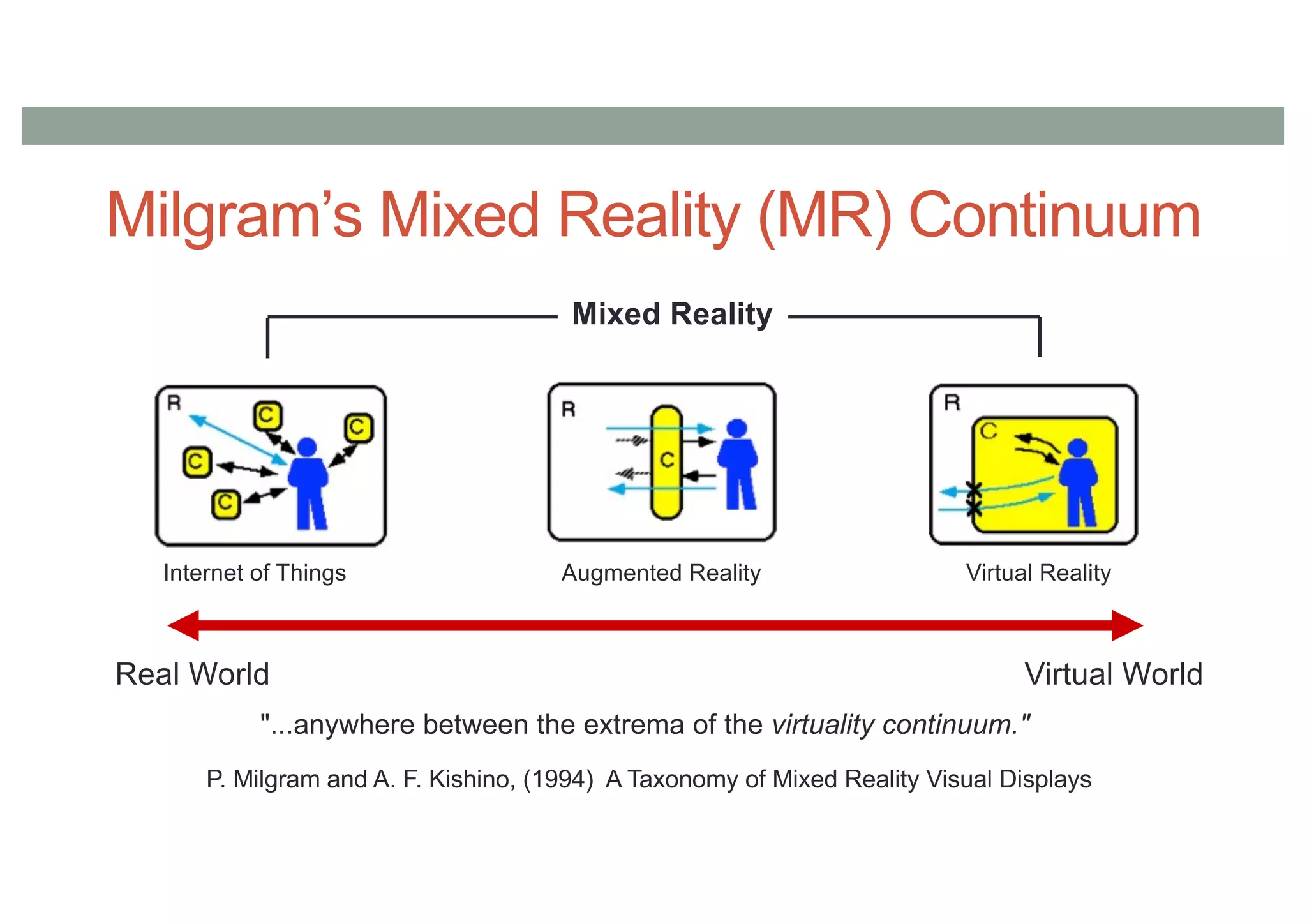

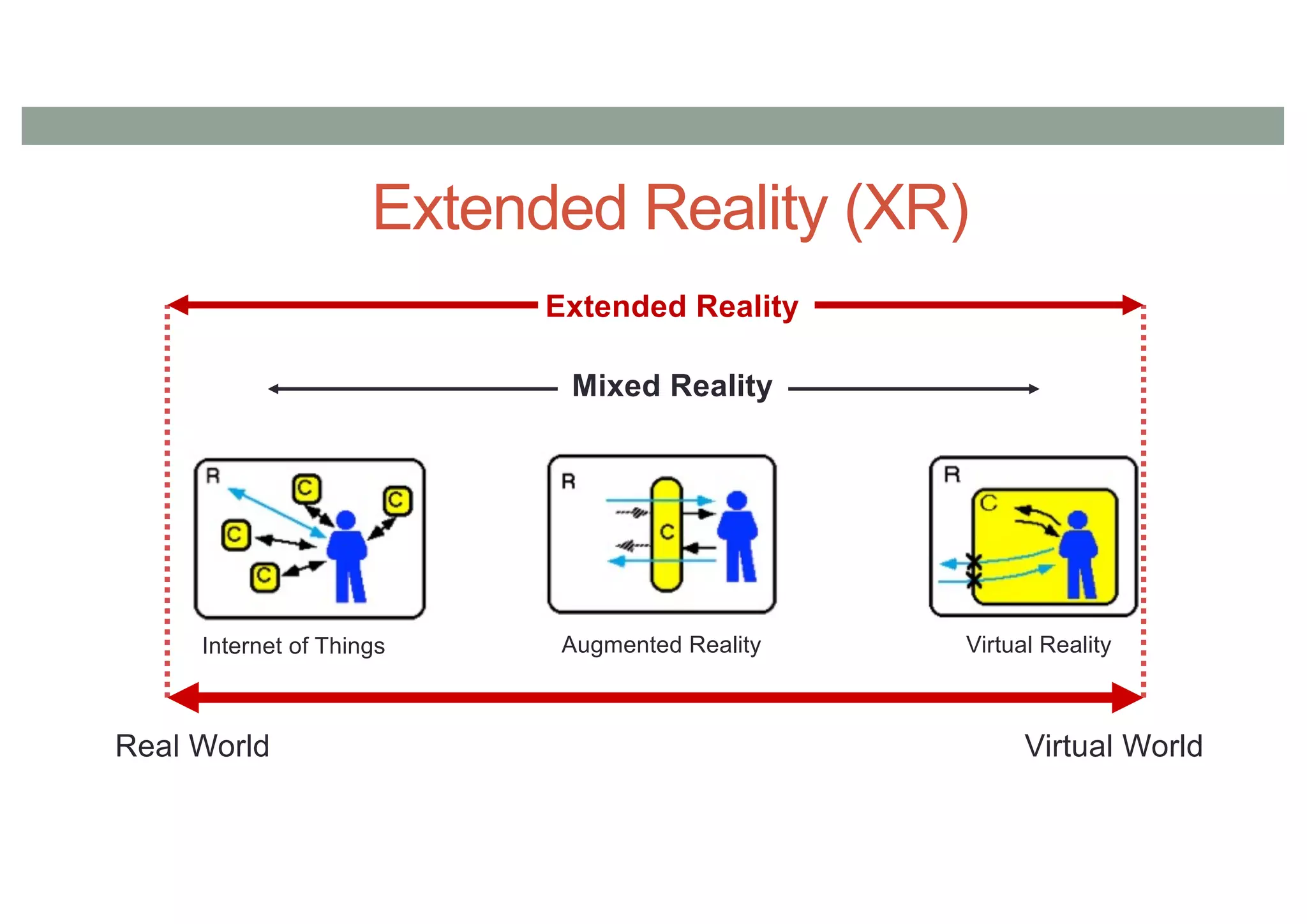

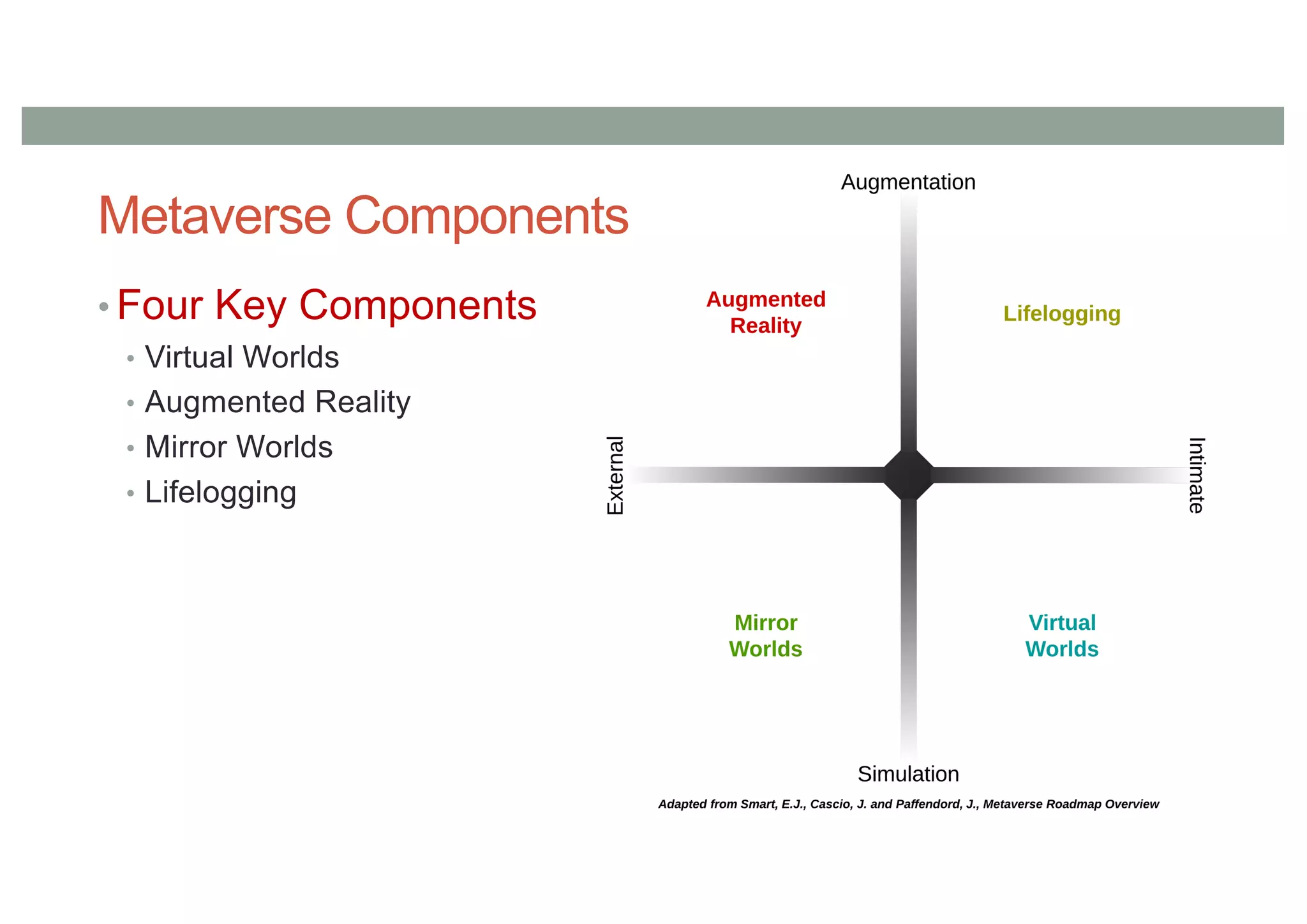

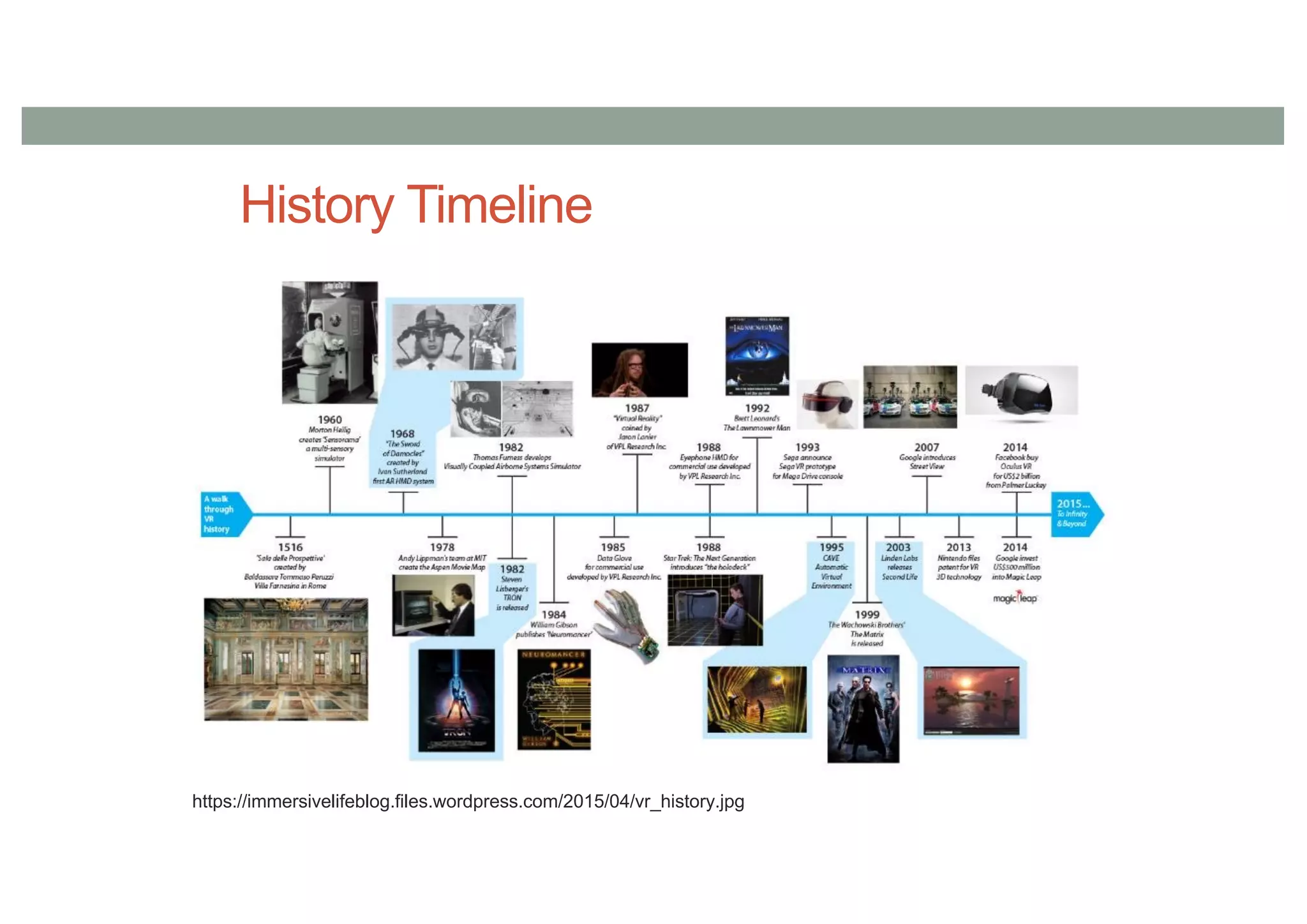

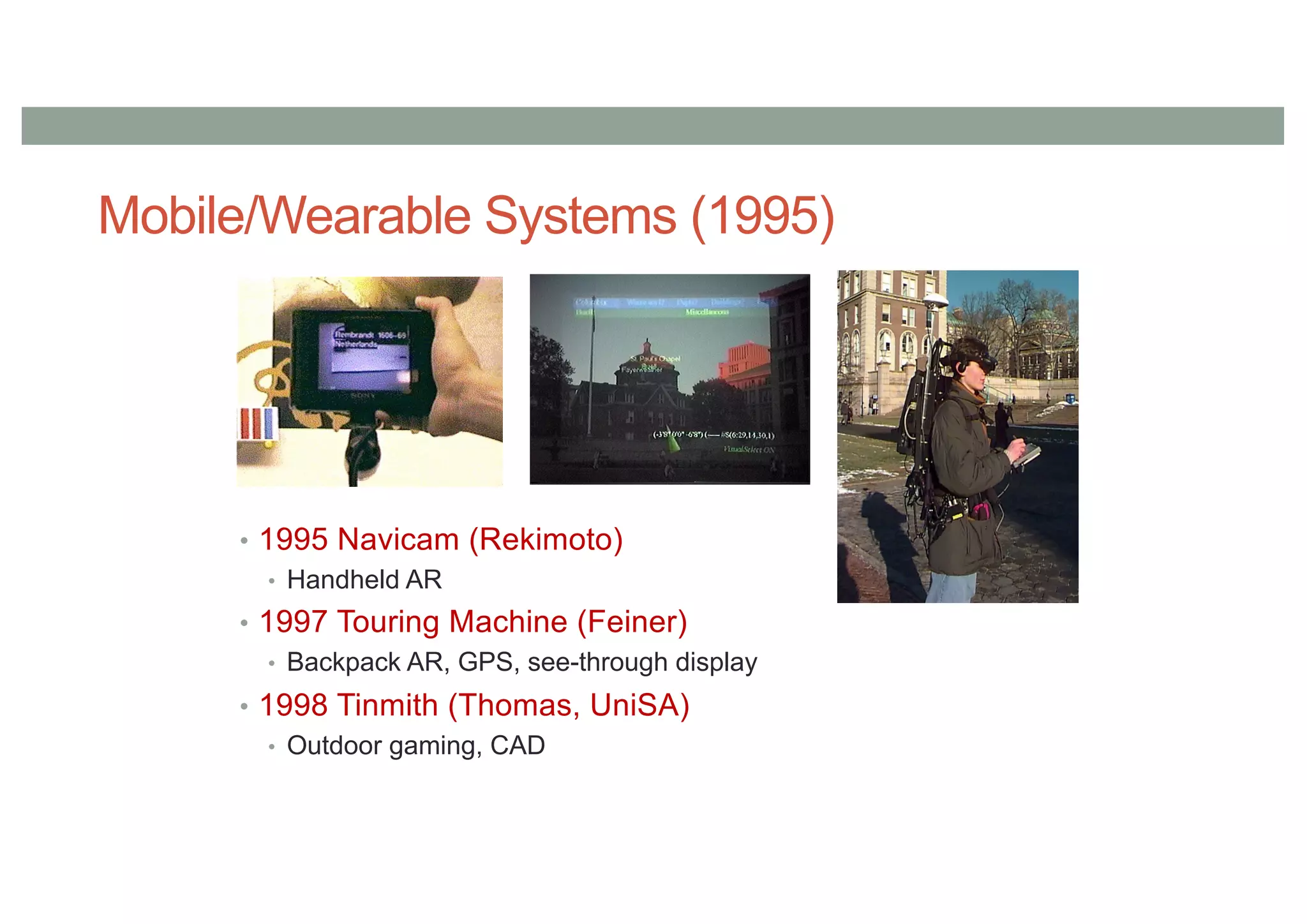

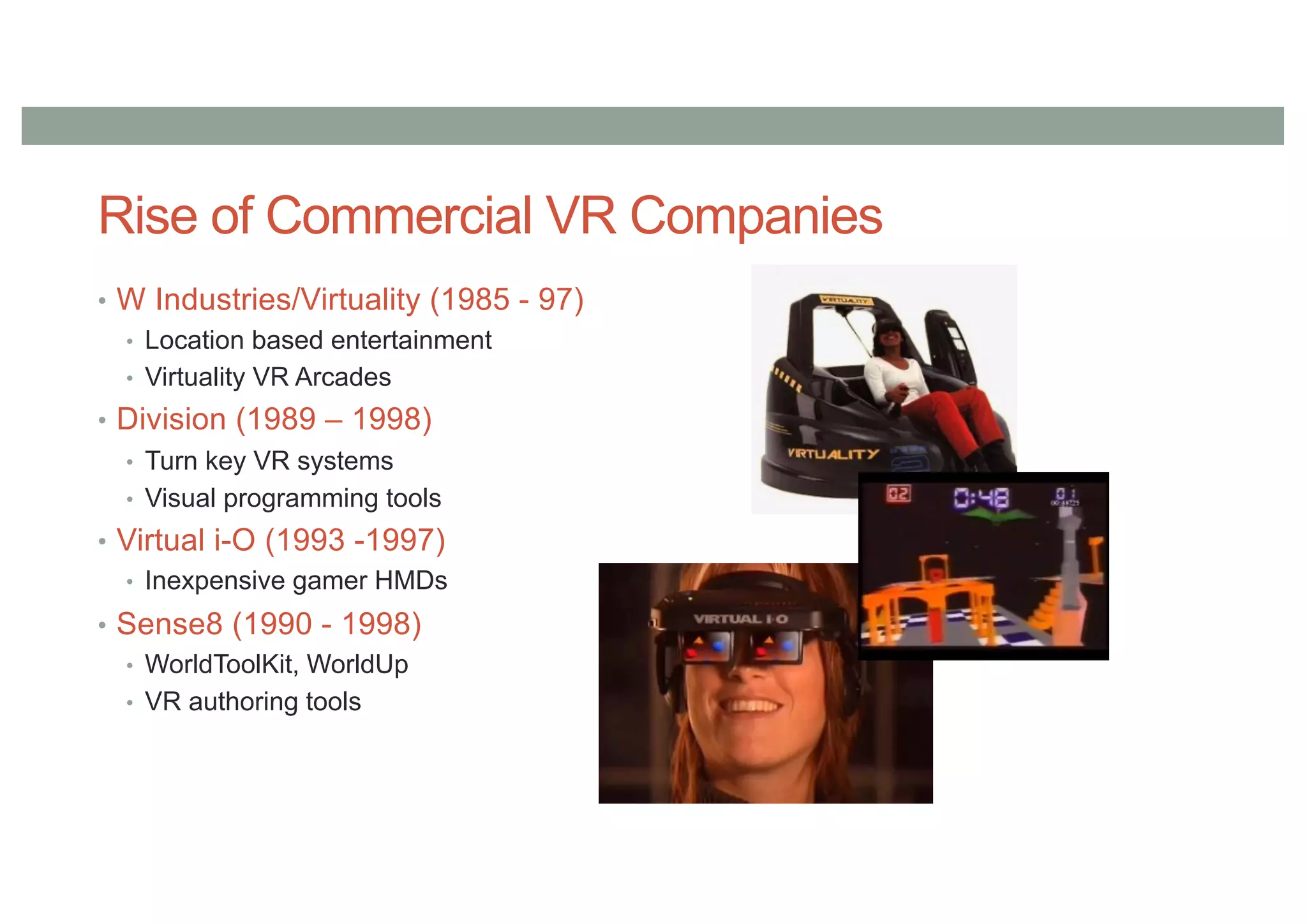

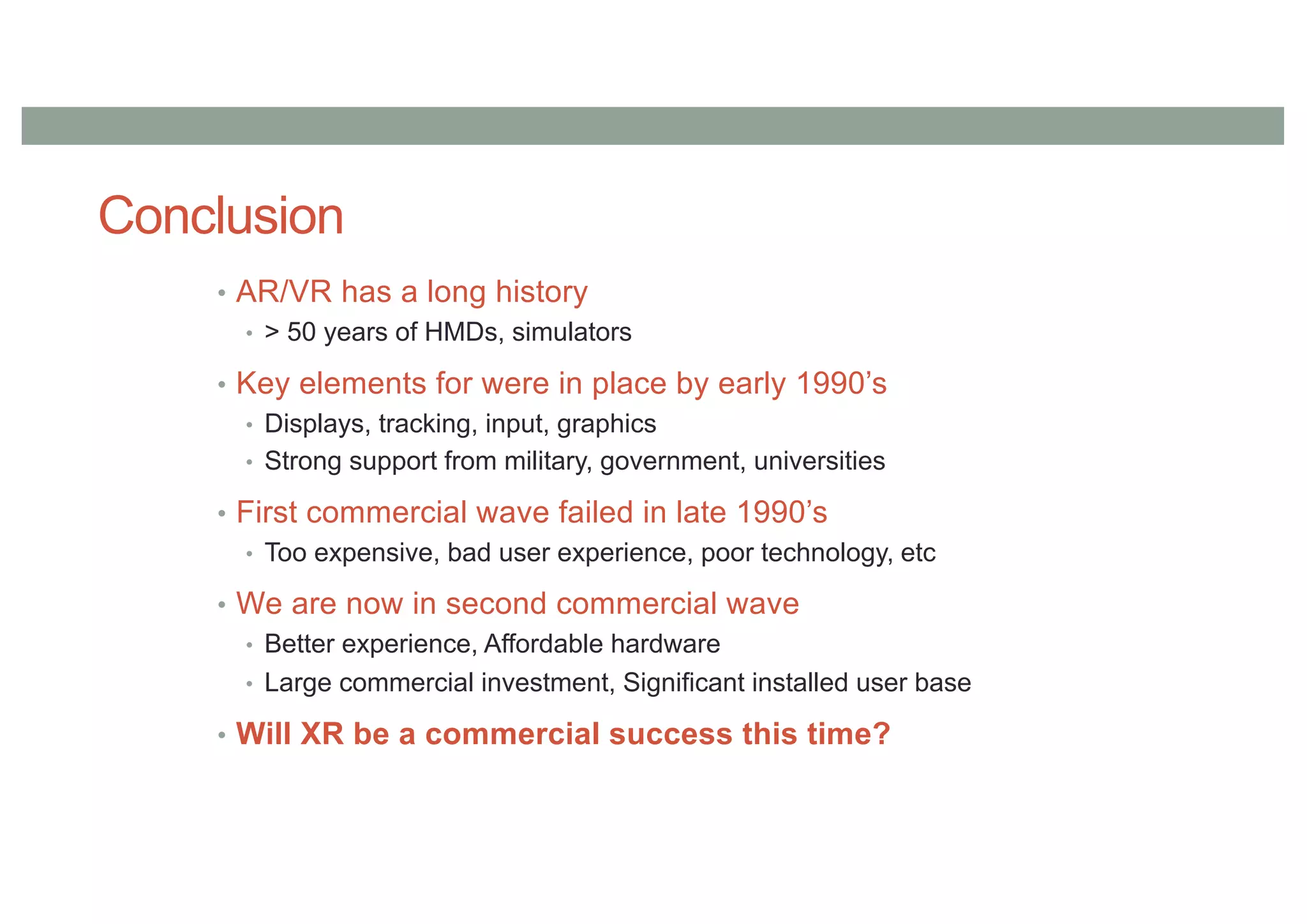

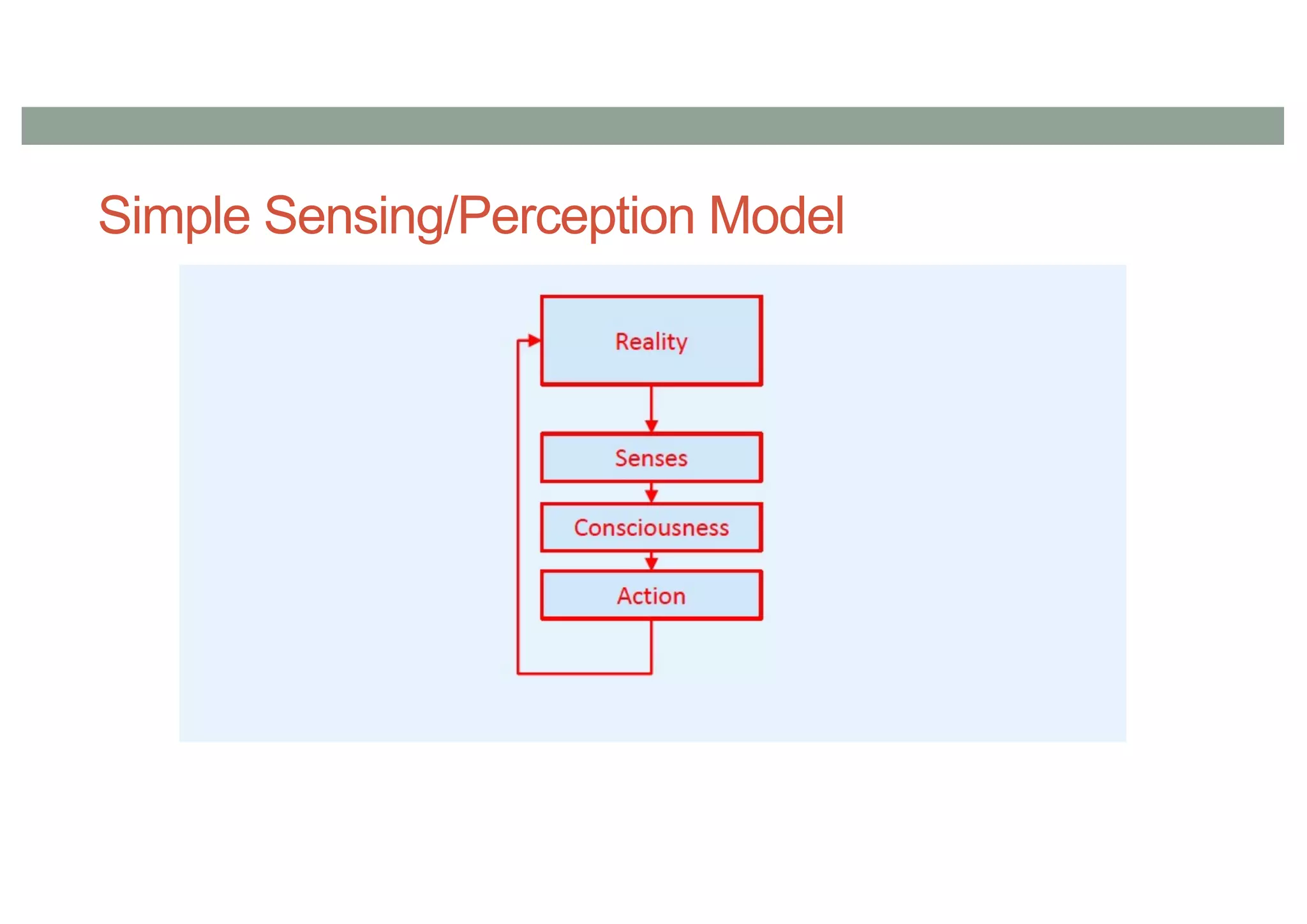

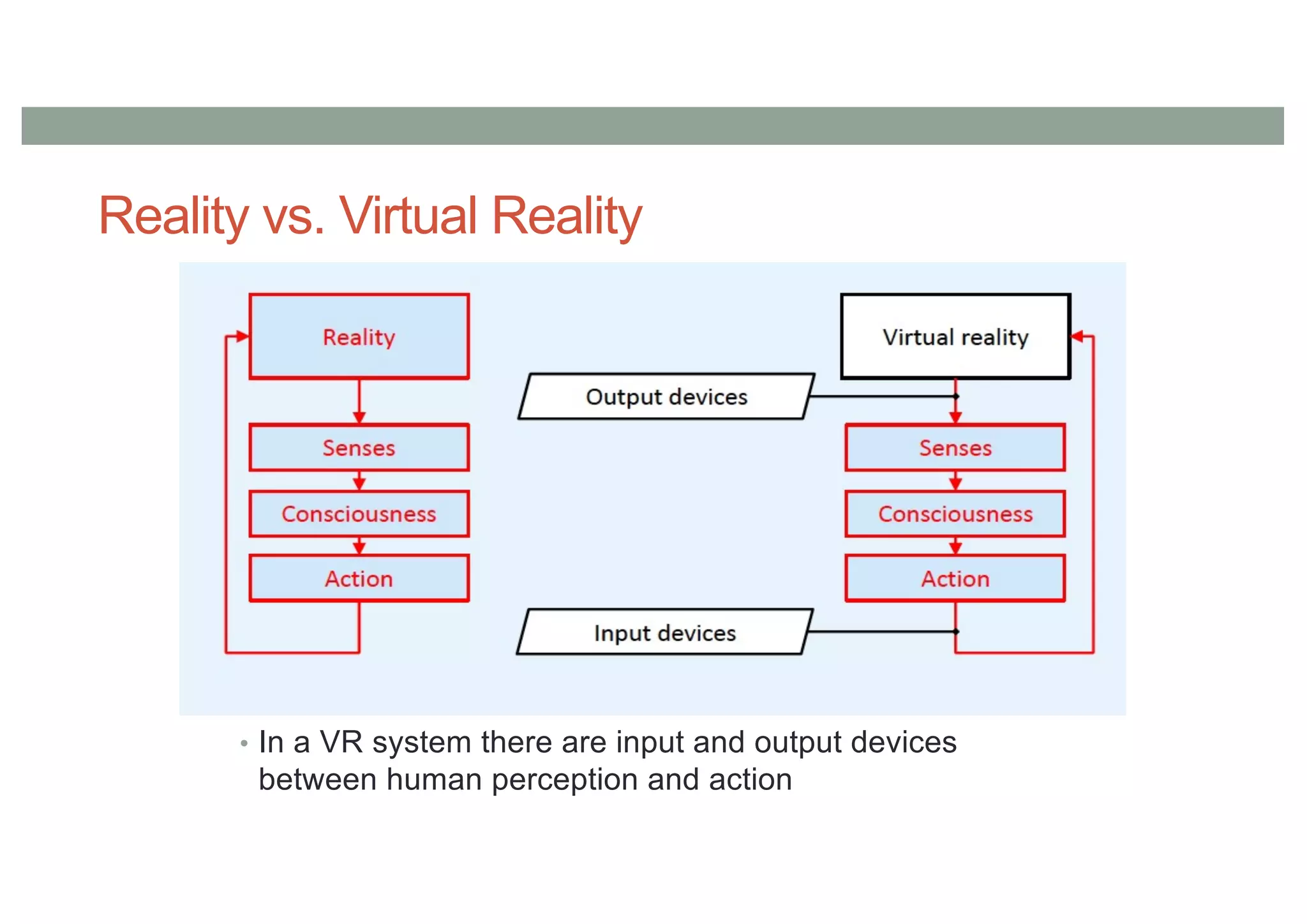

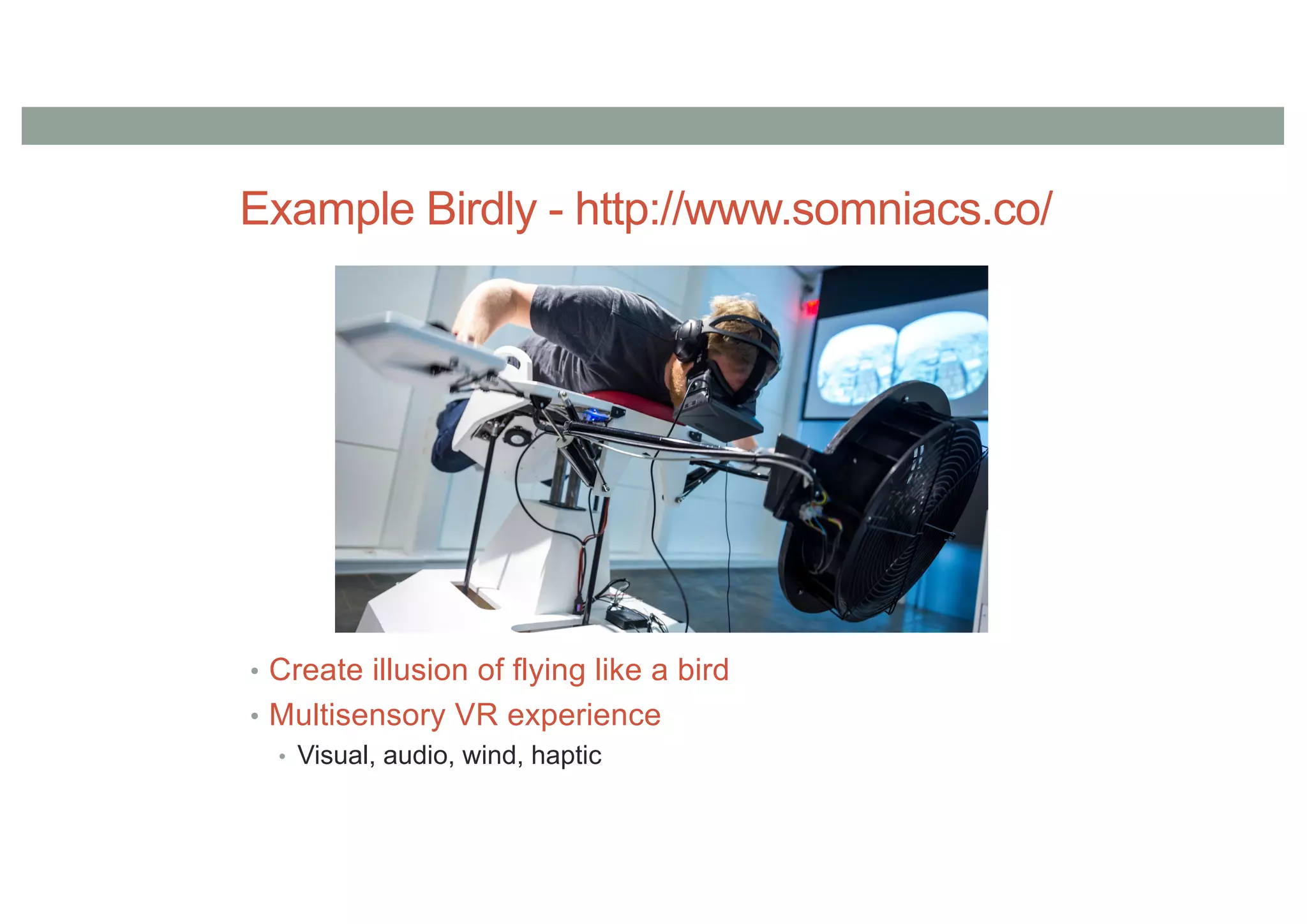

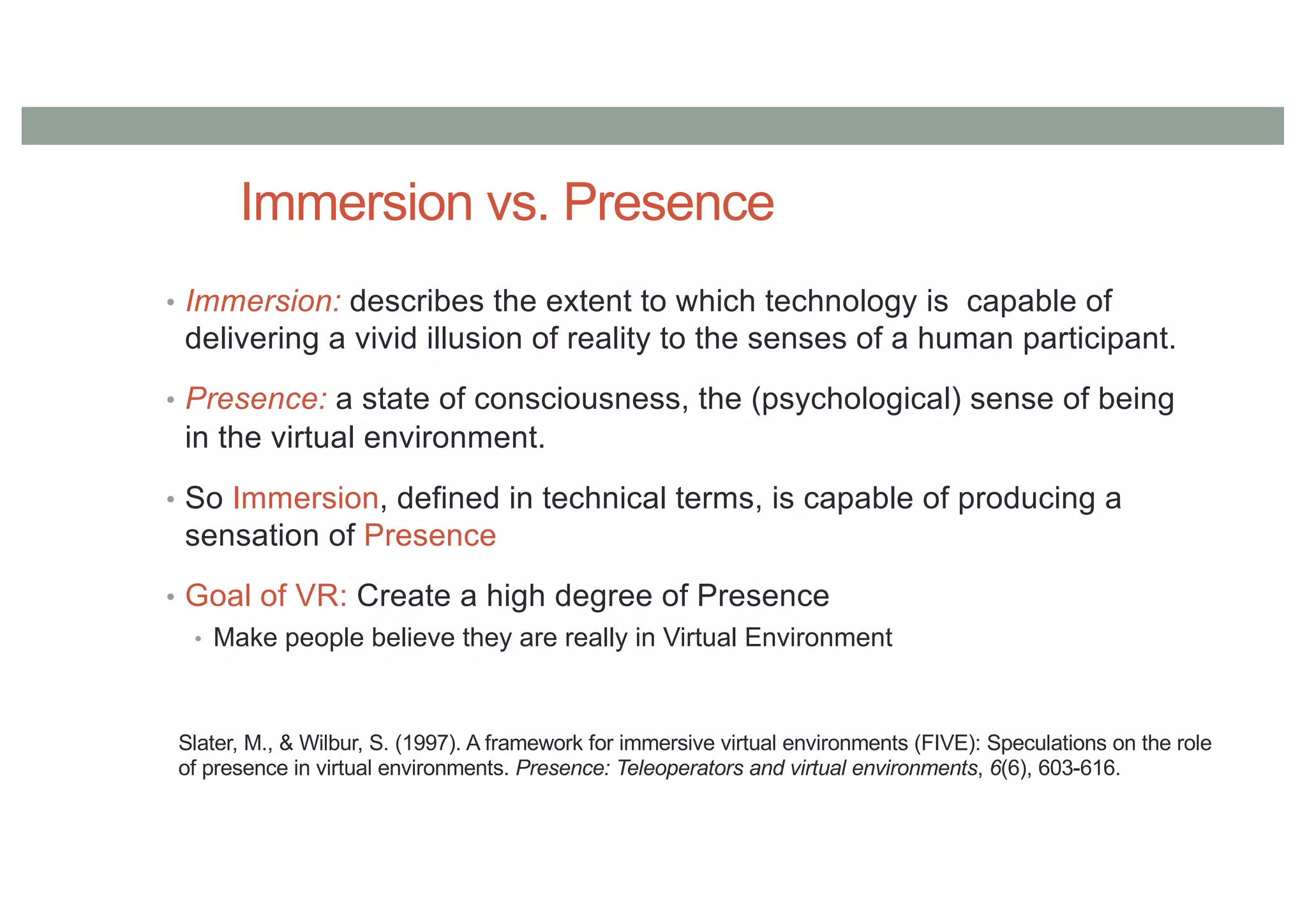

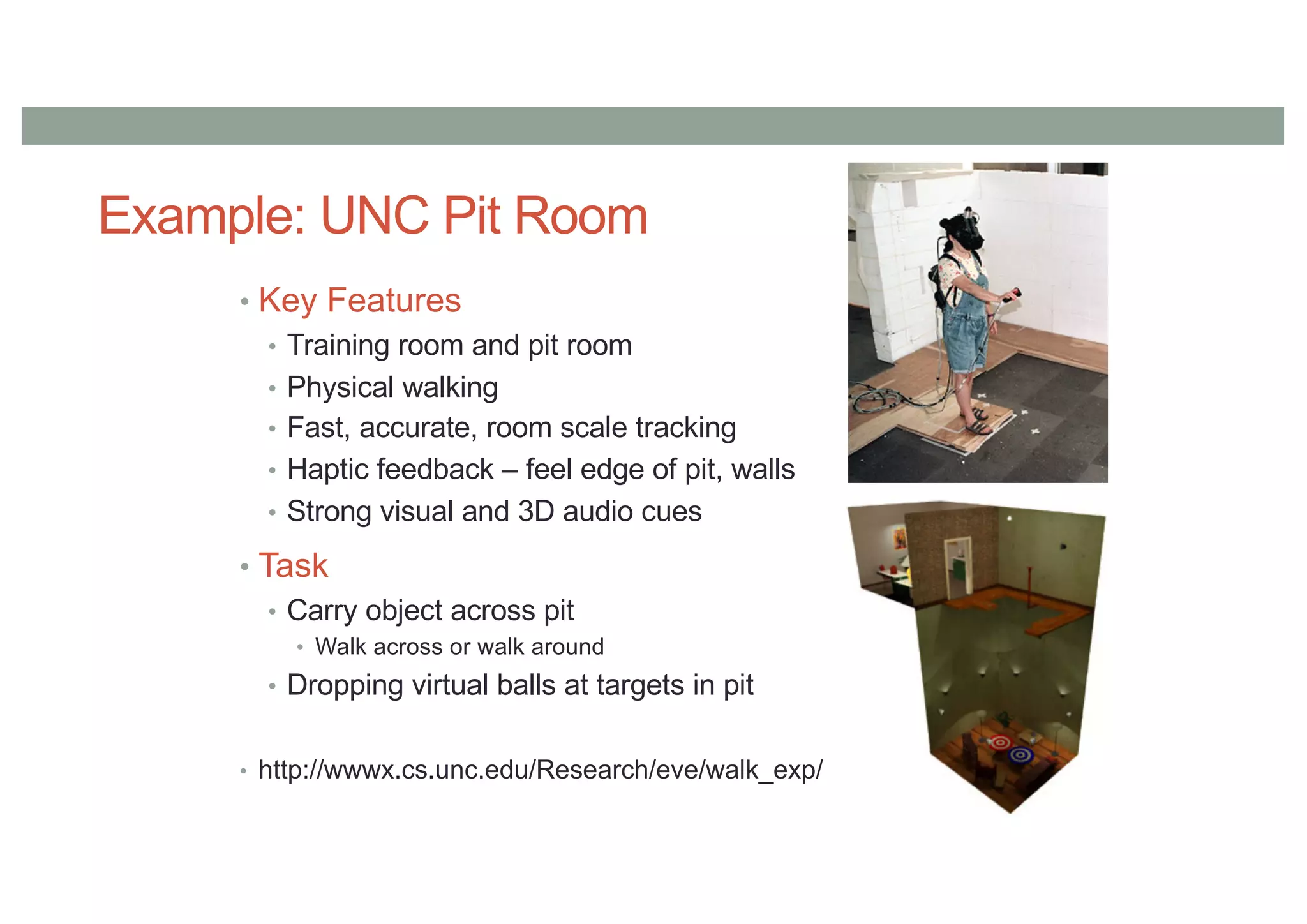

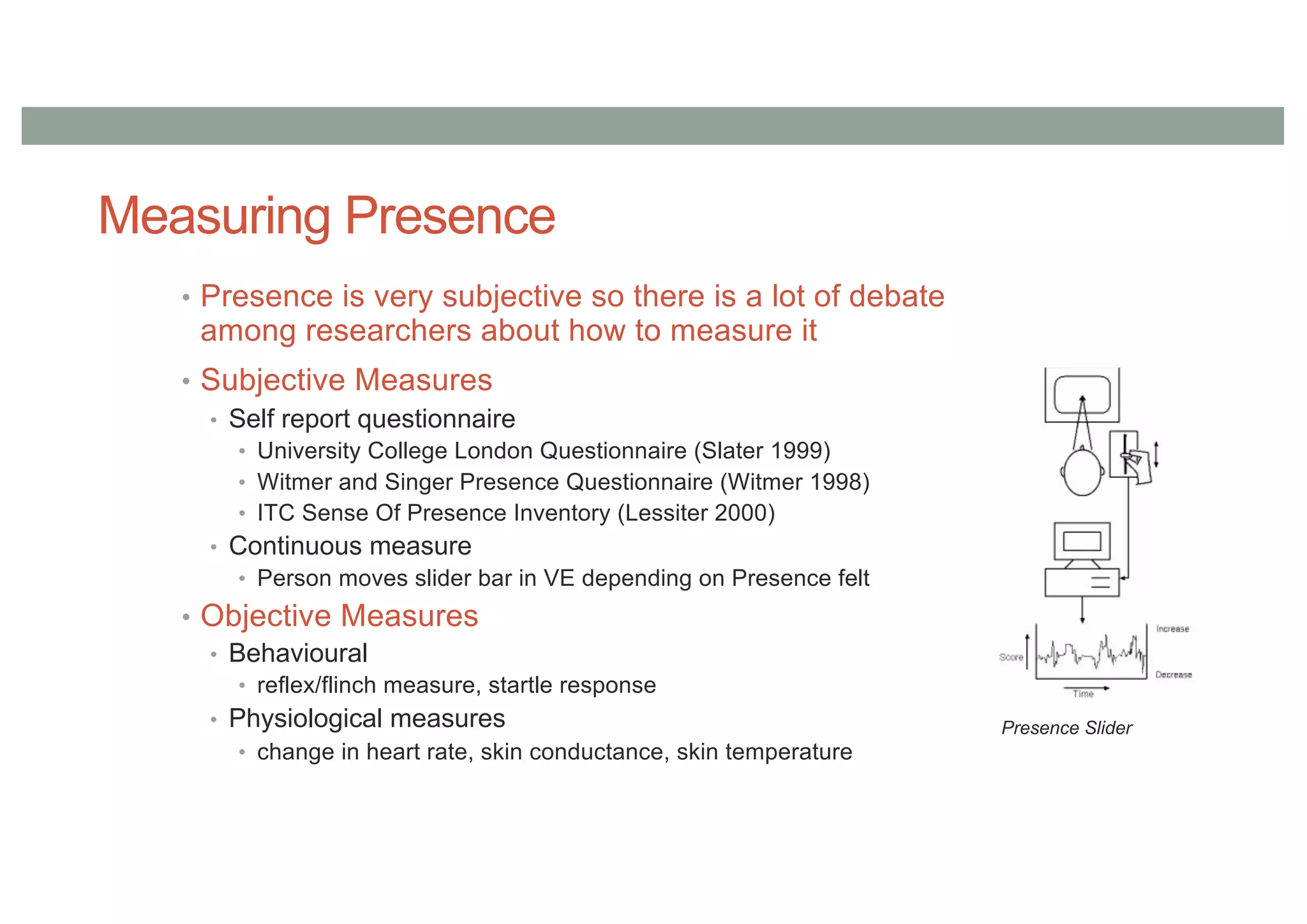

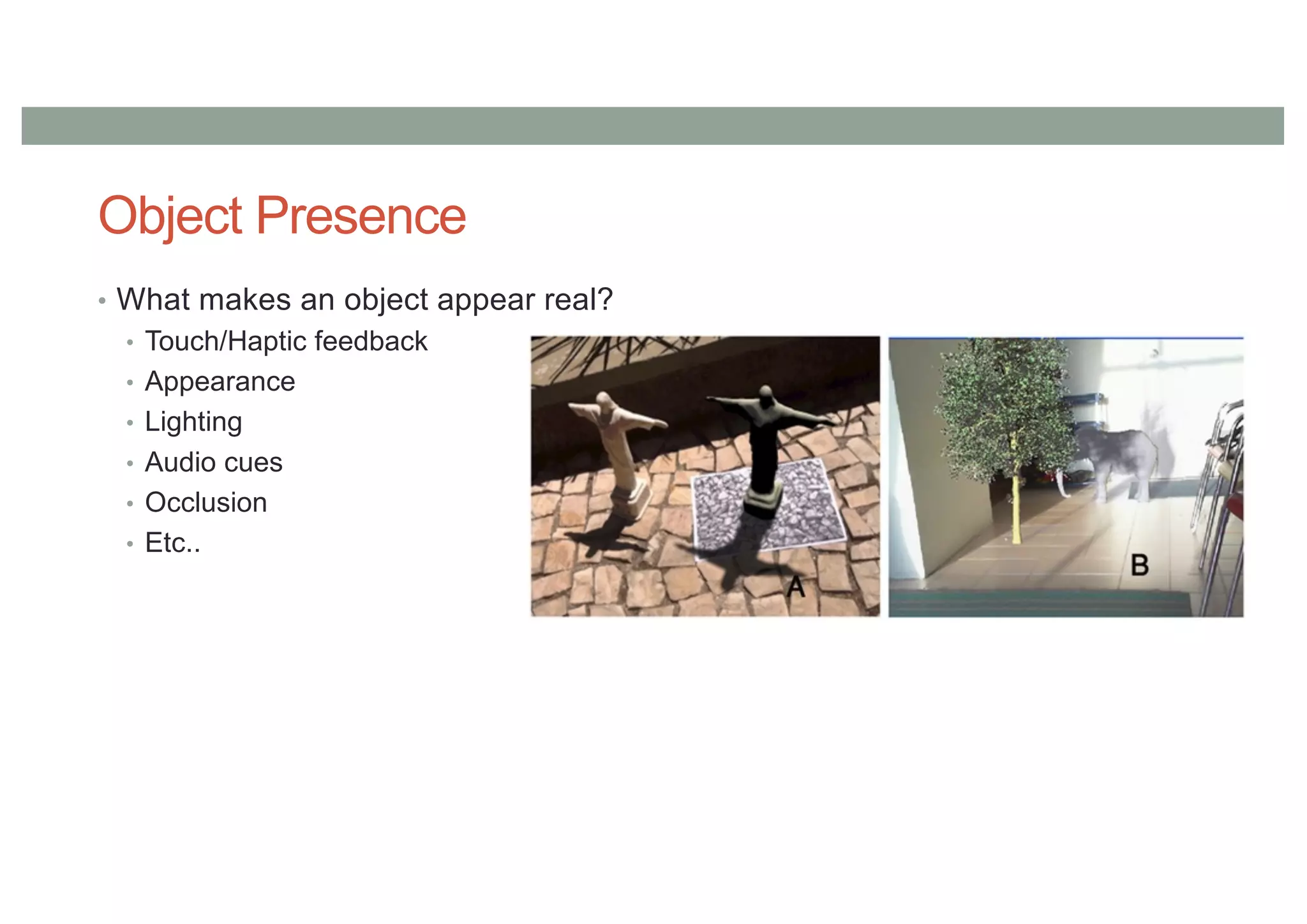

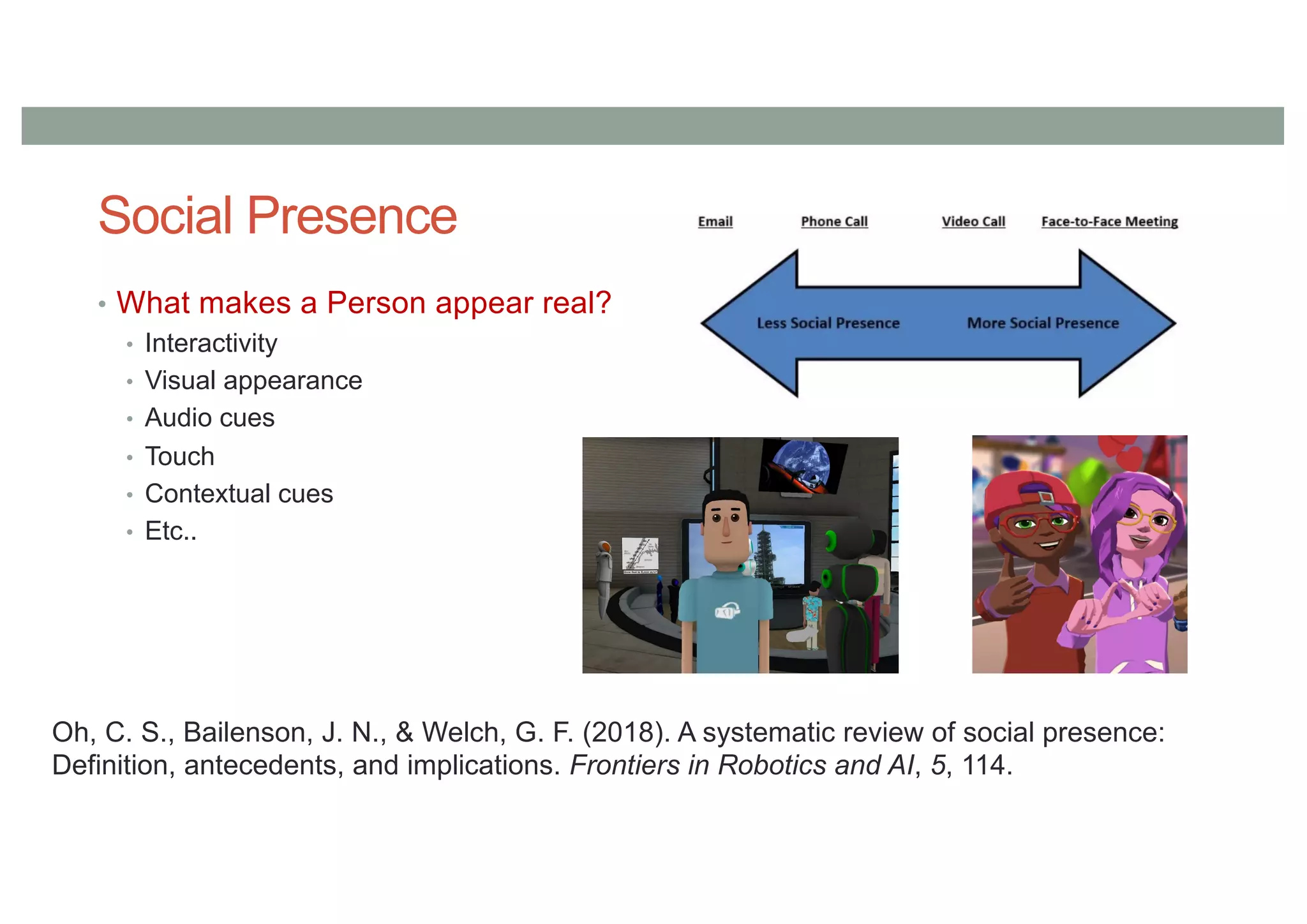

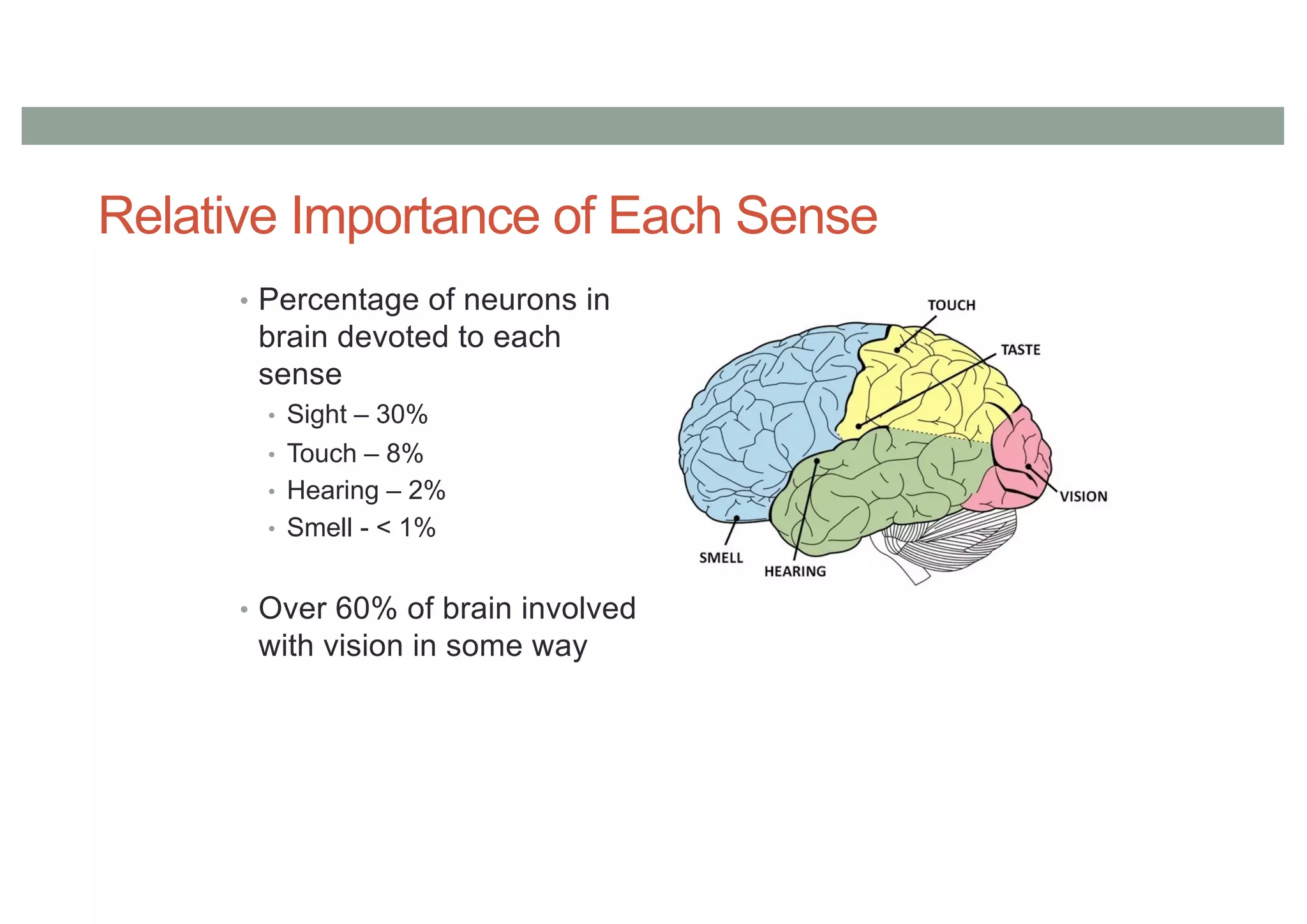

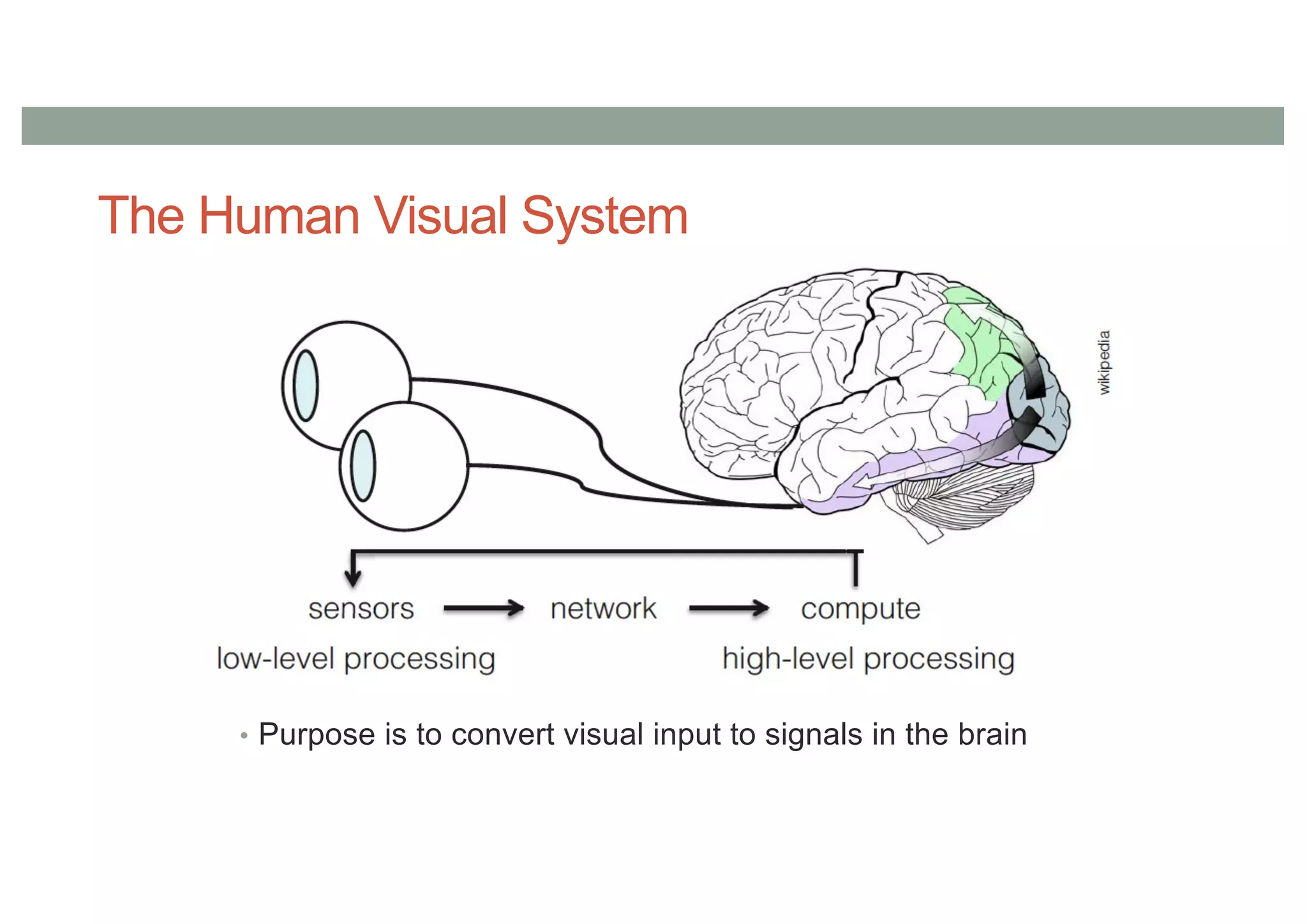

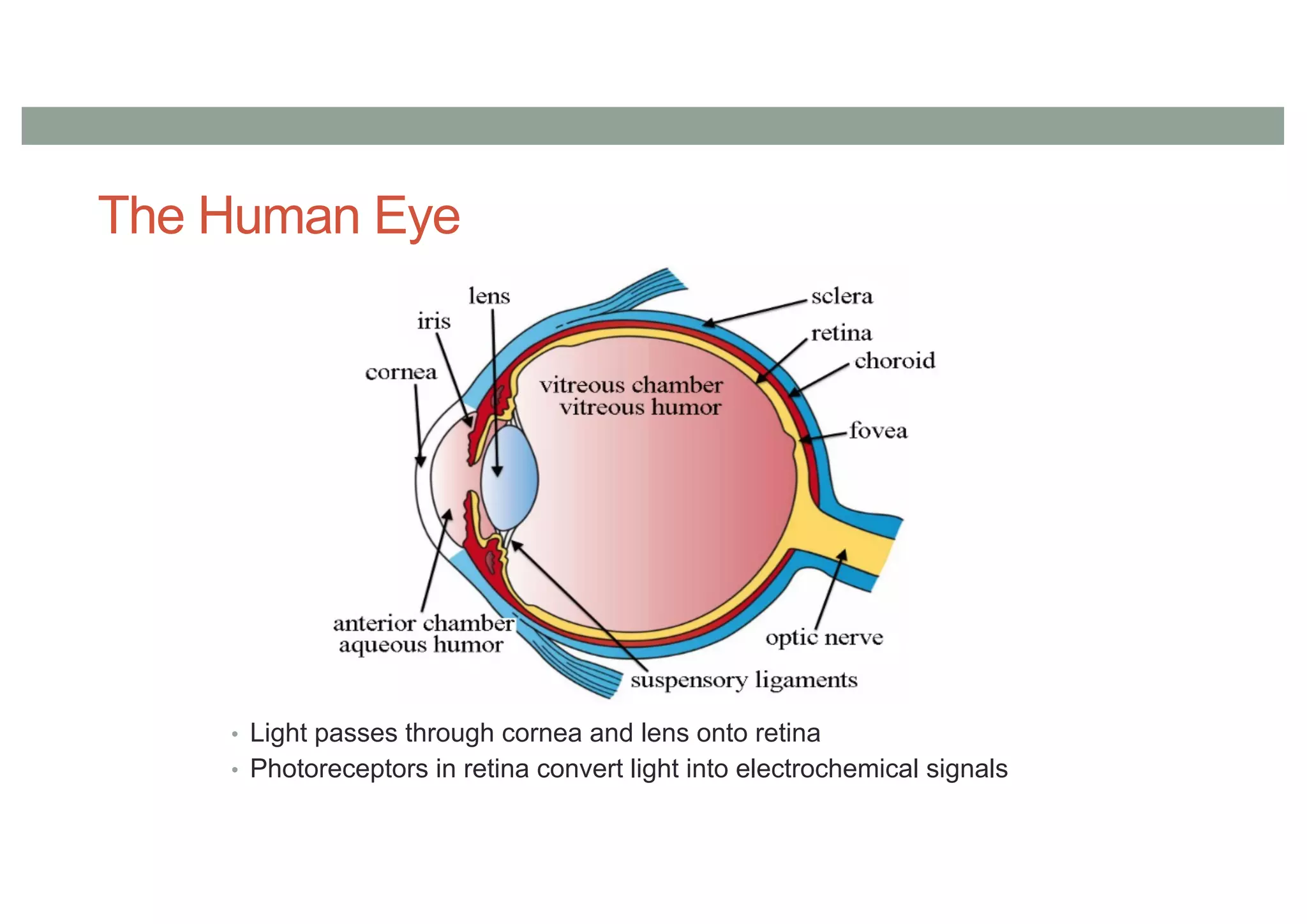

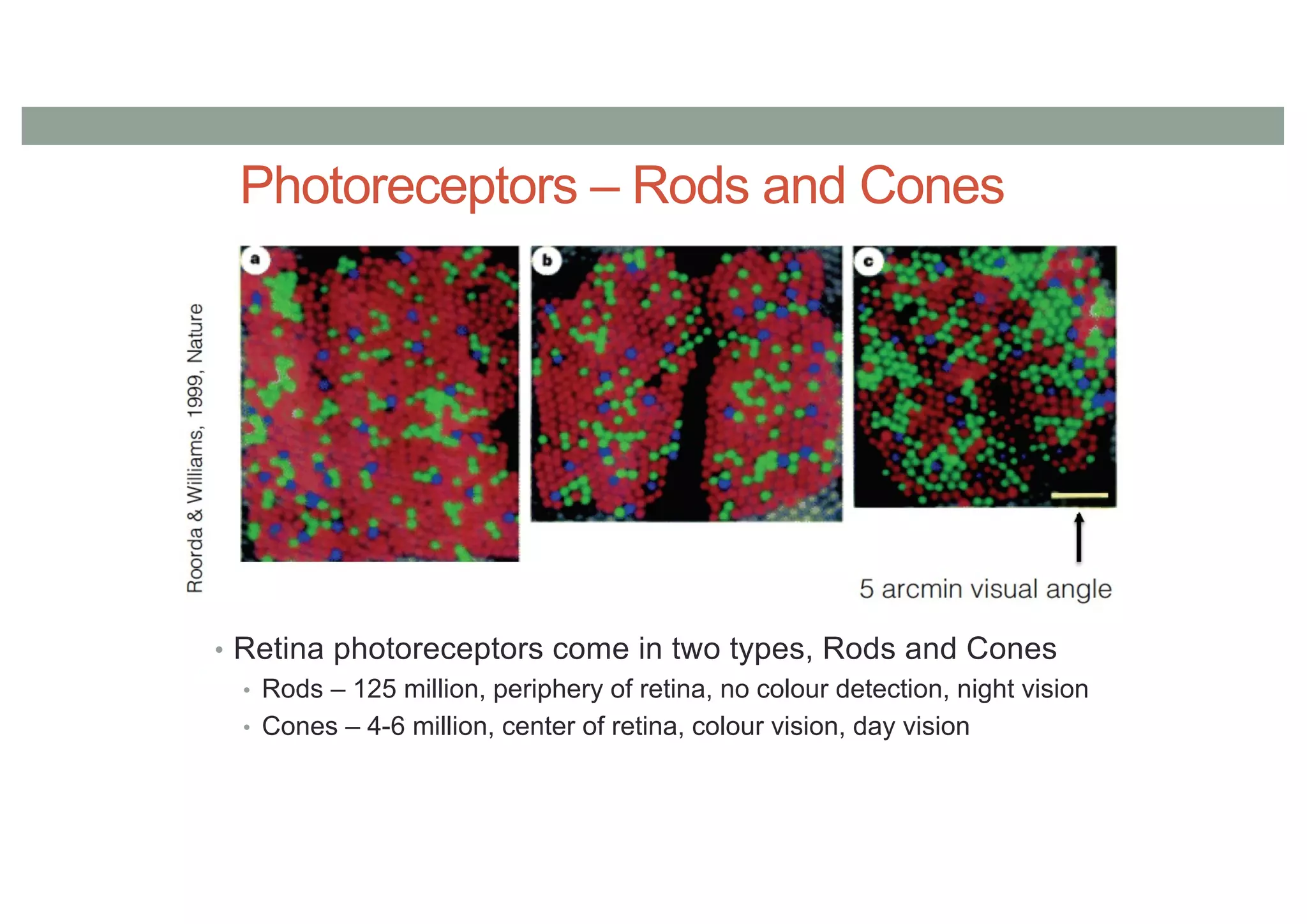

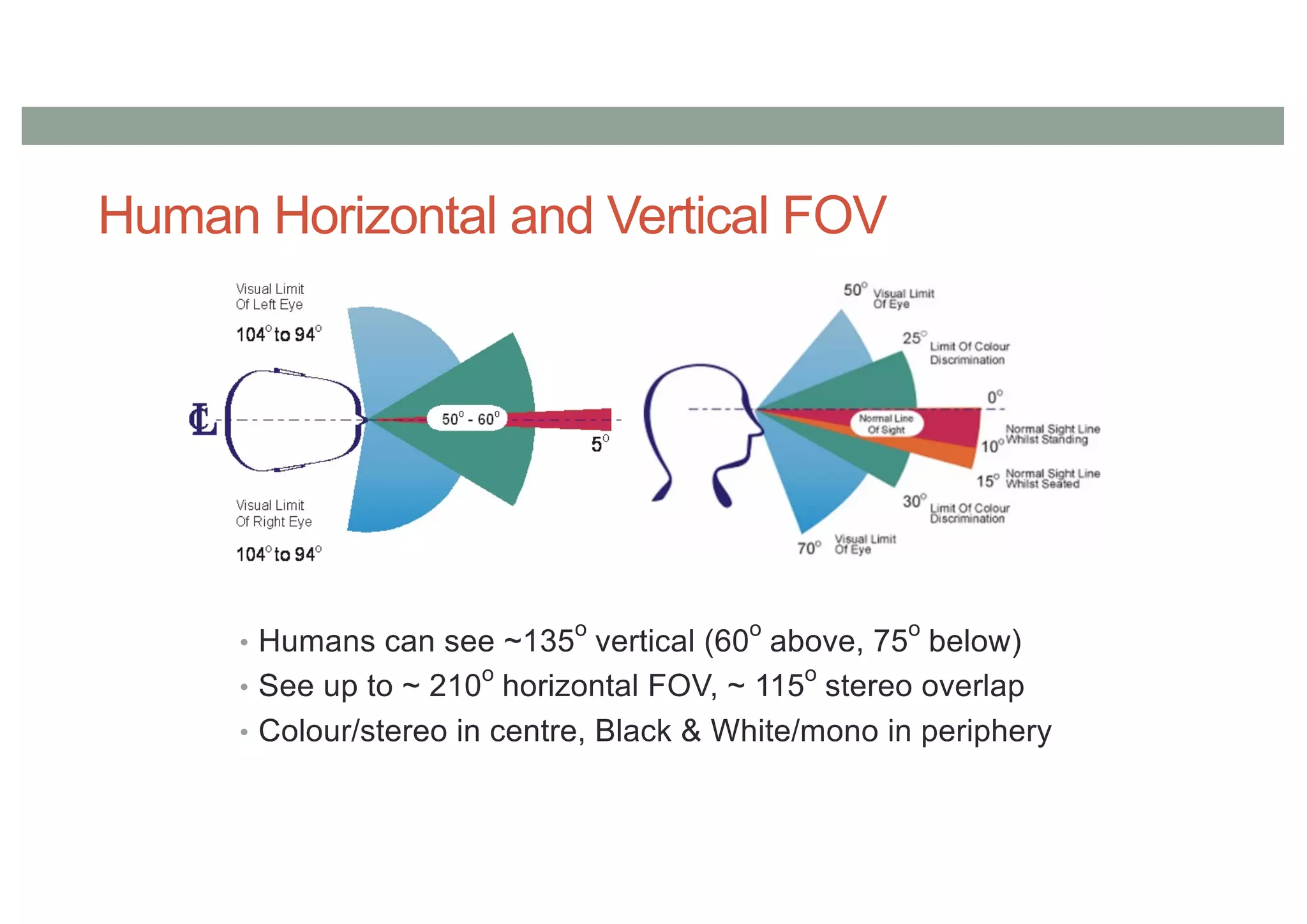

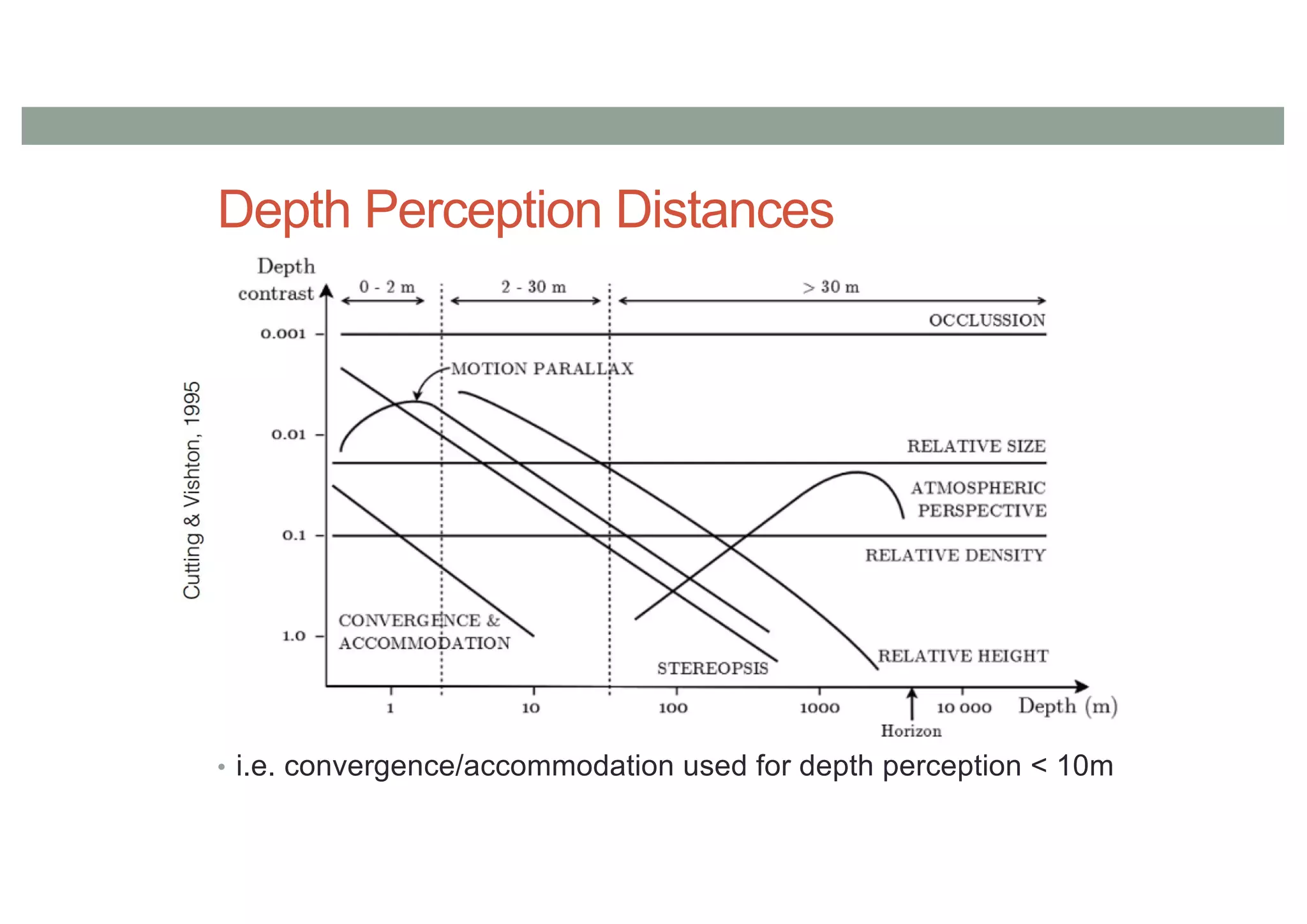

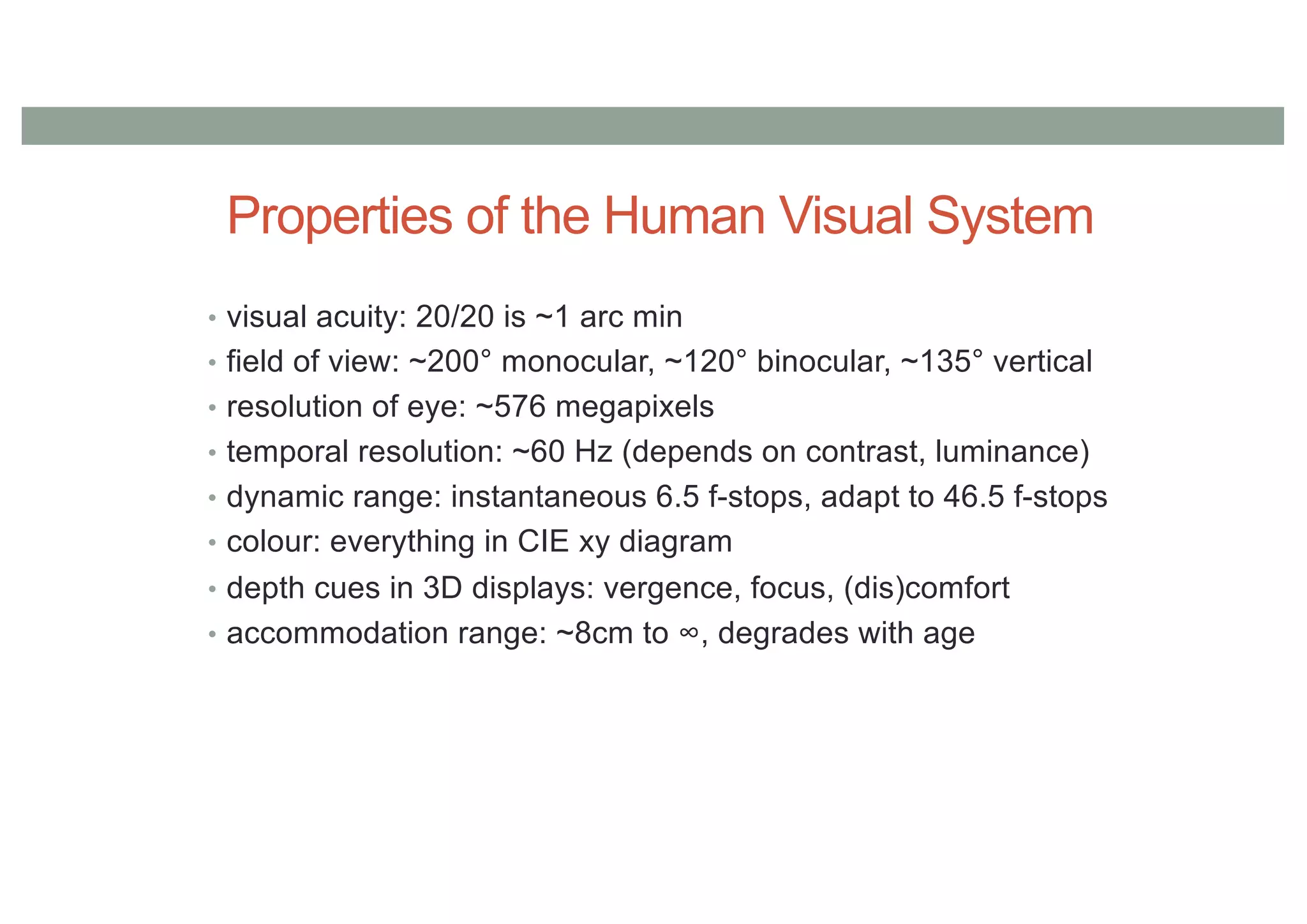

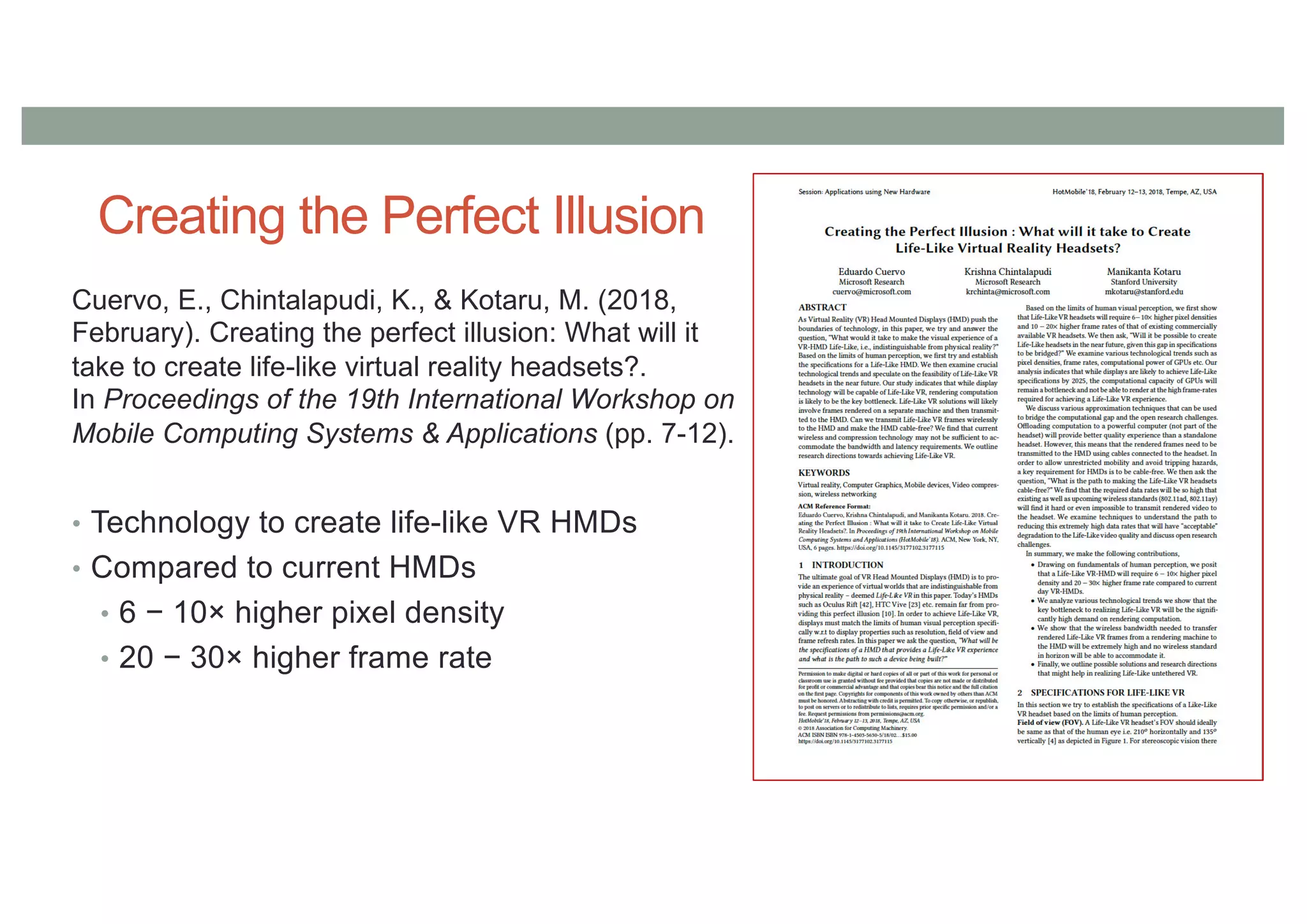

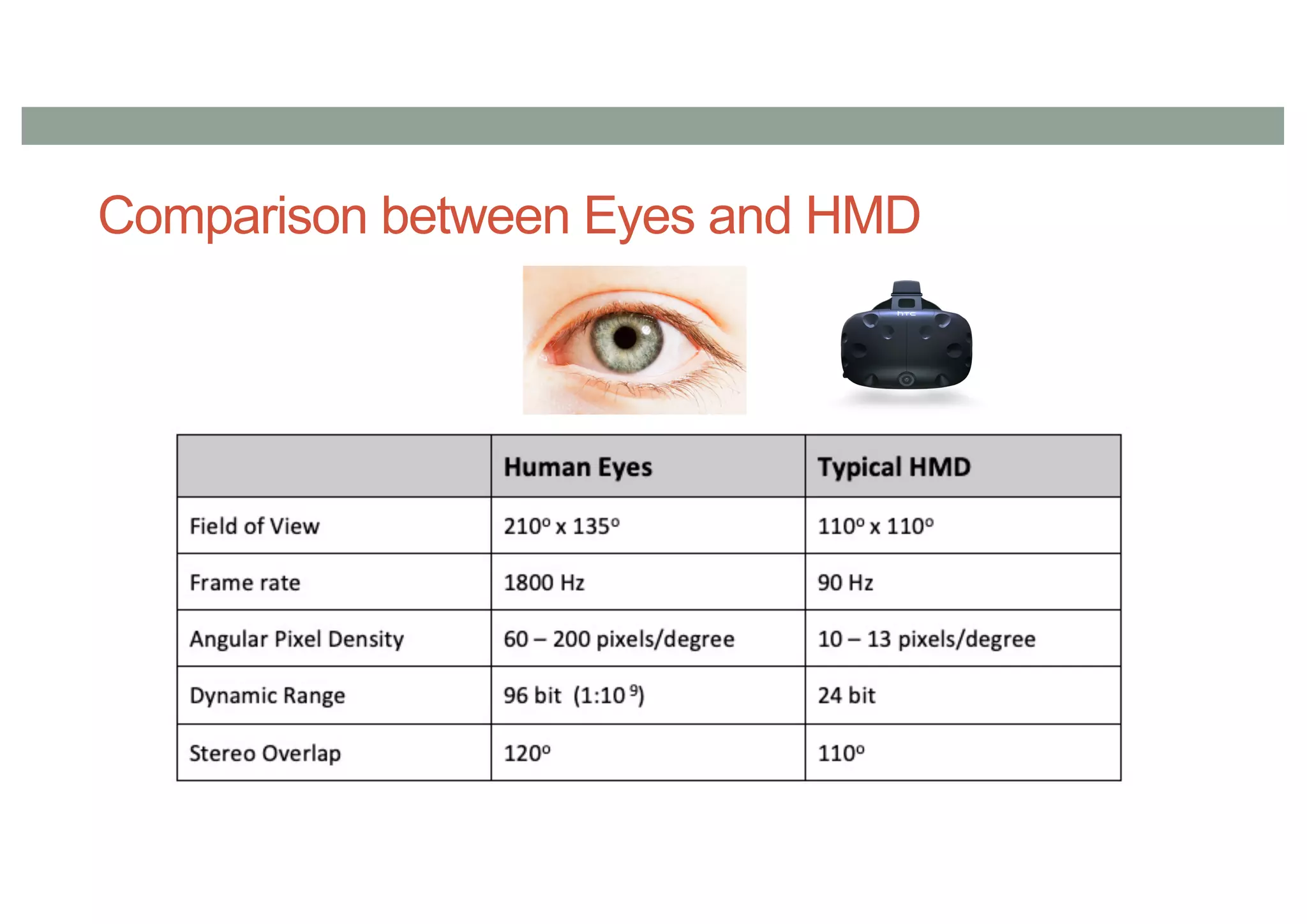

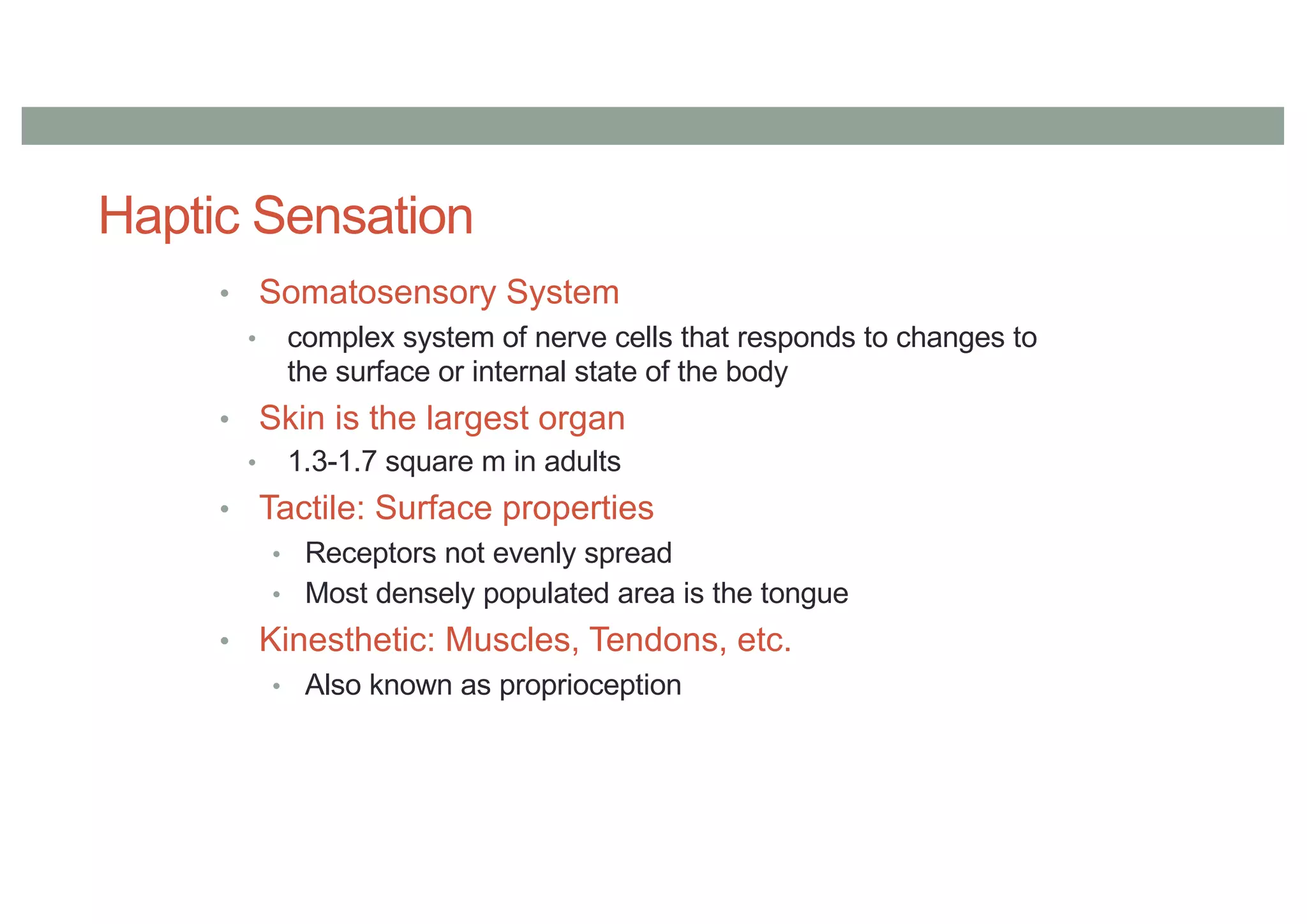

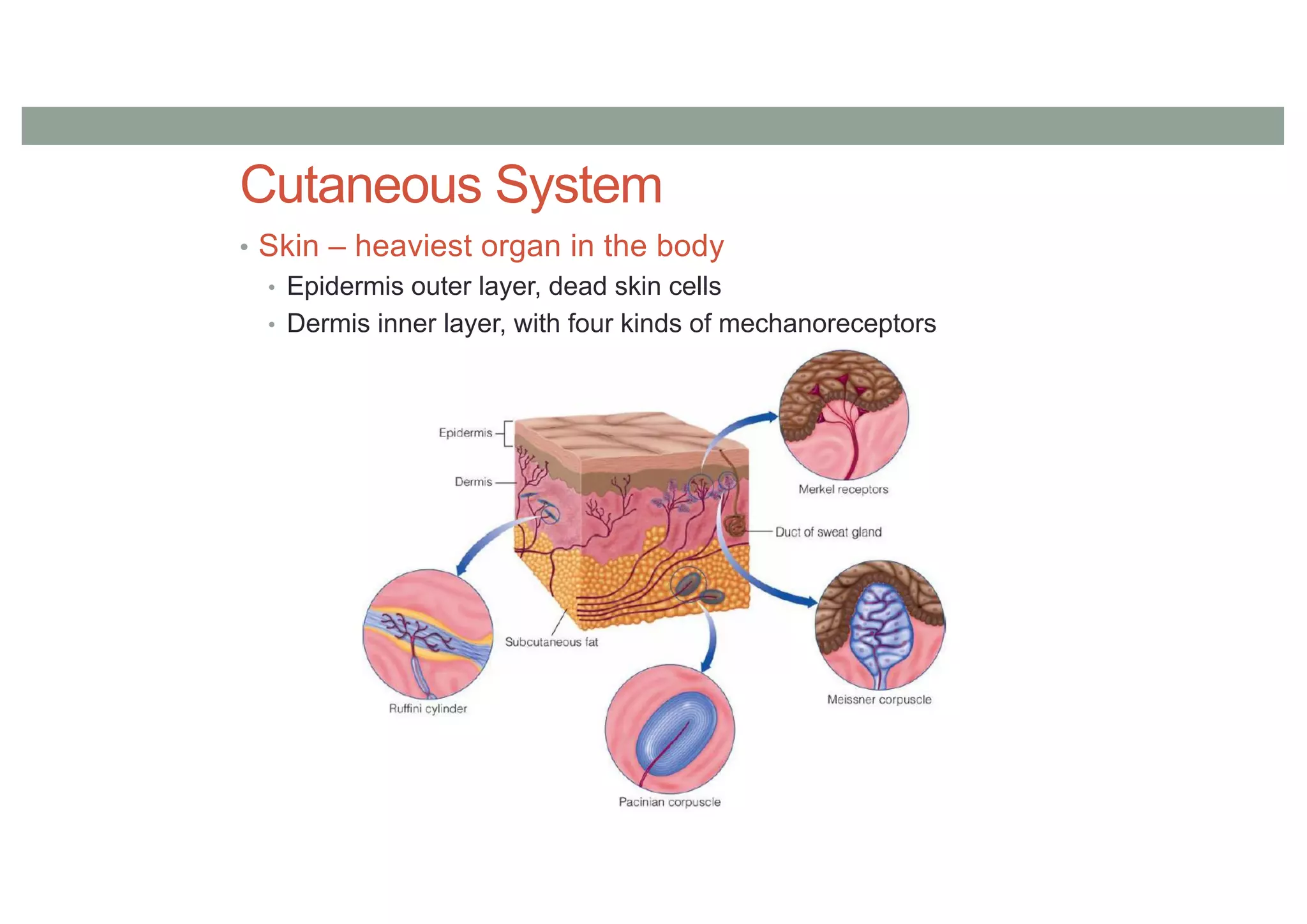

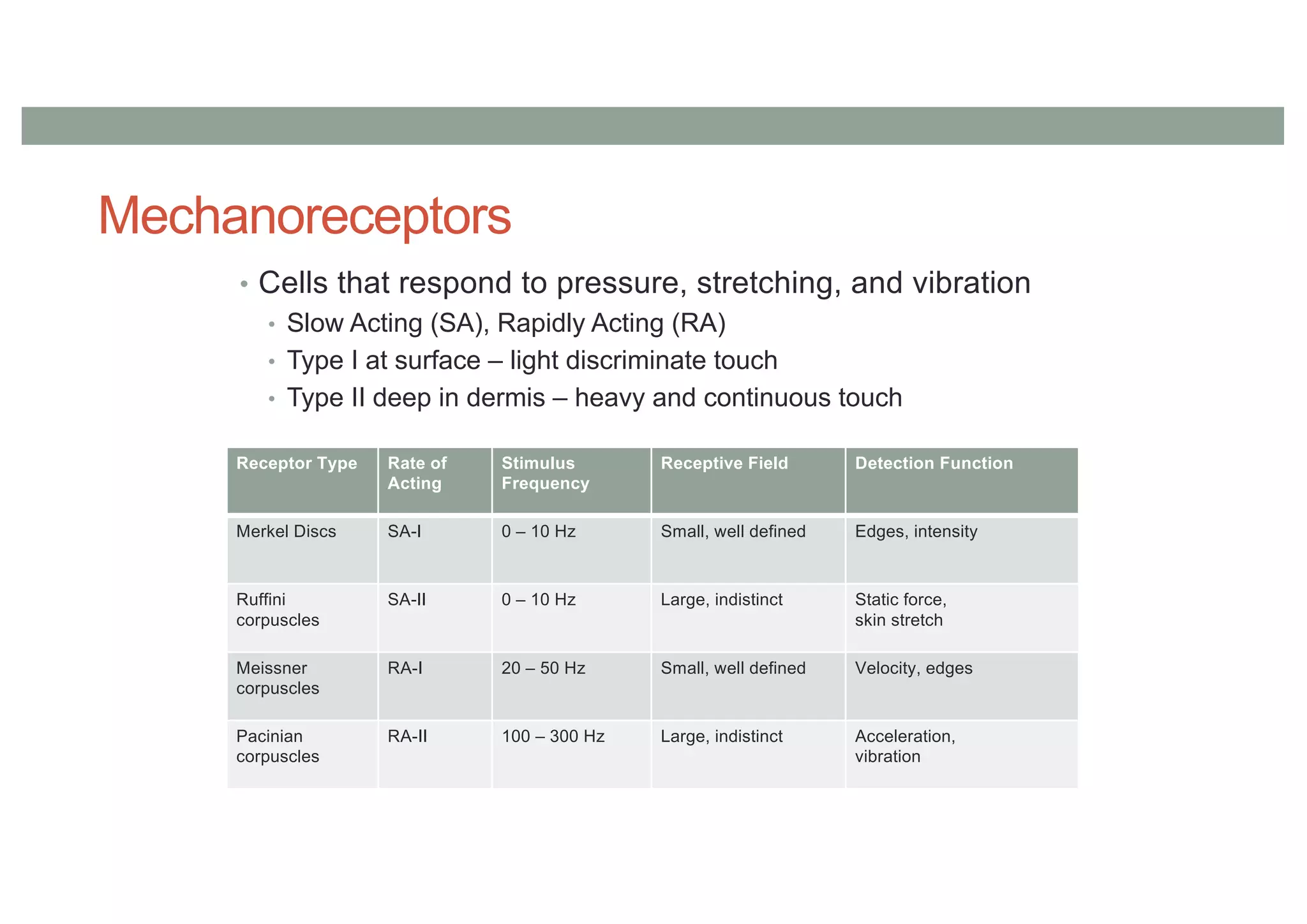

This document summarizes a lecture on perception in augmented and virtual reality. It traces the history of the "disappearing computer," from room-sized machines to handheld devices. It reviews the key concepts of augmented reality, virtual reality, and mixed reality and places each on Milgram's reality-virtuality continuum. It then explains how we perceive reality through our senses and how virtual reality aims to create a convincing illusion of that reality. Finally, it covers the factors that influence the sense of presence, such as immersion, interaction, and realism.