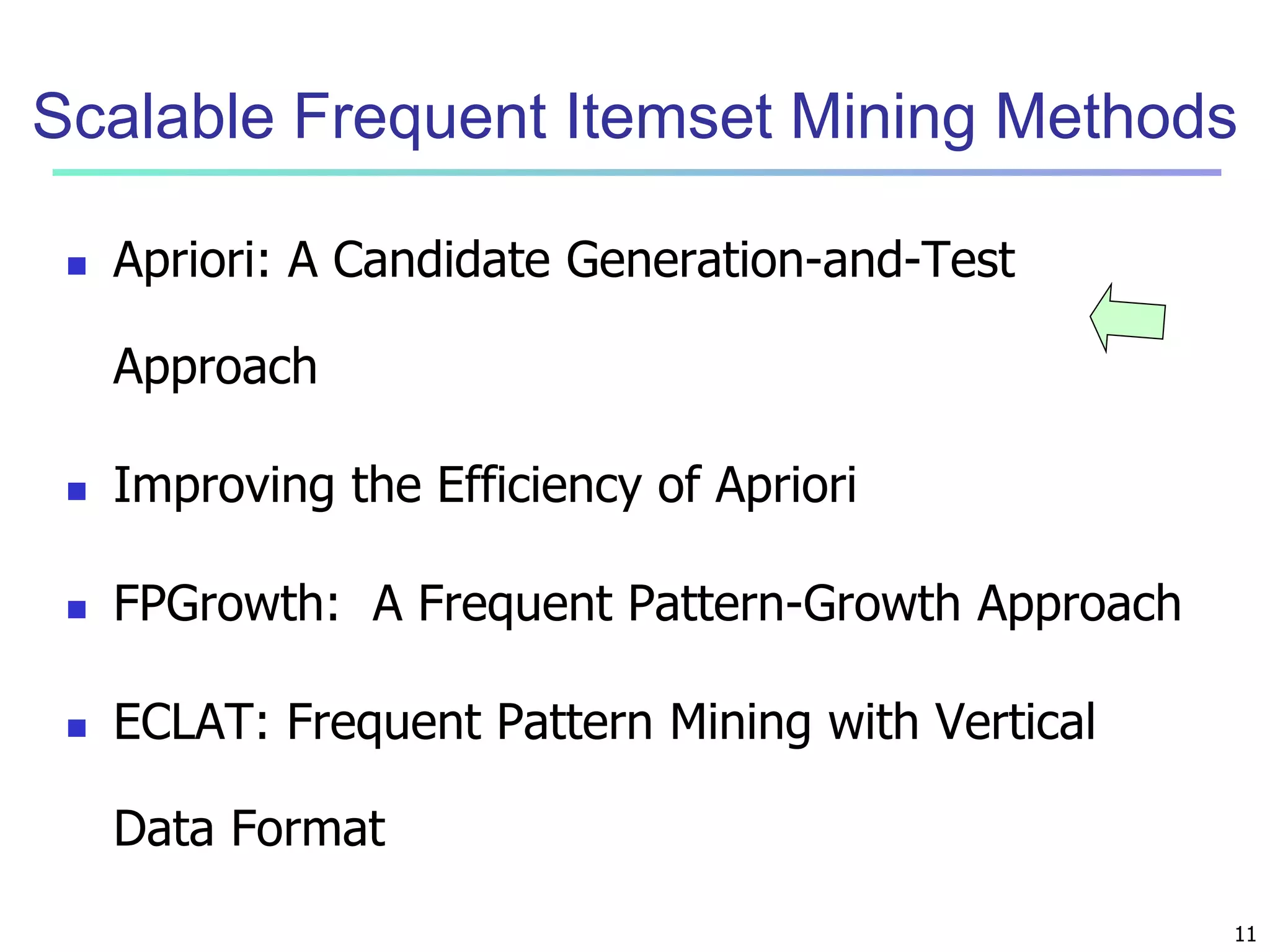

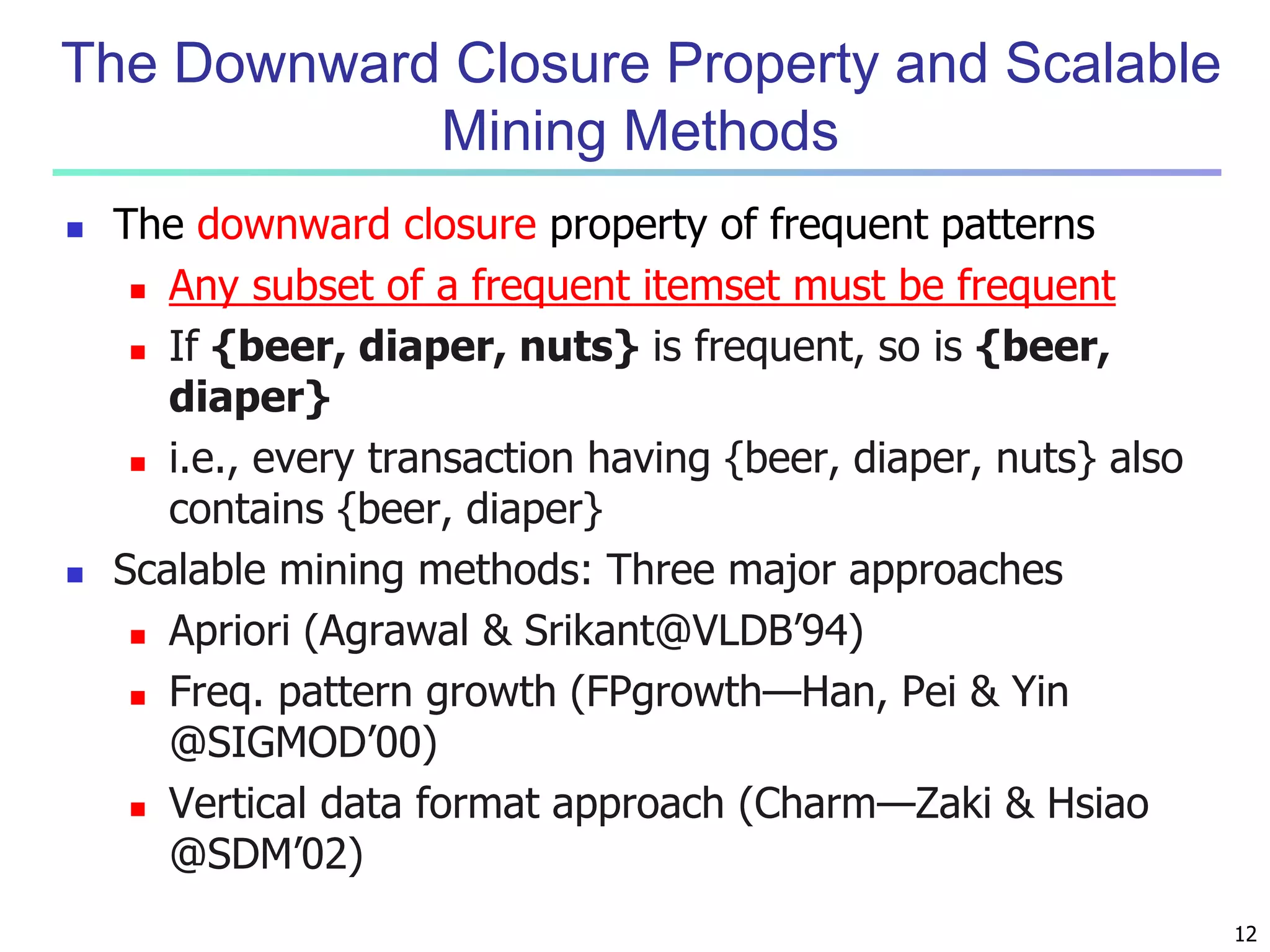

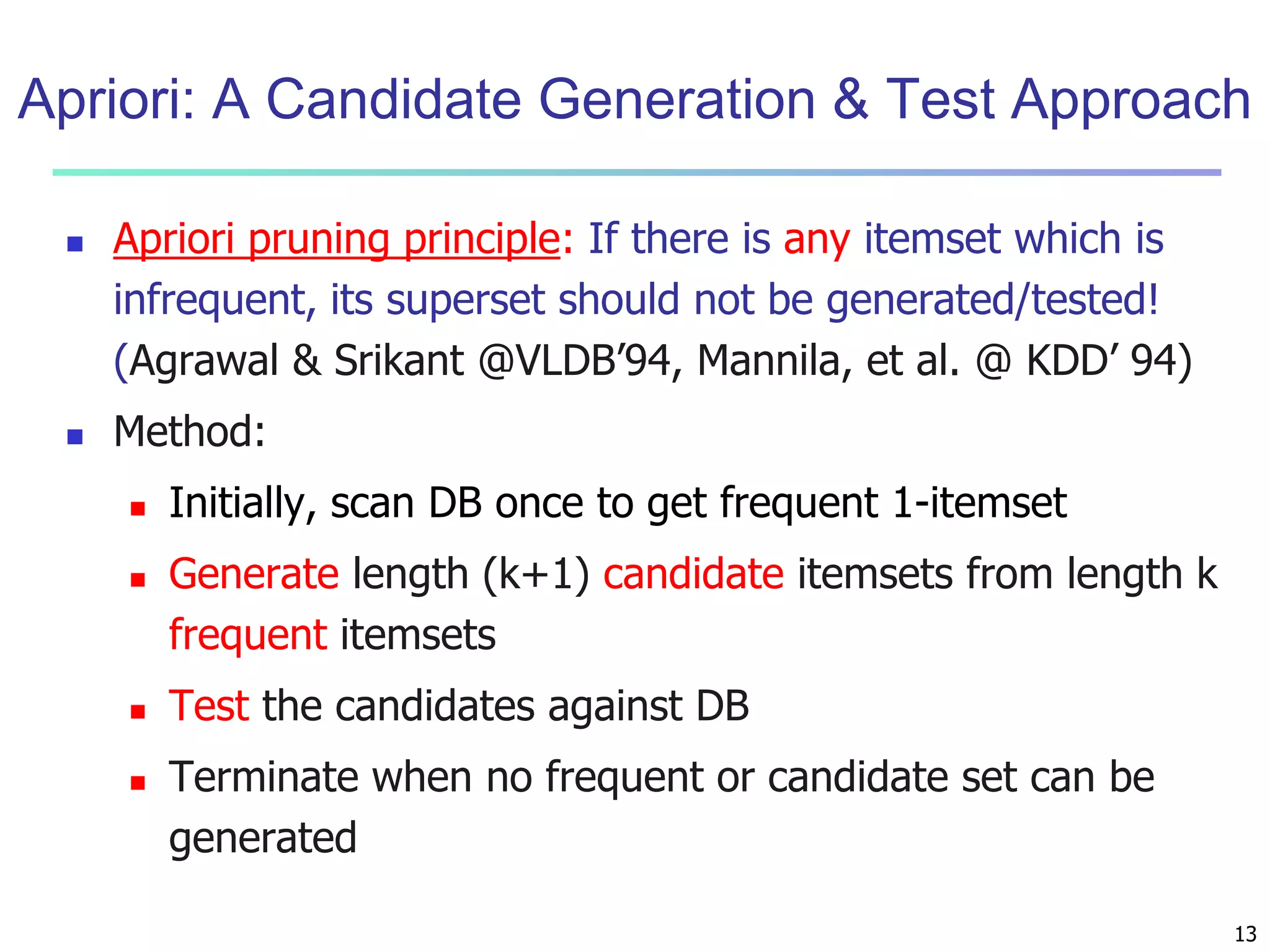

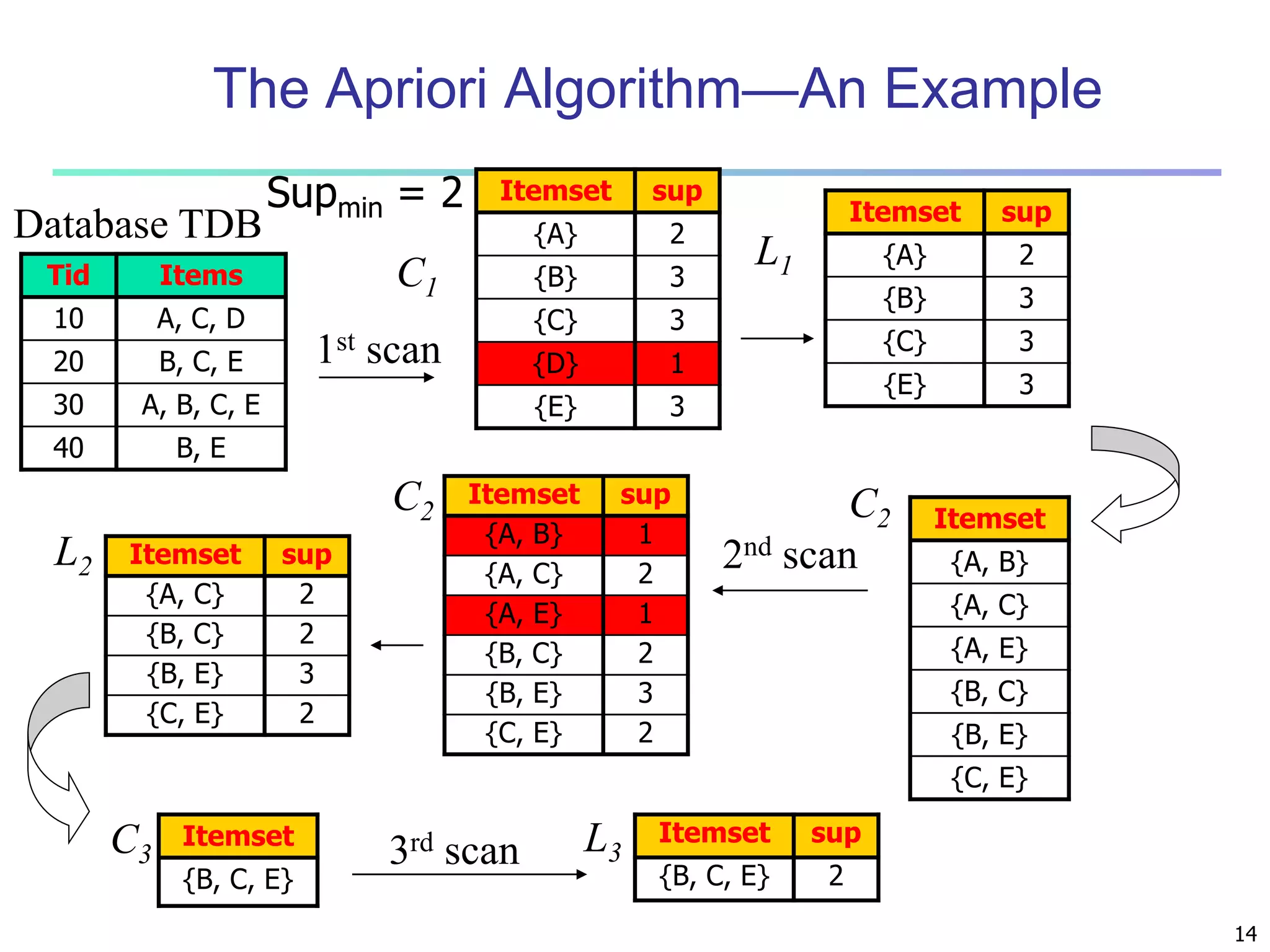

Chapter 6 of 'Data Mining: Concepts and Techniques' covers the mining of frequent patterns, associations, and correlations. It introduces key concepts such as frequent itemsets, support, association rules, and scalable mining methods like the Apriori and FP-Growth algorithms. The chapter emphasizes the importance of frequent pattern analysis in revealing inherent regularities in data and driving various applications, from market analysis to bioinformatics.

![4

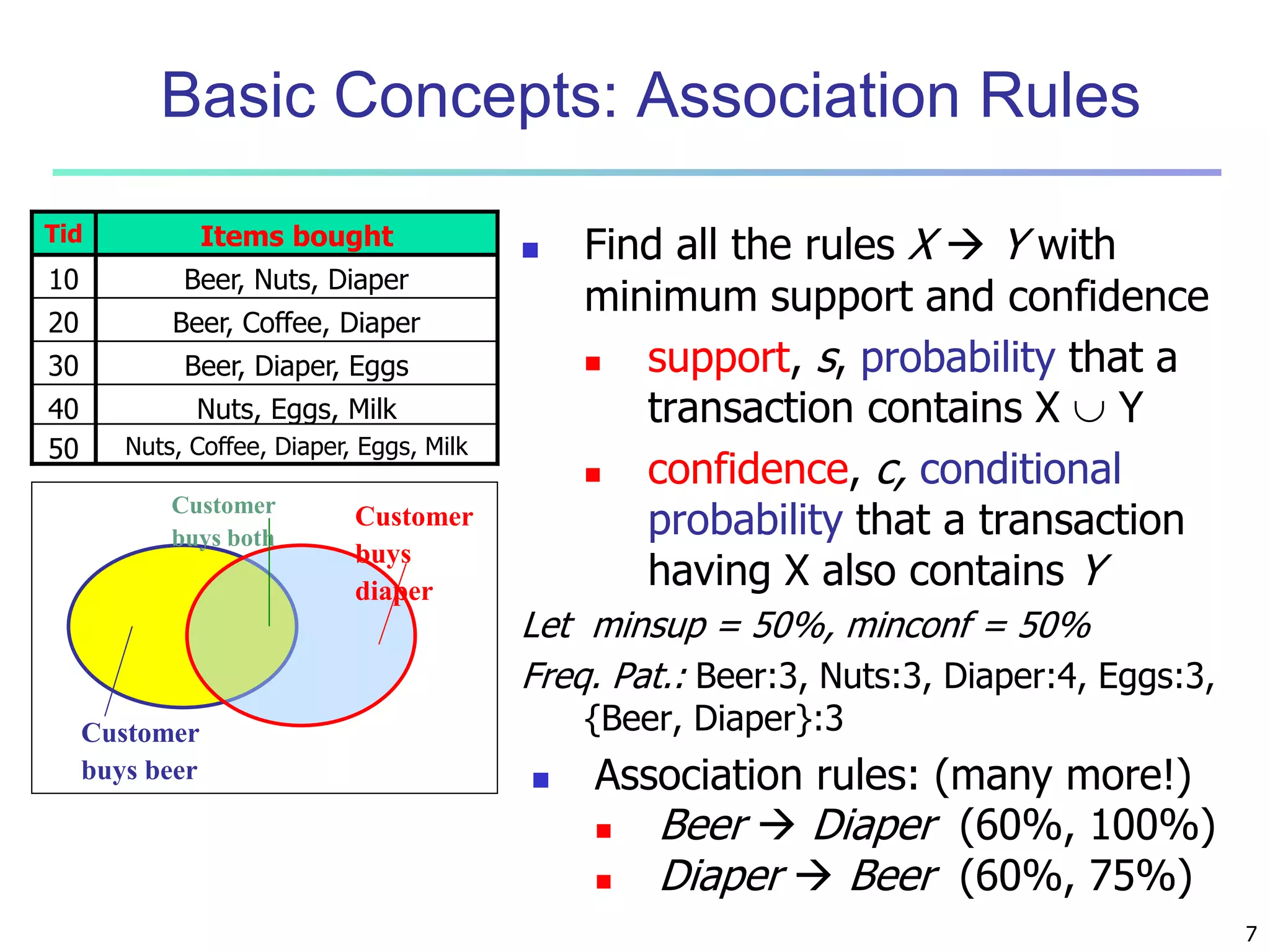

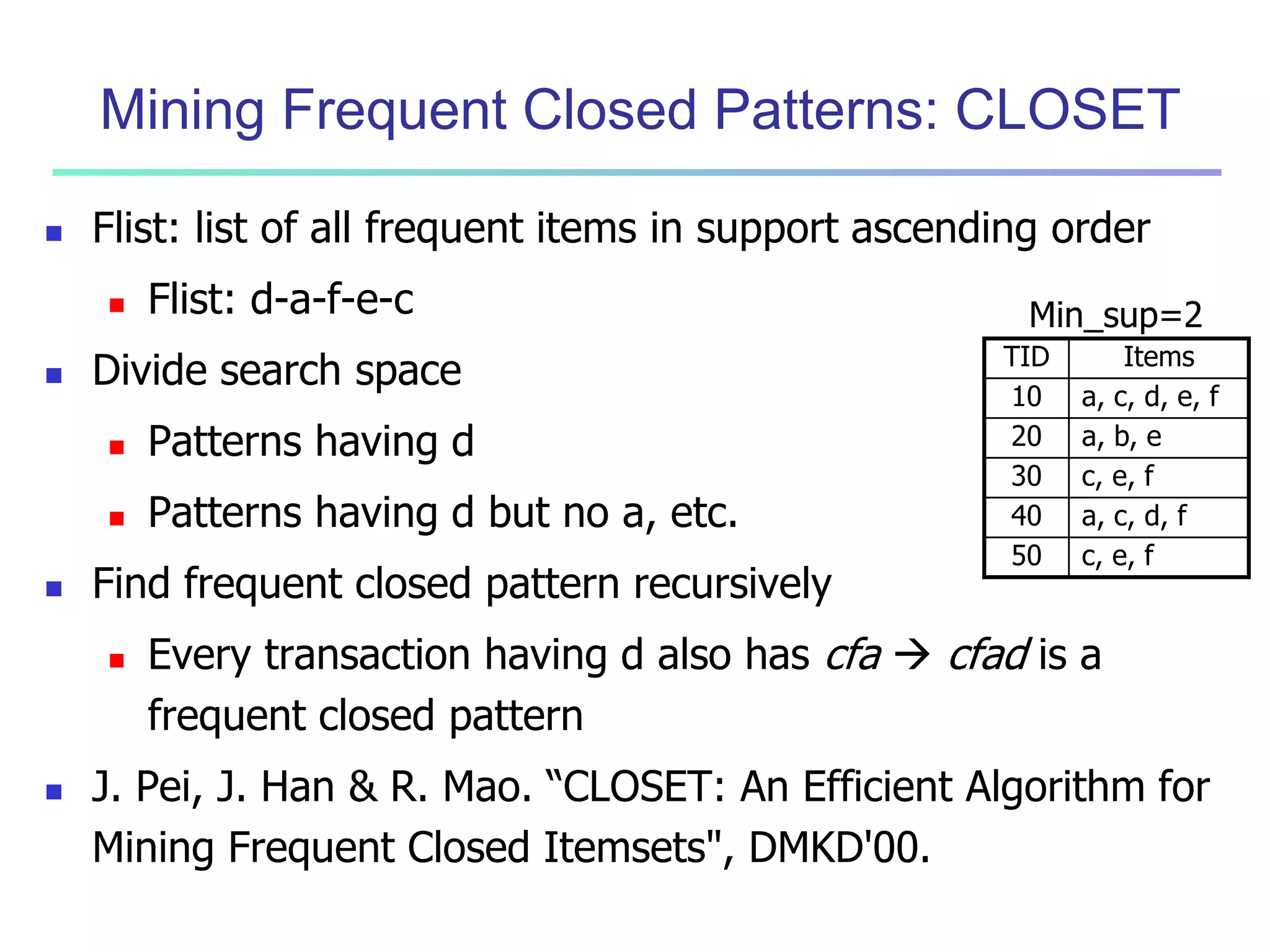

What Is Frequent Pattern Analysis?

Frequent pattern: a pattern (a set of items, subsequences, substructures,

etc.) that occurs frequently in a data set

First proposed by Agrawal, Imielinski, and Swami [AIS93] in the context

of frequent itemsets and association rule mining

Motivation: Finding inherent regularities in data

What products were often purchased together?— Beer and diapers?!

What are the subsequent purchases after buying a PC?

What kinds of DNA are sensitive to this new drug?

Can we automatically classify web documents?

Applications

Basket data analysis, cross-marketing, catalog design, sale campaign

analysis, Web log (click stream) analysis, and DNA sequence analysis.](https://image.slidesharecdn.com/06fpbasic-140913212230-phpapp02/75/Data-Mining-Concepts-and-Techniques_-Chapter-6-Mining-Frequent-Patterns-Association-and-Correlations-Basic-Concepts-and-Methods-4-2048.jpg)

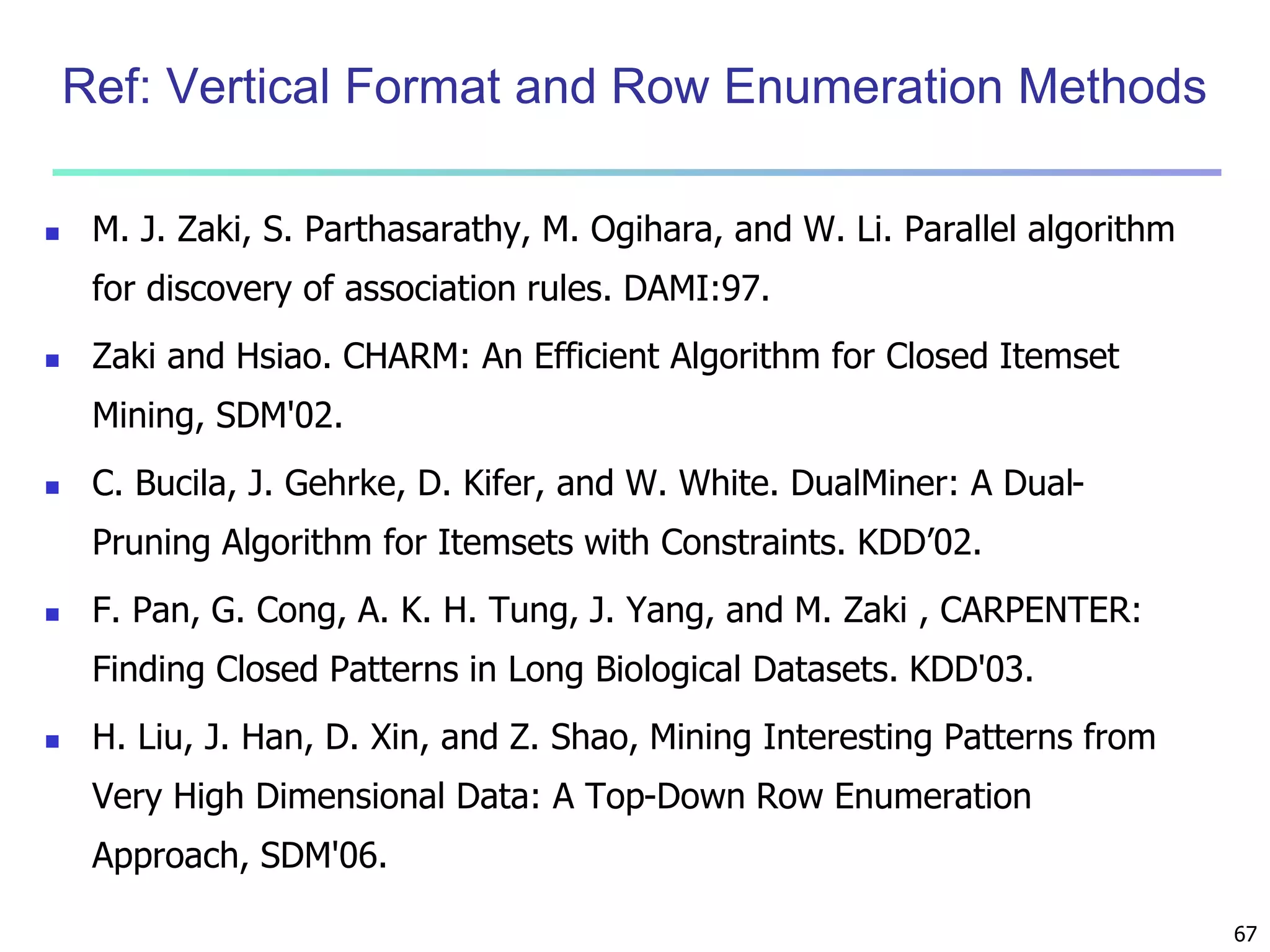

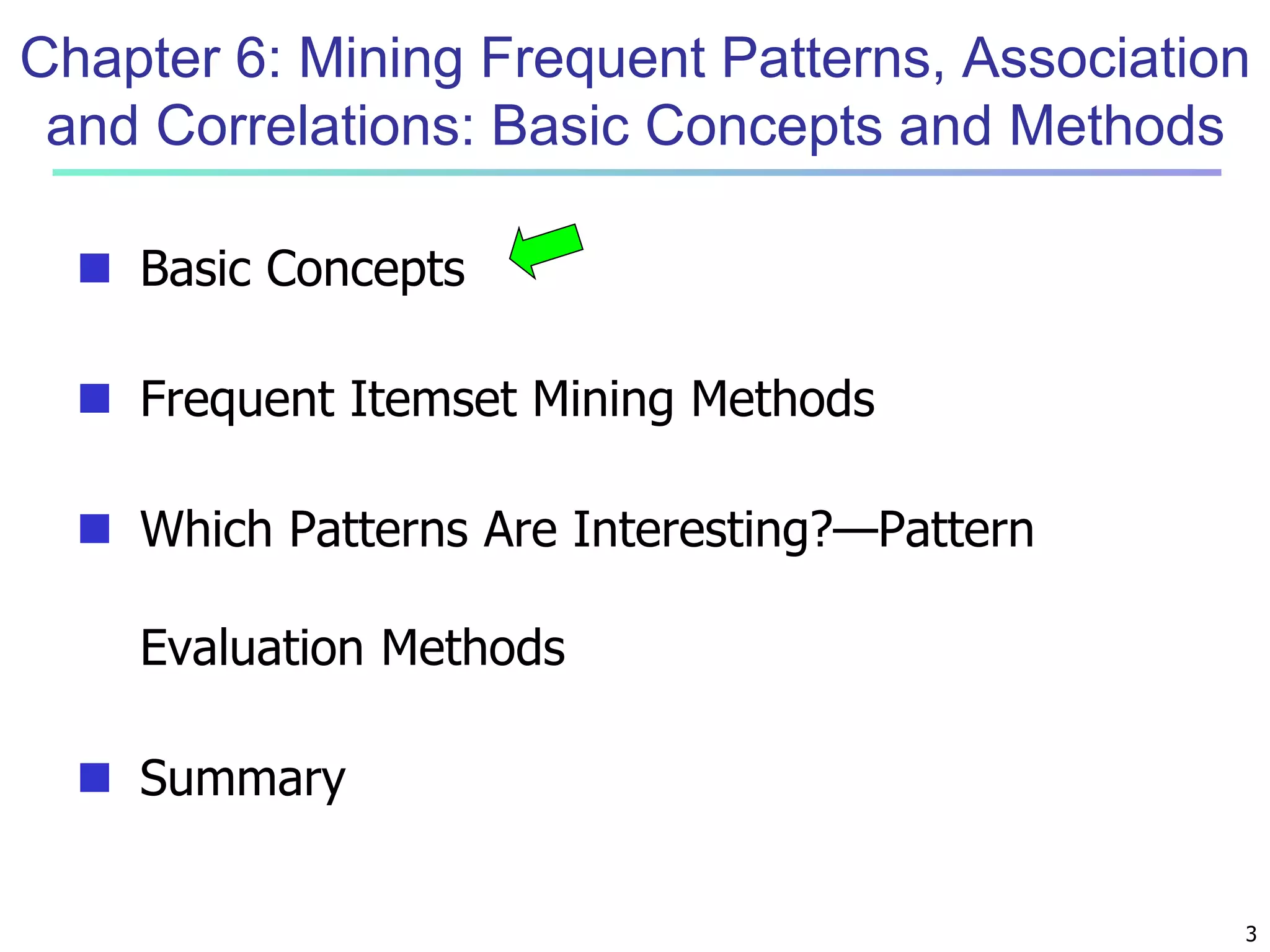

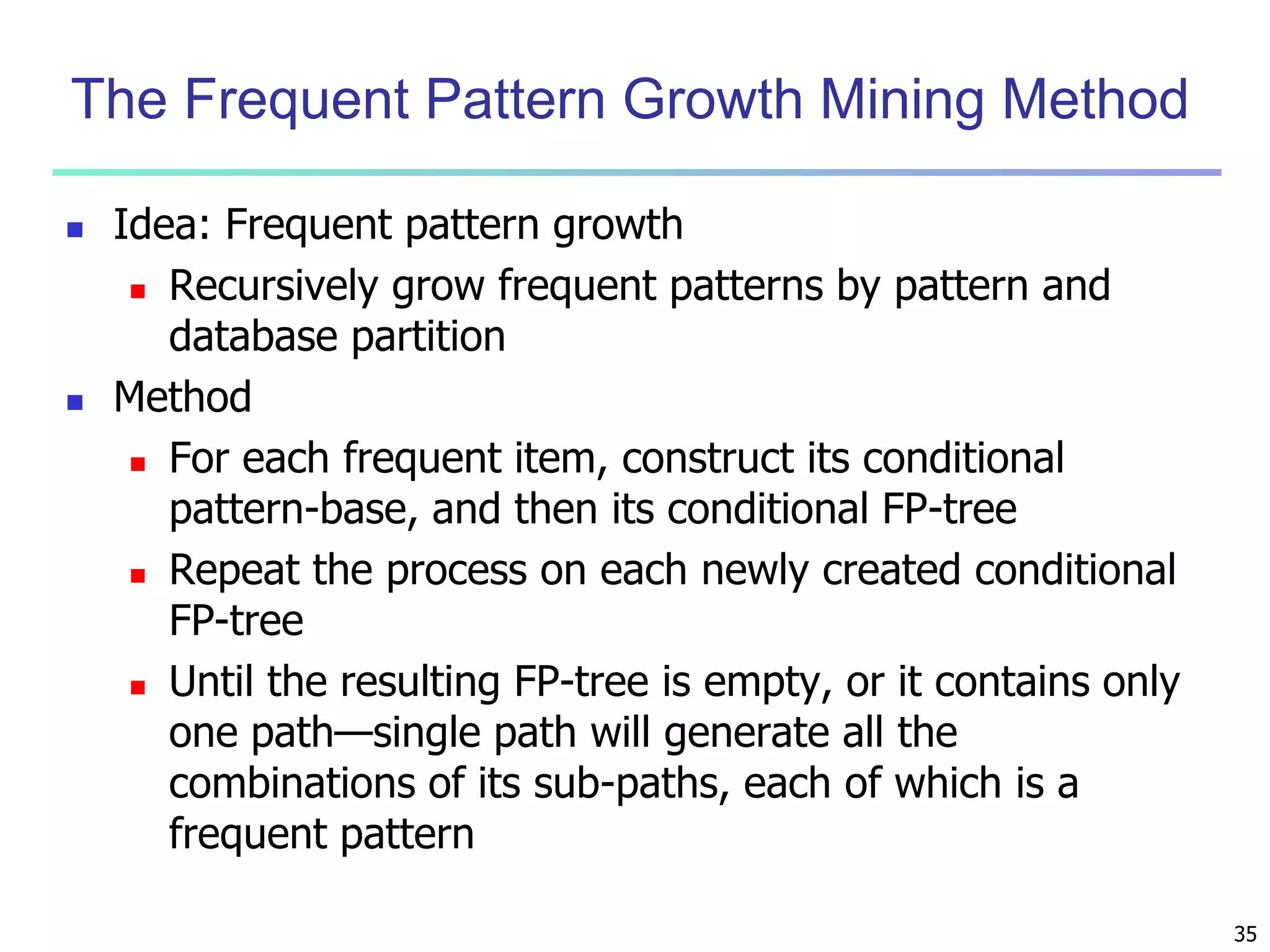

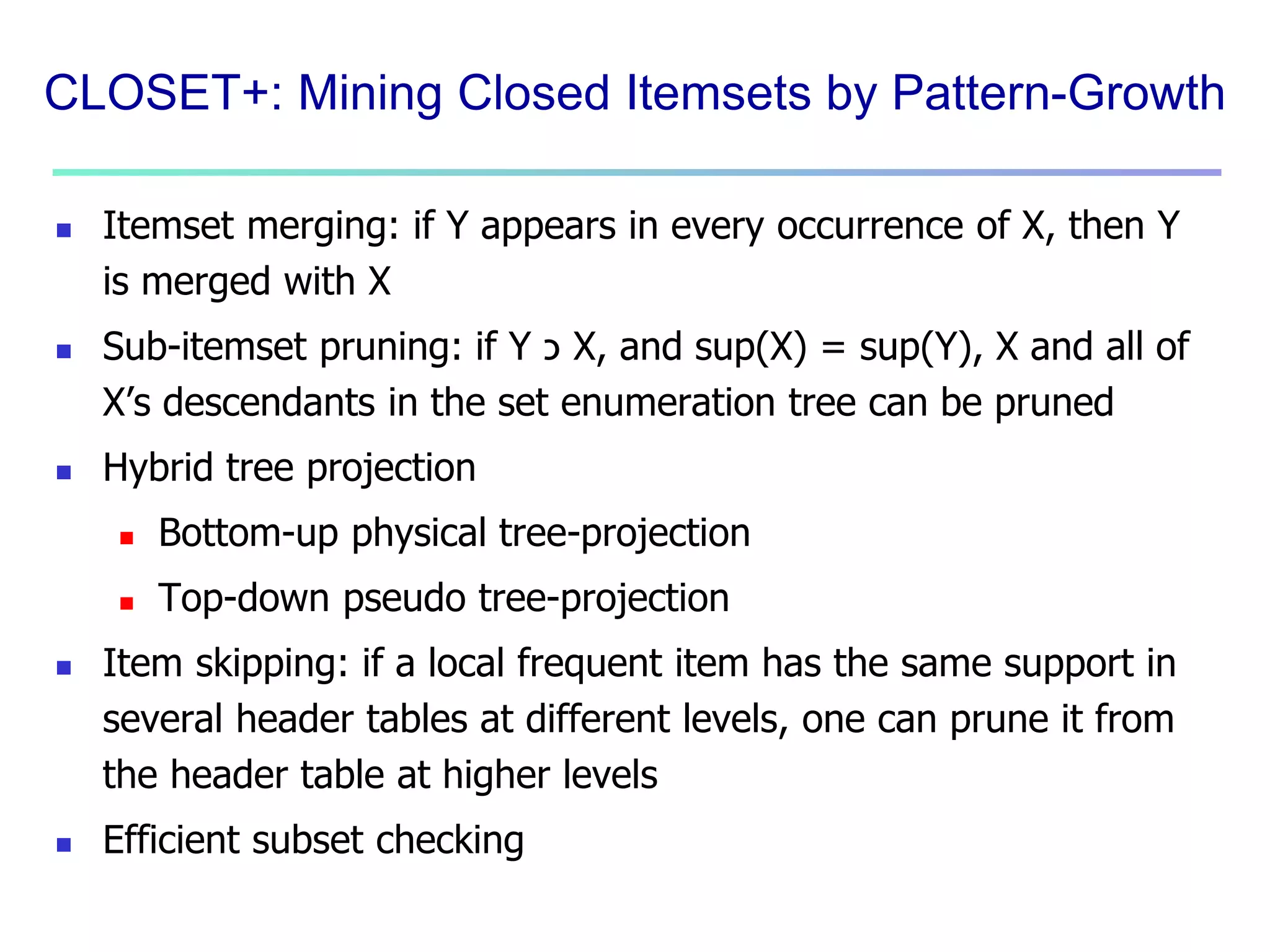

![19

Candidate Generation: An SQL Implementation

SQL Implementation of candidate generation

Suppose the items in Lk-1 are listed in an order

Step 1: self-joining Lk-1

insert into Ck

select p.item1, p.item2, …, p.itemk-1, q.itemk-1

from Lk-1 p, Lk-1 q

where p.item1=q.item1, …, p.itemk-2=q.itemk-2, p.itemk-1 <

q.itemk-1

Step 2: pruning

forall itemsets c in Ck do

forall (k-1)-subsets s of c do

if (s is not in Lk-1) then delete c from Ck

Use object-relational extensions like UDFs, BLOBs, and Table functions for

efficient implementation [S. Sarawagi, S. Thomas, and R. Agrawal. Integrating

association rule mining with relational database systems: Alternatives and

implications. SIGMOD’98]](https://image.slidesharecdn.com/06fpbasic-140913212230-phpapp02/75/Data-Mining-Concepts-and-Techniques_-Chapter-6-Mining-Frequent-Patterns-Association-and-Correlations-Basic-Concepts-and-Methods-17-2048.jpg)

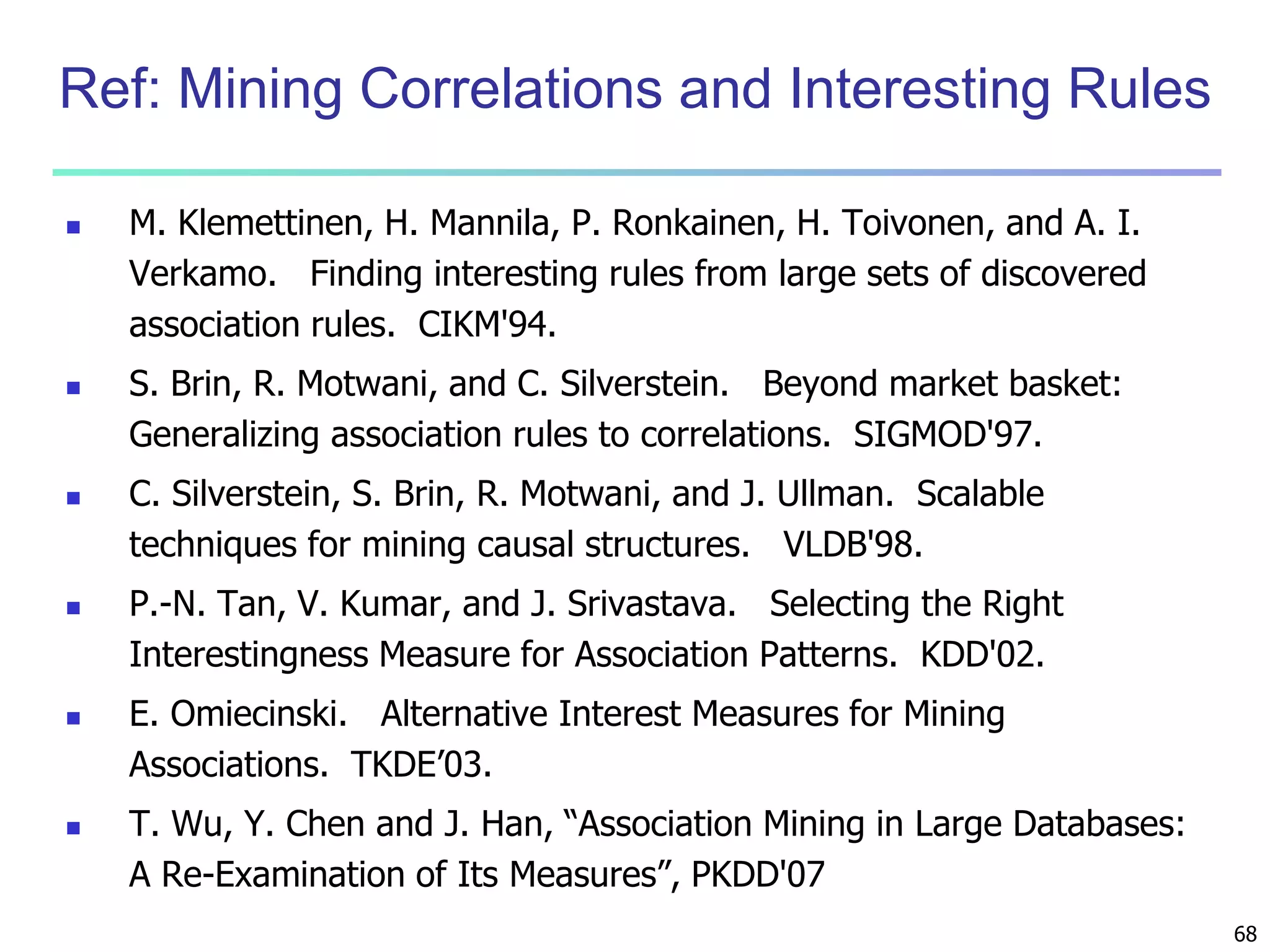

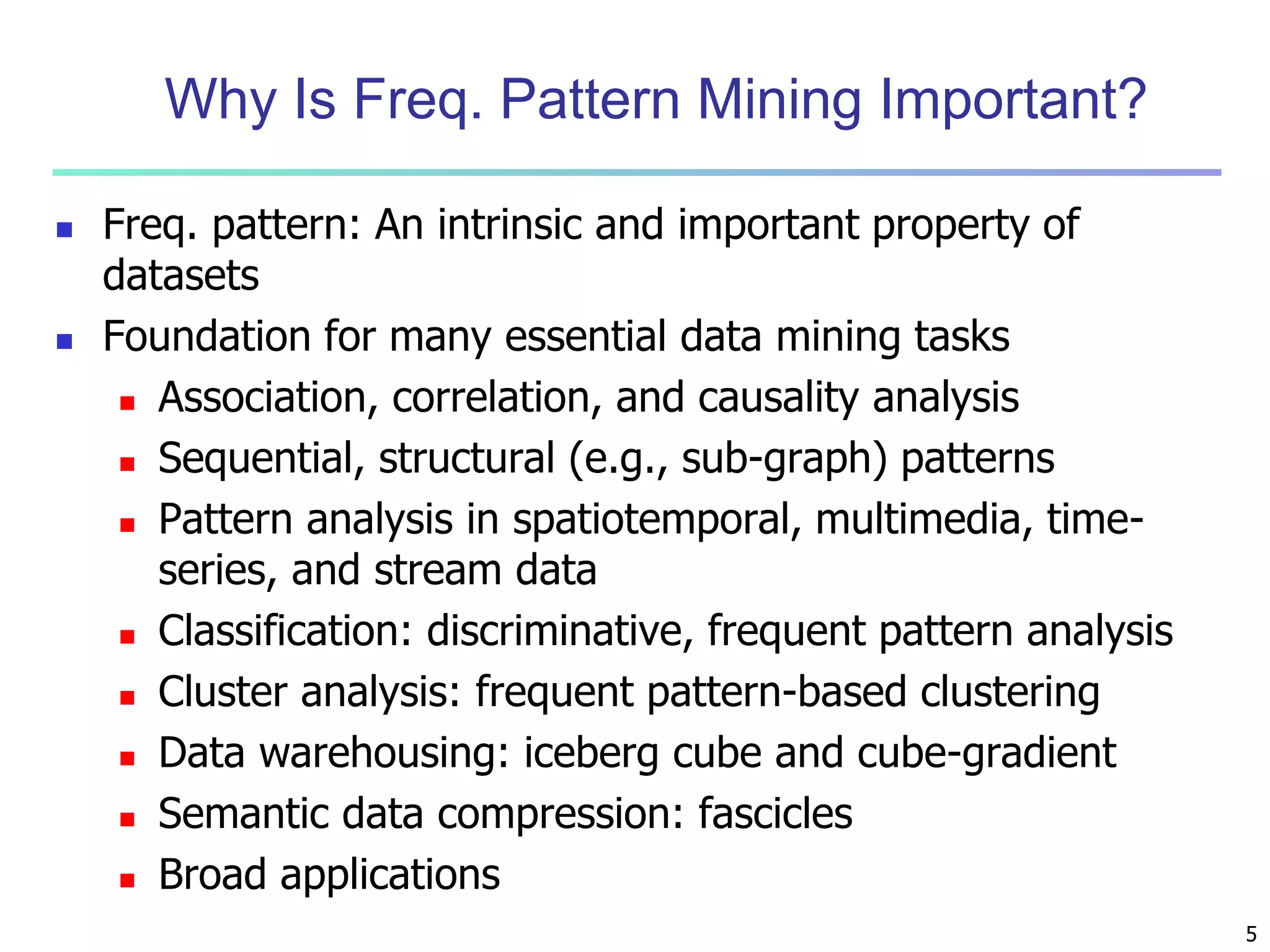

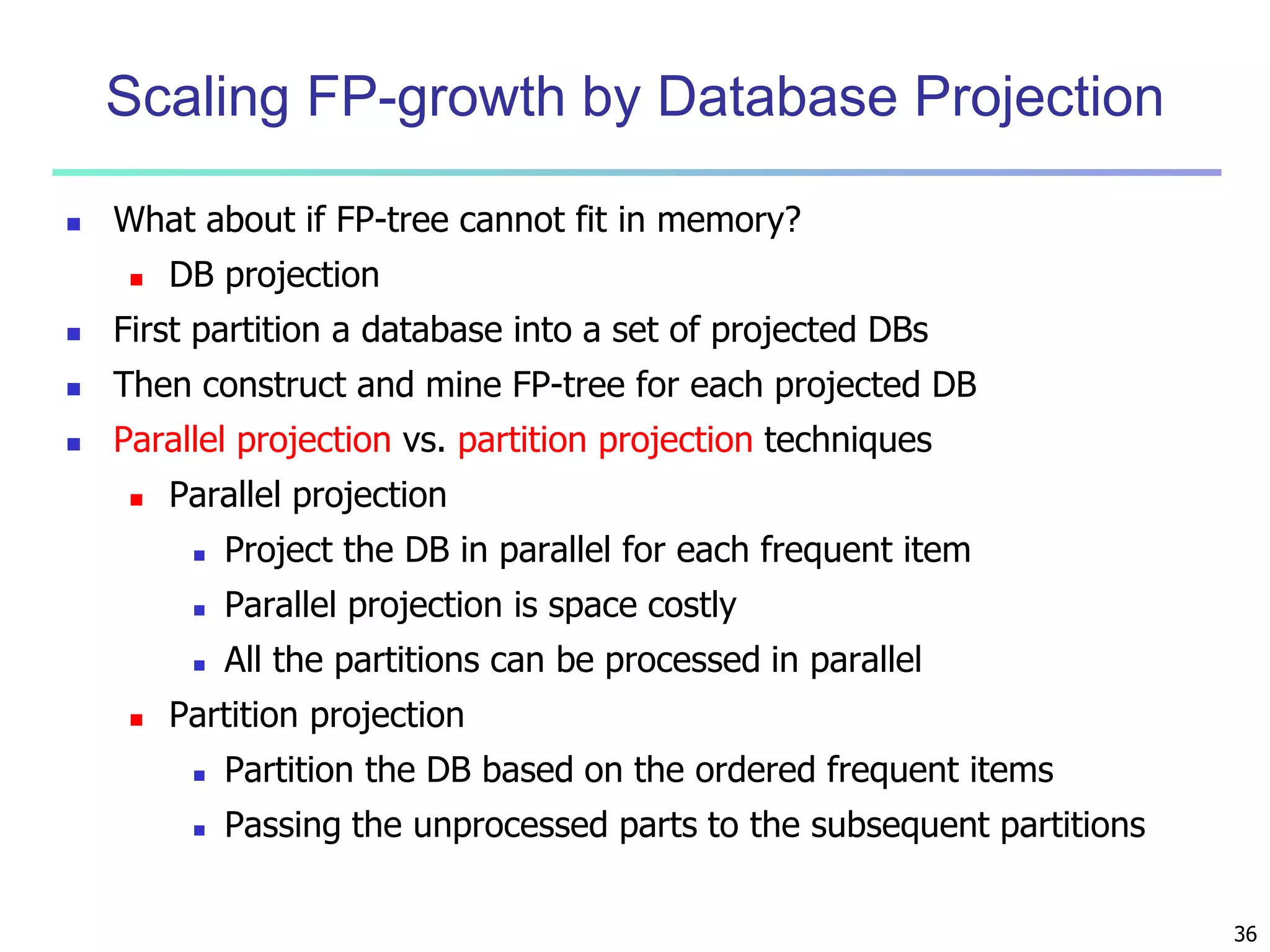

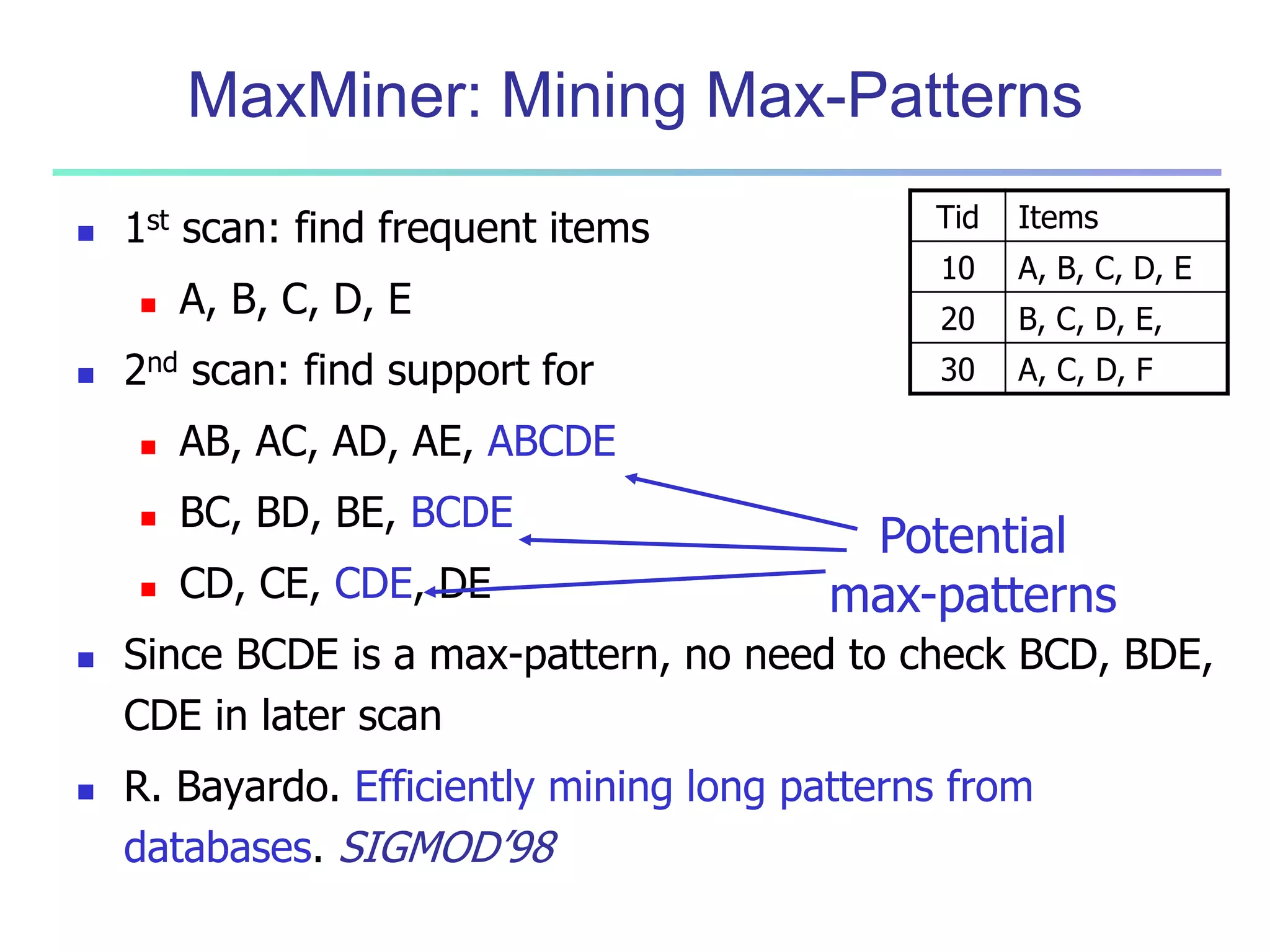

![55

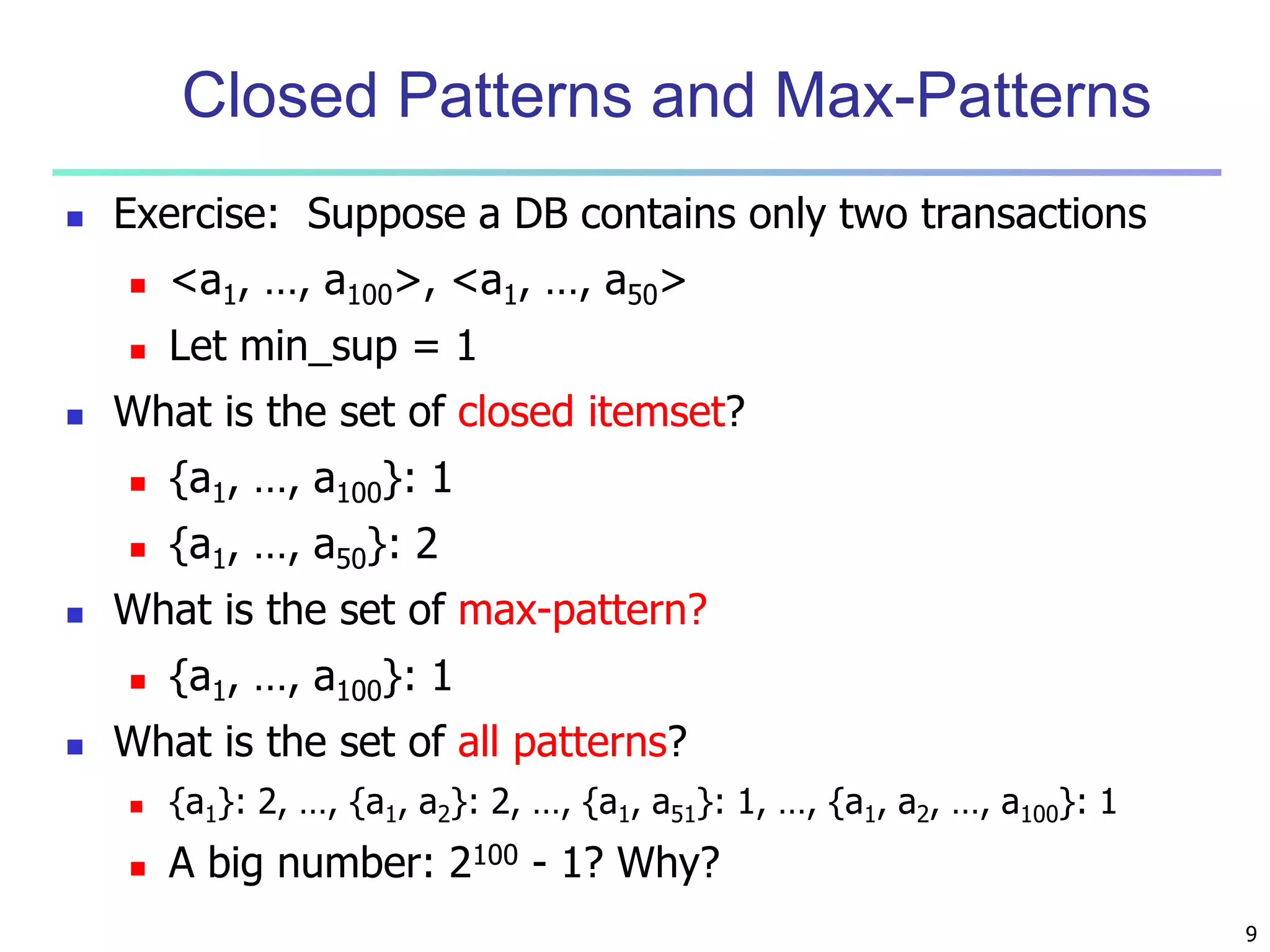

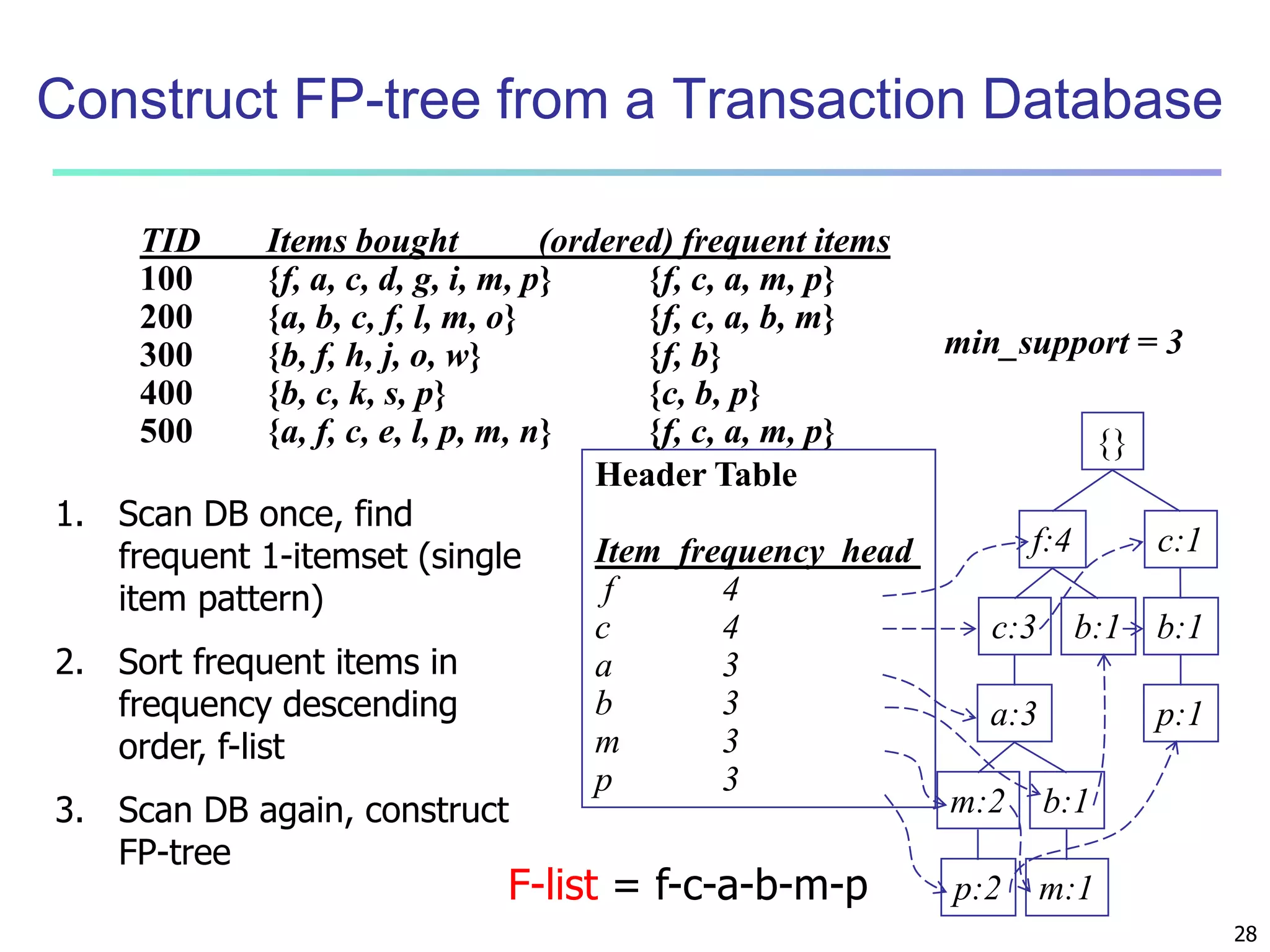

Interestingness Measure: Correlations (Lift)

play basketball eat cereal [40%, 66.7%] is misleading

The overall % of students eating cereal is 75% > 66.7%.

play basketball not eat cereal [20%, 33.3%] is more accurate,

although with lower support and confidence

Measure of dependent/correlated events: lift

0.89

P A B

( )

P A P B

2000/ 5000

lif t(B,C)

3000/ 5000*3750/ 5000

Basketball Not basketball Sum (row)

Cereal 2000 1750 3750

Not cereal 1000 250 1250

Sum(col.) 3000 2000 5000

( ) ( )

lift

1.33

1000/ 5000

lif t(B,C)

3000/ 5000*1250/ 5000](https://image.slidesharecdn.com/06fpbasic-140913212230-phpapp02/75/Data-Mining-Concepts-and-Techniques_-Chapter-6-Mining-Frequent-Patterns-Association-and-Correlations-Basic-Concepts-and-Methods-53-2048.jpg)

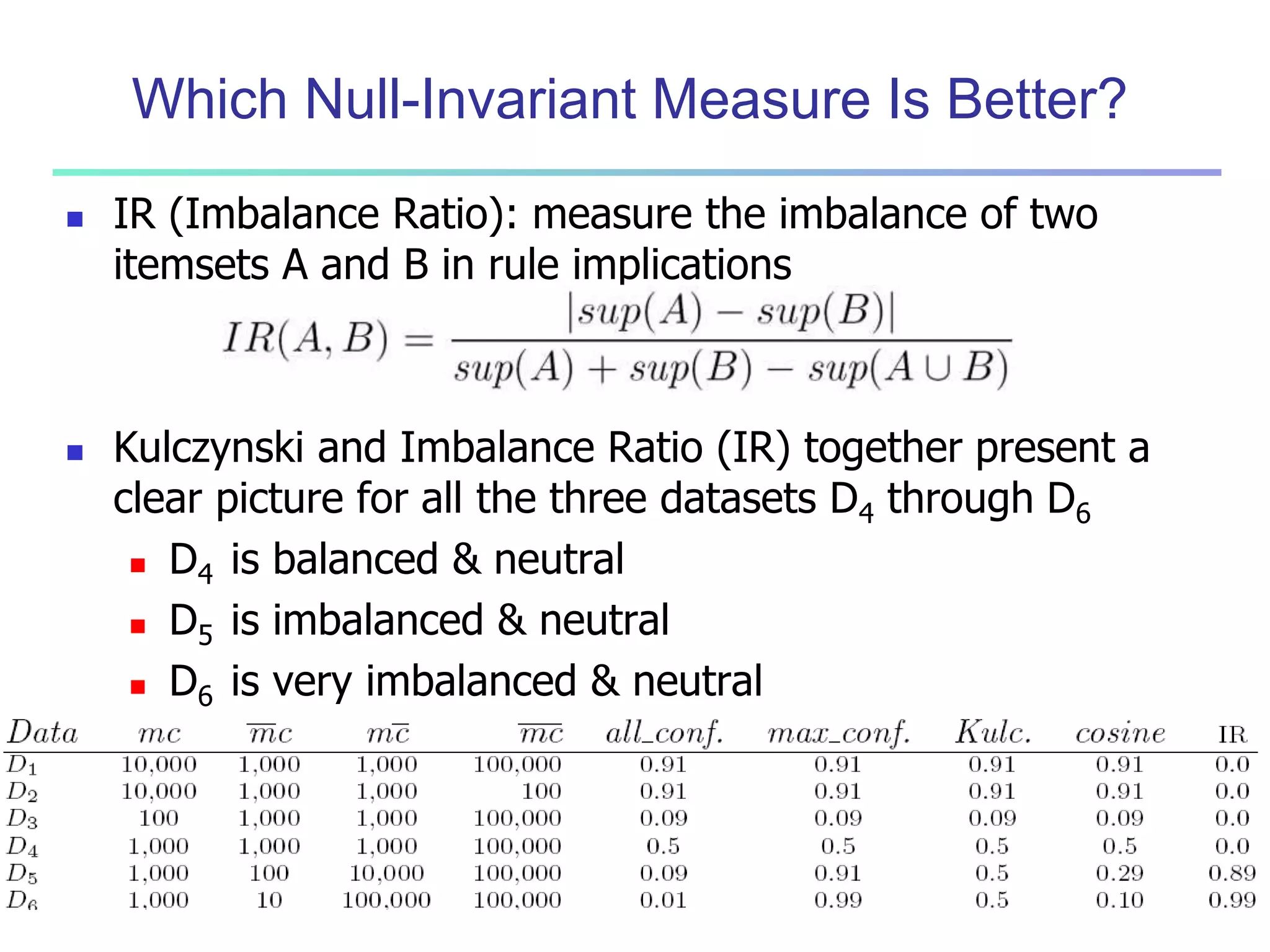

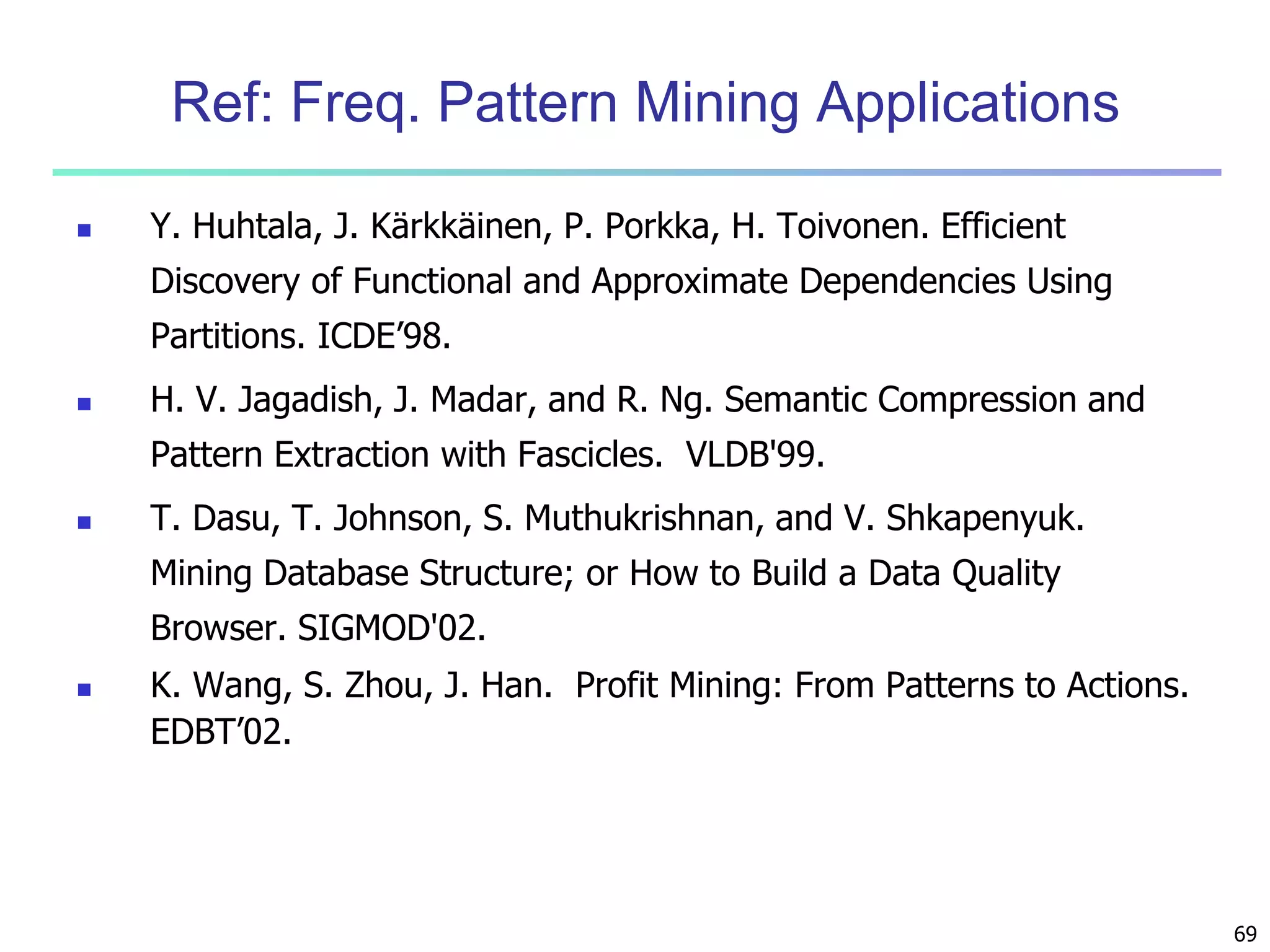

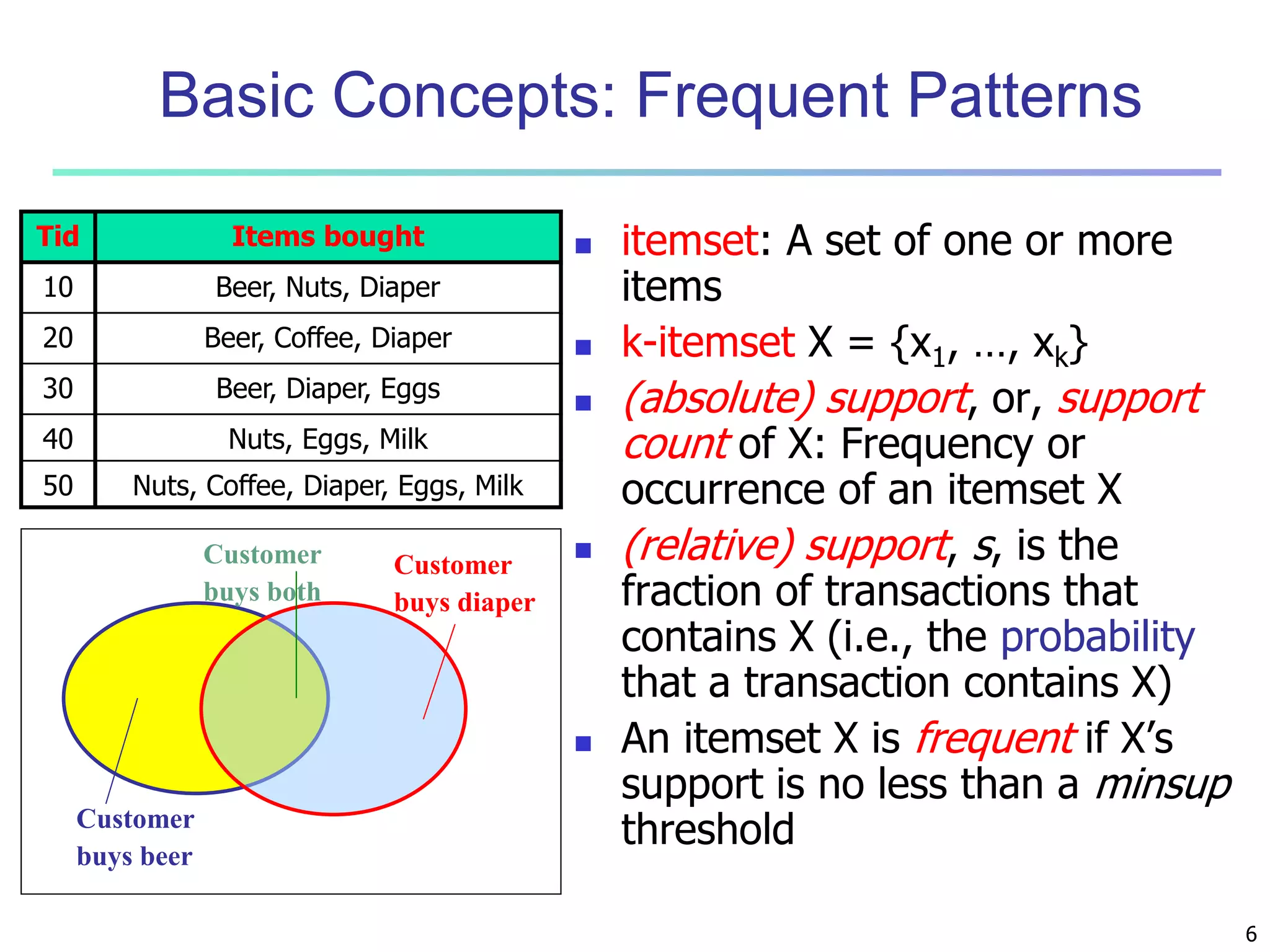

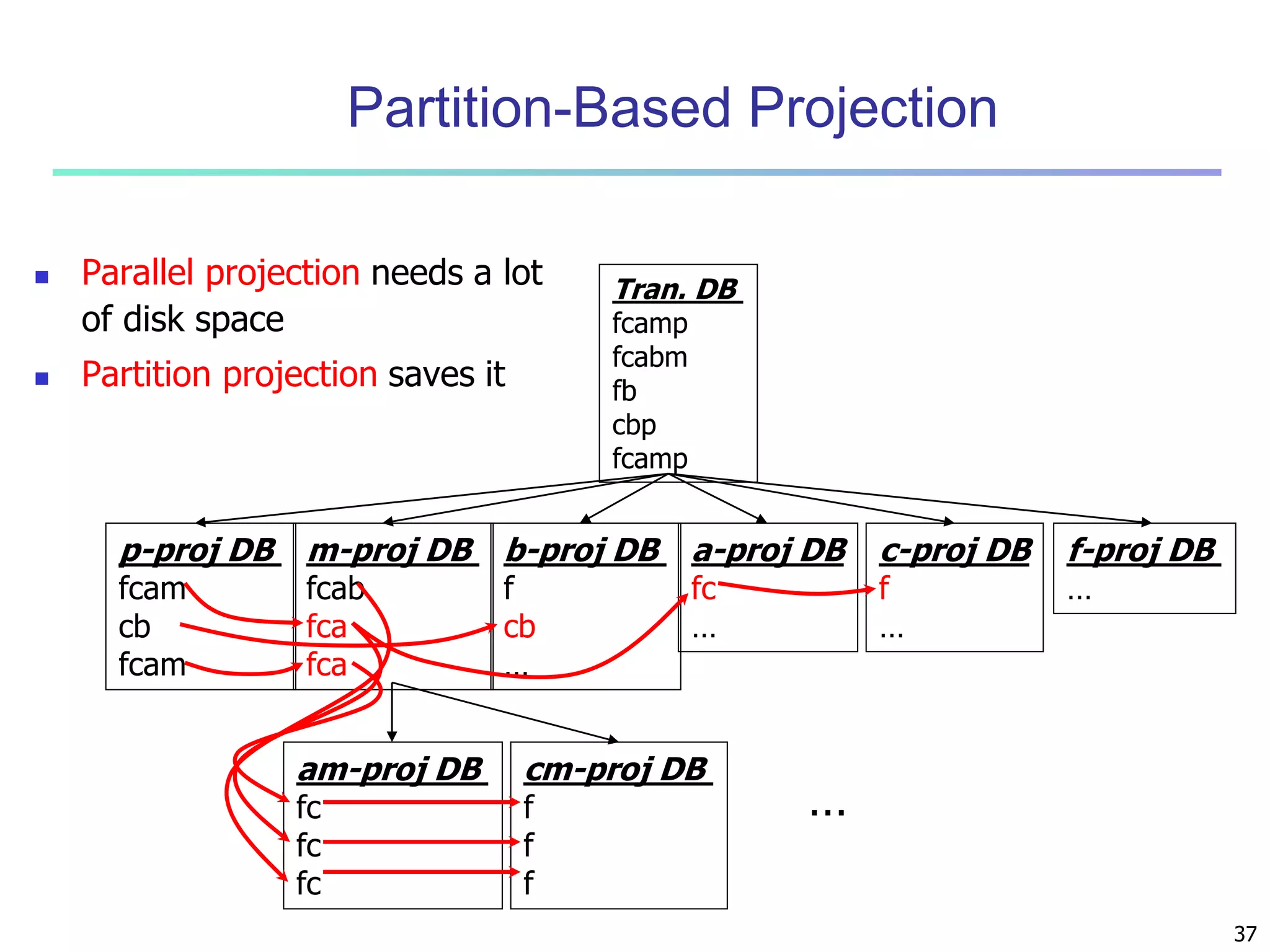

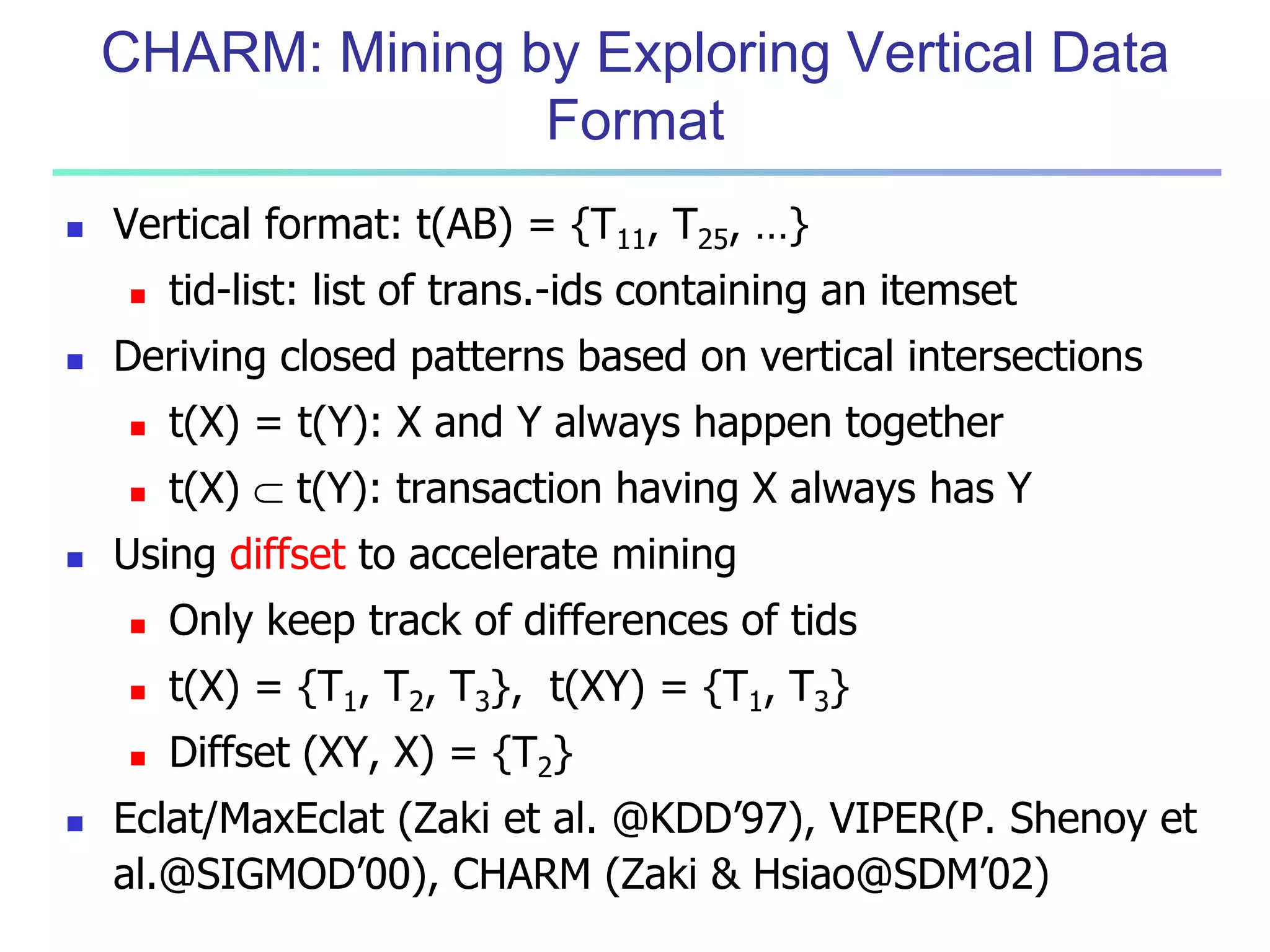

![56

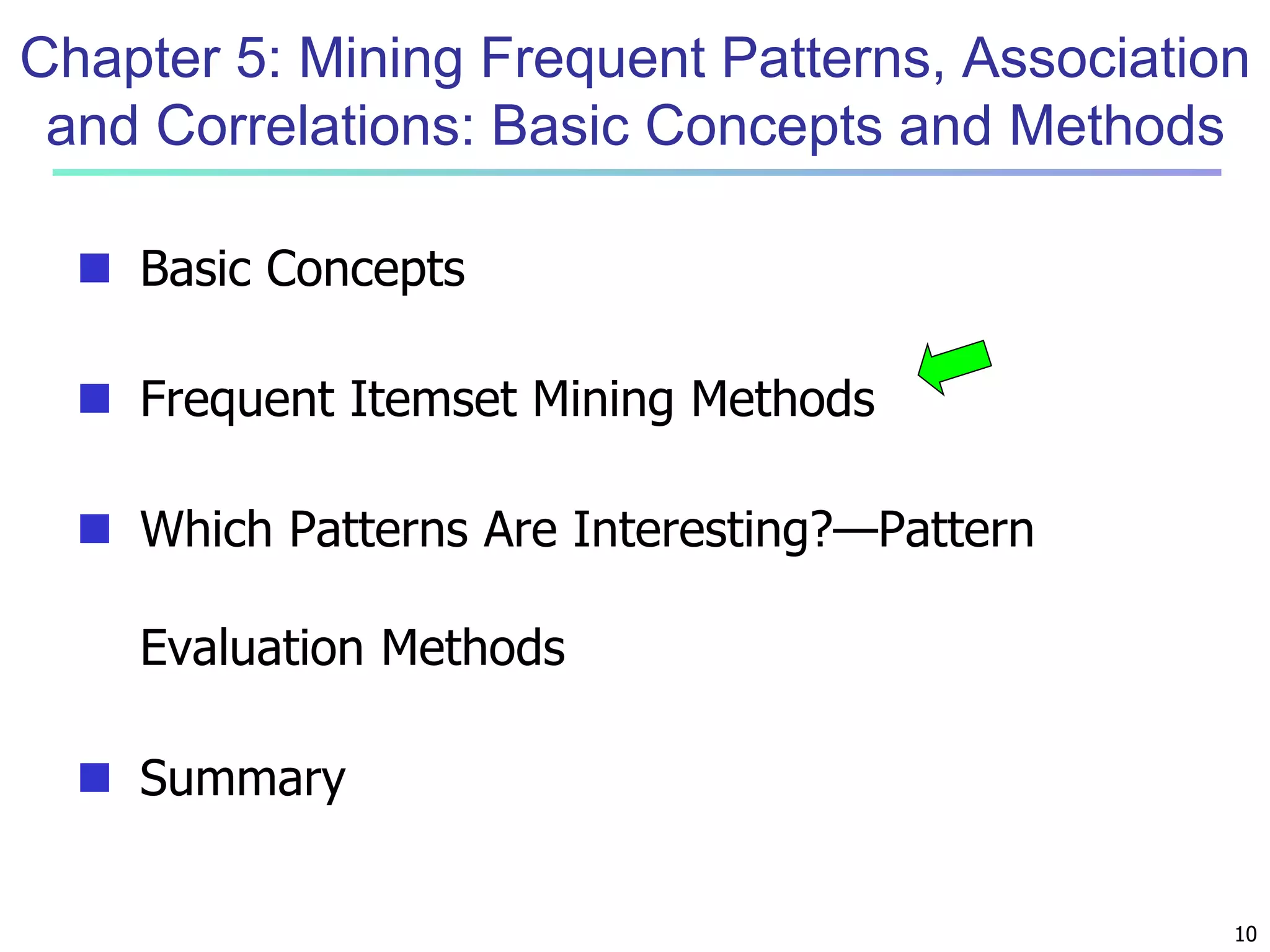

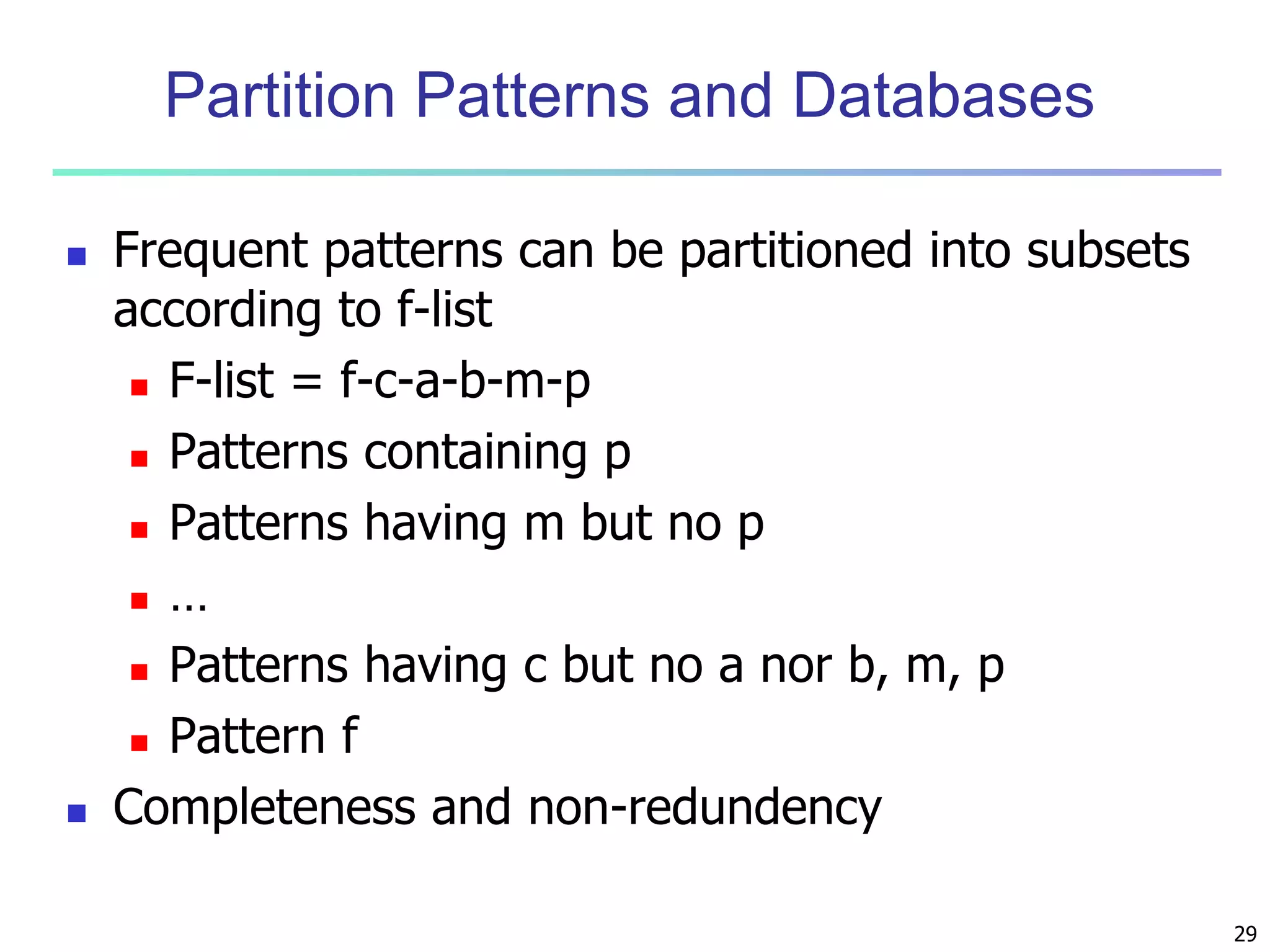

Are lift and 2 Good Measures of Correlation?

“Buy walnuts buy

milk [1%, 80%]” is

misleading if 85% of

customers buy milk

Support and confidence

are not good to indicate

correlations

Over 20 interestingness

measures have been

proposed (see Tan,

Kumar, Sritastava

@KDD’02)

Which are good ones?](https://image.slidesharecdn.com/06fpbasic-140913212230-phpapp02/75/Data-Mining-Concepts-and-Techniques_-Chapter-6-Mining-Frequent-Patterns-Association-and-Correlations-Basic-Concepts-and-Methods-54-2048.jpg)